Most AI research teams, when faced with a choice between solving a hard problem and routing around it, choose the detour. Subnet 3 trained an entire 72 billion parameter dense model specifically to avoid working with Mixture of Experts (MOE) architecture, even though MOE would have cut the training cost in half.

The reason is that decentralized MOE training is an engineering nightmare that most serious labs have quietly decided is not worth the pain.

Eyad Gomaa decided to solve it with his project called Quasar (Bittensor Subnet 24), and alongside the MOE problem, it is also tackling long context understanding, the other major unsolved bottleneck in modern AI.

The conversation he had on the Ventura Labs podcast covers the architecture, the mission, and the personal journey behind both.

The Two Problems at the Center of Everything

One of the most important parts of the conversation came when Eyad was asked a series of questions aimed at simplifying the problems Quasar is solving:

a. Why is long context such a hard problem?

Eyad: “It comes down to the root of the models themselves. The Transformer architecture’s attention mechanism looks at all tokens at once, which worked fine for short context. But now people rely on AI daily, and the architecture itself is the bottleneck. Imagine we have AGI, the smartest AI, and we ask it to solve cancer, but it can’t. Sorry, you had the limit for today. This is a pretty stupid idea.”

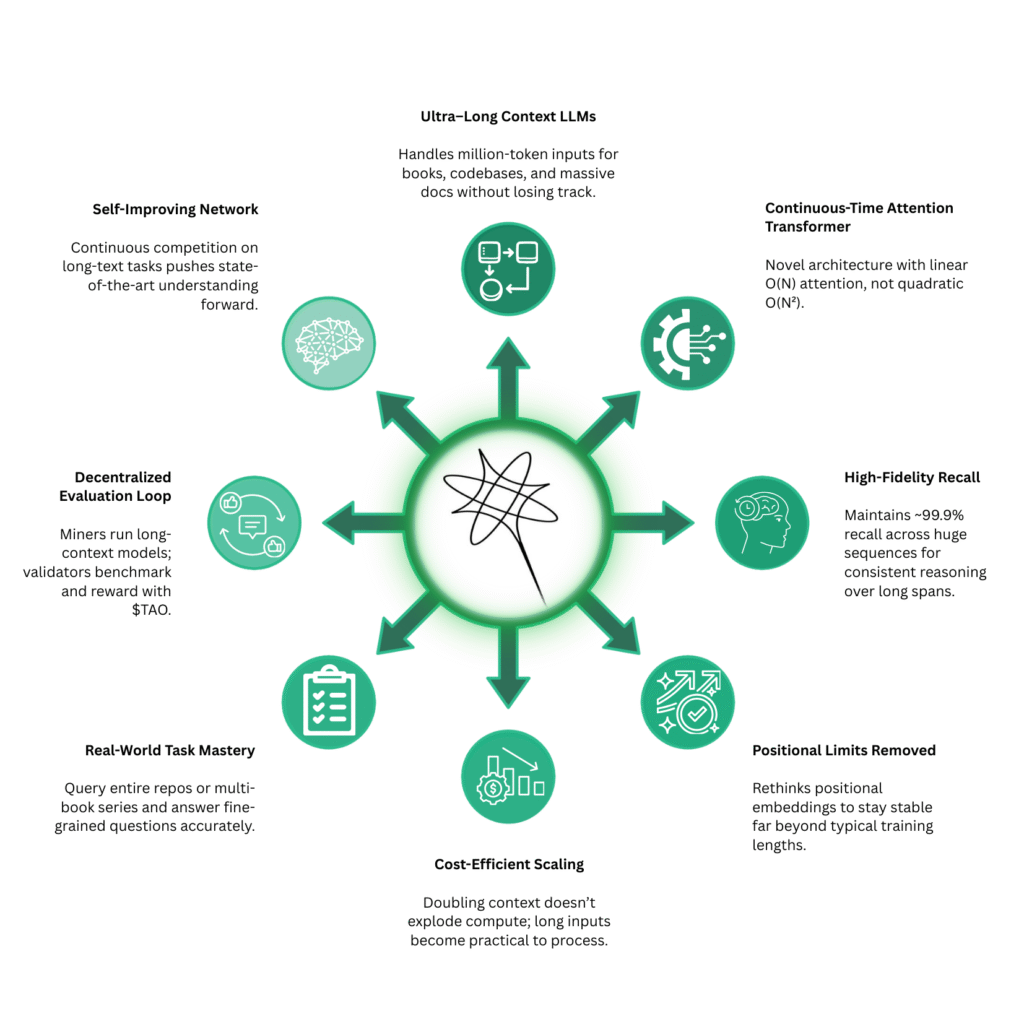

Quasar’s answer is what the team calls continuous time attention, a mechanism they describe as Transformers 2.0, processing tokens linearly with no increasing cost or performance drop. The current model handles 10 million tokens effectively, and the roadmap points toward an infinite context window.
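To make the scaling argument concrete, the sketch below compares how much work grows with context length when every token is scored against every other token, versus a mechanism that touches each token once. It is only an arithmetic illustration: the function names are hypothetical, and `linear_pass_steps` stands in for any linear-cost mechanism rather than for Quasar's actual continuous time attention, whose internals the conversation does not spell out.

```python
def full_attention_pairs(num_tokens: int) -> int:
    """Token pairs scored when every token attends to every token."""
    return num_tokens * num_tokens


def linear_pass_steps(num_tokens: int) -> int:
    """Steps for a hypothetical mechanism that touches each token once."""
    return num_tokens


for n in (1_000, 100_000, 10_000_000):
    # The ratio equals n itself, so the gap widens as context grows.
    ratio = full_attention_pairs(n) // linear_pass_steps(n)
    print(f"{n:>12,} tokens: full attention does {ratio:,}x the work")
```

At the 10 million token mark mentioned above, the all-pairs count reaches 10^14, which is why the attention mechanism itself becomes the bottleneck.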

b. Why is decentralized MOE training so much harder than dense model training?

Eyad: “The main issue is the router. In MOE setups, you have experts that each specialise in something: coding, math, and so on. Routers sometimes go in unexpected ways that you can’t fully control, even in a centralized environment. Adding a decentralized setup means each miner handles a different expert from a different location. That’s the main bottleneck.”
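For readers unfamiliar with routers, here is a minimal sketch of generic top-1 routing, where each token is sent to whichever expert scores highest. This is a textbook illustration, not Quasar's router; the scores and the three-expert setup are made up for the example.

```python
import math


def softmax(scores):
    """Turn raw router scores into probabilities (numerically stable)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top1(token_scores):
    """Return (expert_index, gate_weight) for one token's router scores."""
    probs = softmax(token_scores)
    expert = max(range(len(probs)), key=probs.__getitem__)
    return expert, probs[expert]


# Hypothetical router scores for three tokens over three experts.
batch = [[2.0, 0.1, 0.1], [1.9, 0.2, 0.0], [0.1, 0.2, 1.5]]
assignments = [route_top1(scores)[0] for scores in batch]
load = [assignments.count(e) for e in range(3)]
print(assignments, load)  # [0, 0, 2] [2, 0, 1]
```

Even in this tiny batch, one expert receives no tokens at all. When each expert lives on a different miner, that kind of uneven routing means some miners sit idle while others are overloaded.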

Quasar’s breakthrough is a new MOE design enabling one-to-one communication between experts rather than the all-to-all communication that makes standard MOE training incompatible with decentralized setups.
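A rough way to see why the communication pattern matters is to count messages per training step. The sketch below assumes that "one-to-one" means each expert sends to exactly one peer per step; that reading is an assumption for illustration, since the actual topology is not described in the conversation.

```python
def all_to_all_messages(num_experts: int) -> int:
    """Every expert sends to every other expert once per step."""
    return num_experts * (num_experts - 1)


def one_to_one_messages(num_experts: int) -> int:
    """ASSUMPTION: each expert sends to exactly one peer per step."""
    return num_experts


for n in (8, 64, 256):
    print(f"{n:>4} experts: all-to-all={all_to_all_messages(n):>6,}  "
          f"one-to-one={one_to_one_messages(n):>4,}")
```

Under this assumption, message volume grows linearly with the number of experts instead of quadratically, which matters when every message crosses the public internet between miners rather than a datacenter interconnect.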

How the Subnet Works

The current implementation runs a knowledge distillation process where miners train Quasar models by learning from larger teacher models like Qwen. The workflow breaks down as follows:

a. The teacher model generates outputs based on training data,

b. Each miner trains their own expert with their own weights using the Quasar architecture,

c. All miners process the same distillation data simultaneously, and

d. Validators evaluate miner outputs using MOE loss metrics and measure proximity to the teacher model’s results.
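The scoring step in this workflow can be sketched as follows. The example uses KL divergence as the measure of proximity to the teacher, which is a common distillation choice but an assumption here; the miner names and probability values are invented, and the subnet's actual MOE loss metrics are not shown.

```python
import math


def kl_divergence(teacher, student, eps=1e-12):
    """KL(teacher || student) over one next-token distribution."""
    return sum(
        t * math.log((t + eps) / (s + eps))
        for t, s in zip(teacher, student)
        if t > 0
    )


# Hypothetical teacher output and two miners' outputs for the same input.
teacher_probs = [0.7, 0.2, 0.1]
miner_probs = {
    "miner_a": [0.65, 0.25, 0.10],
    "miner_b": [0.30, 0.40, 0.30],
}

# Lower divergence means the miner is closer to the teacher.
scores = {name: kl_divergence(teacher_probs, p) for name, p in miner_probs.items()}
ranking = sorted(scores, key=scores.get)
print(ranking)  # ['miner_a', 'miner_b']
```

Because all miners process the same distillation data, their divergences are directly comparable, and a validator could rank them on a shared scale.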

What separates this from other distillation subnets is the underlying architecture. As Eyad put it: “Arbos just distills into dense models. But we introduced the Quasar architecture with long context. You’re training a model that already processes millions of tokens. It’s not just any model.”

Why Open Source and Decentralized

For Eyad, building open source and decentralized is a conviction that runs through everything Quasar does.

He noted: “Right now, all we do is pray that AI labs don’t solve AGI right away and we don’t lose our jobs. That’s kind of what everyone is doing, just watching and waiting. And I said, this is a really stupid idea. The people need to decide what’s going to happen with AI. It’s the most important technology, and we just need to decide what it does and what it doesn’t. That’s why I wanted to make it open and decentralized. That’s why we’re building here.”

The Origin Story

Eyad had been working on the Quasar architecture for two years before Bittensor entered the picture. He reached out to Joseph Jacks of OSS Capital, showed him the architecture with no models and no product behind it, and received what felt like a rejection: build on Bittensor first. He took the advice seriously, connected with DSV Fund and the Bitstarter team, and launched Subnet 24.

Then Bittensor’s co-founder, Jacob ‘Const’ Steeves, reached out unprompted after reviewing the research: “The next day I found him in my DMs telling me, I will buy you the subnet and give you all the emissions so you can just work fully on this. Do you agree?” Const now holds the subnet owner key, mentors the team actively, and takes almost nothing in return. “It’s really rare in the crypto space to find someone like Const,” Eyad said. “Thank God we did.”

The Roadmap

Quasar has a partnership with Chutes (Subnet 64) to host the model for inference once ready, and the team is building its own API alongside a product called Quasar Copilot, designed to bring long-context capabilities into daily workflows. A data partnership with a major unnamed player will supply training data previously only available to frontier labs.

The three-year goal was clearly stated: “I would like to see a state-of-the-art model coming from Bittensor that people actually use on a daily basis. It will be open and fully decentralized, and everyone can control how their future is going to look like.”

What Bittensor Did for the Team

Perhaps the most honest part of the conversation was when Eyad reflected on how Bittensor changed not just what they are building, but how they think about building it.

“Before Bittensor, we were simply nerds building something cool. But we just moved from that era into being a real company that builds value and not just something cool. That’s what I thank Bittensor for.”

He also described Bittensor in a way that cuts through the noise for anyone still trying to understand what the network actually is: “I would say it is Y Combinator, but only for real AI work that focuses on producing something with value.”

Conclusion

Eyad Gomaa is among the youngest subnet owners in Bittensor, and Quasar may be one of the most technically ambitious projects on the network. The team came in with two years of architecture work, no crypto background, and a willingness to take on problems that better-resourced labs have chosen to avoid.

The philosophical conviction behind it, that people should control what AI does and does not do, is as important to the project as the engineering itself. Quasar is a deliberate attempt to close the gap between open-source models and the frontier, built on architecture designed from the ground up for the problems no one else wanted to solve first.
