Training a frontier AI model requires hundreds of specialized chips communicating at extraordinary speeds over infrastructure that only a handful of organizations in the world can afford to operate. That constraint is not incidental to how the AI industry is structured. It is, in large part, why power sits where it sits.

The core problem keeping it that way is bandwidth. Moving data between machines during training requires speeds that consumer internet connections cannot match. Until that gap closes, large-scale AI training stays locked inside the datacenter, and the datacenter stays locked behind the balance sheets of the largest technology companies. Macrocosmos, the team behind IOTA (Bittensor Subnet 9), just published a paper proposing a concrete architectural solution to that problem. They call it ResBM, the Residual Bottleneck Model.

The Problem

In a datacenter, machines pass data to one another over links running at roughly 300 gigabits per second. On a standard consumer internet connection, you are working with something roughly 300 times slower. At that speed, each pipeline stage spends most of its time waiting for data from the previous one: the pipeline stalls, throughput collapses, and serious training becomes impractical.
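To make that gap concrete, here is a rough back-of-the-envelope sketch in Python. The batch size, sequence length, hidden width, and 16-bit precision are illustrative assumptions, not figures from the paper. Only the 300 Gbps link speed and the 300x slowdown come from the text above.

```python
def transfer_seconds(num_bytes, link_bits_per_second):
    """Time to push num_bytes over a link, ignoring latency and protocol overhead."""
    return num_bytes * 8 / link_bits_per_second

# Hypothetical activation tensor handed from one pipeline stage to the next.
batch_size = 8          # assumption
seq_len = 2048          # assumption
hidden_dim = 4096       # assumption
bytes_per_value = 2     # assumption: 16-bit activations

activation_bytes = batch_size * seq_len * hidden_dim * bytes_per_value

datacenter_bps = 300e9                 # ~300 Gbps, as stated in the article
consumer_bps = datacenter_bps / 300    # "300 times slower"

datacenter_s = transfer_seconds(activation_bytes, datacenter_bps)
consumer_s = transfer_seconds(activation_bytes, consumer_bps)

print(f"payload per hand-off: {activation_bytes / 2**20:.0f} MiB")
print(f"datacenter link:      {datacenter_s * 1e3:.2f} ms")
print(f"consumer link:        {consumer_s * 1e3:.2f} ms")
```

Under these assumptions, a hand-off that takes a few milliseconds in the datacenter takes on the order of a second over the slower link, and it repeats at every stage boundary on every step.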

Previous compression attempts ran into the same wall every time:

a. Aggressive compression corrupts data in ways that accumulate across layers,

b. That accumulation causes model training to degrade or fail entirely, and

c. Placing compression on the residual stream, the network’s core stability pathway, disrupts the gradient flow that keeps deep networks functional.

The Macrocosmos team identified exactly why prior approaches kept failing and built their architecture specifically around avoiding it.

What ResBM Does

ResBM introduces a bottleneck layer that reduces the size of data traveling between pipeline stages, but places that compression alongside the residual stream rather than on top of it. The core stability pathway stays intact.
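As a rough illustration of what "reducing the size of data traveling between pipeline stages" means, the sketch below implements a generic linear bottleneck: a down-projection on the sending stage and an up-projection on the receiving stage. It is not the ResBM architecture. It leaves out how the paper positions the bottleneck relative to the residual stream, and the dimensions, weights, and names (`w_down`, `w_up`, `payload`) are hypothetical stand-ins.

```python
import random

def matvec(matrix, vector):
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def random_matrix(rows, cols, rng):
    """Small random weights; a stand-in for learned parameters."""
    scale = cols ** -0.5
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

rng = random.Random(0)
hidden_dim = 256       # hypothetical width of the activations inside a stage
bottleneck_dim = 2     # hypothetical; 256 / 2 gives 128x fewer values on the wire

w_down = random_matrix(bottleneck_dim, hidden_dim, rng)  # sender-side encoder
w_up = random_matrix(hidden_dim, bottleneck_dim, rng)    # receiver-side decoder

# One token's activation at the end of the sending stage.
hidden = [rng.gauss(0.0, 1.0) for _ in range(hidden_dim)]

payload = matvec(w_down, hidden)   # the only thing that crosses the network
restored = matvec(w_up, payload)   # full-width input for the next stage

compression_ratio = len(hidden) / len(payload)
print(f"values sent per token: {len(payload)} instead of {len(hidden)}")
print(f"compression ratio: {compression_ratio:.0f}x")
```

In a real model the two projections would be trained together with the rest of the network, so the next stage learns to work with whatever survives the narrow link.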

Training runs end-to-end with standard tools. It requires no specialized mathematical machinery and no modifications to the underlying training process.

What the Numbers Show

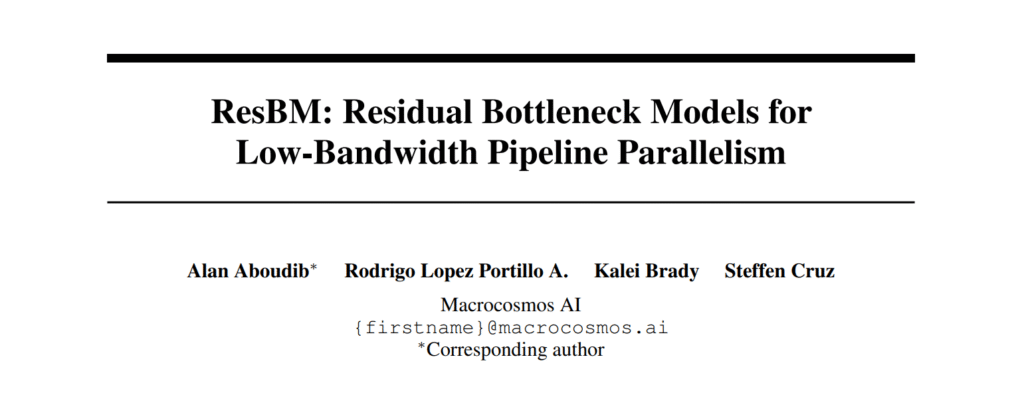

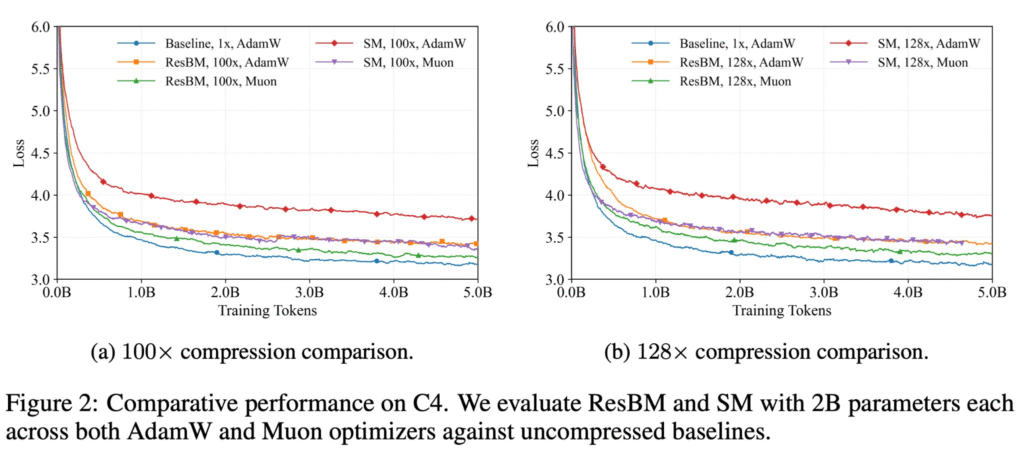

The results in the paper are direct and worth stating clearly. Across every benchmark the team tested, ResBM performed at a level that prior approaches could not reach:

a. 128x activation compression with no meaningful loss in model quality versus an uncompressed baseline,

b. Near-full training throughput recovered on an 80 Mbps consumer connection, despite running on a link 125 times slower than a datacenter setup,

c. Better results than the previous best-known approach at every compression level tested, and

d. Only 3.3% additional parameters, making the overhead negligible.
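A quick arithmetic check shows why these figures fit together. The sketch below uses only the numbers quoted in the list, plus one labeled assumption: a hypothetical one-billion-parameter base model, used to turn the 3.3% overhead into an absolute count.

```python
consumer_mbps = 80      # consumer link speed quoted above
compression = 128       # activation compression factor quoted above
slowdown = 125          # "125 times slower than a datacenter setup"

# Reference link speed implied by the 80 Mbps and 125x figures.
reference_mbps = consumer_mbps * slowdown

# Uncompressed-equivalent traffic the consumer link carries once every
# activation is 128x smaller.
effective_mbps = consumer_mbps * compression

print(f"implied reference link: {reference_mbps / 1000:.2f} Gbps")
print(f"effective consumer link: {effective_mbps / 1000:.2f} Gbps")

# Parameter overhead for a hypothetical base model (assumption, not from the paper).
base_params = 1_000_000_000
extra_params = round(base_params * 0.033)
print(f"extra parameters at 3.3%: {extra_params:,}")
```

Because the compression factor (128x) is slightly larger than the bandwidth gap (125x), the compressed traffic on the slow link moves about as much information per second as uncompressed traffic on the reference link. That is consistent with the near-full throughput the paper reports.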

Why It Matters for Bittensor

Bittensor’s entire proposition rests on decentralized infrastructure producing intelligence that competes with centralized systems. If large-scale training across ordinary internet connections becomes architecturally tractable, the GPU clusters sitting idle in research labs, in small data centers, and with independent operators around the world stop being inference-only resources and become serious training infrastructure.

That changes the competitive landscape of AI in a fundamental way, and it changes who gets to participate in building the next generation of models.

Conclusion

The distance between a research paper and a production training run is real. Larger scales, broader architectures, and the conditions of a live decentralized network are the tests still ahead.

But for years the honest answer to whether you could train a serious AI model without a datacenter was: probably not. ResBM makes that answer considerably more interesting. The ceiling on what decentralized compute can accomplish just moved, and the team that moved it is building on Bittensor.
