Every AI model that generates text, processes an image, or executes a trade is drawing on hardware that belongs to one of a very small number of companies. AWS, Google Cloud, and Microsoft Azure control the overwhelming majority of accessible AI compute, and that concentration has real consequences for anyone trying to build outside those ecosystems.

Bittensor was designed to change that arrangement by turning compute into an open, incentivized market where anyone can supply GPU power and anyone can access it on demand. Compute subnets are where that vision becomes concrete.

The Size of the Compute Market

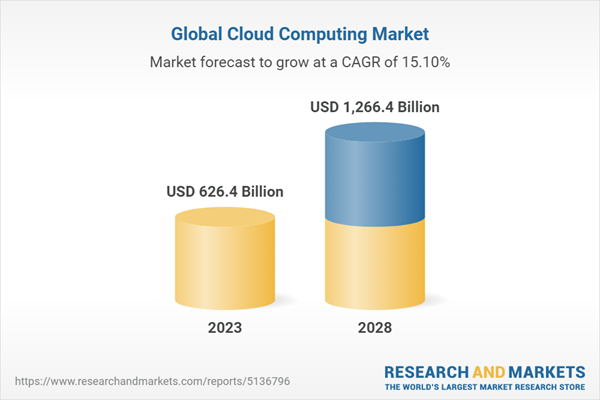

The global cloud computing market was valued at over $670 billion in 2024 and is projected to surpass $1.2 trillion by 2028, with AI infrastructure representing the fastest-growing segment within it.

GPU compute has become the defining bottleneck of the AI era, with demand from model training, inference, and agentic workloads growing faster than supply. NVIDIA’s data center revenue alone crossed $47 billion in fiscal year 2024, a figure that speaks to just how capital-intensive and strategically critical this layer has become.

For decentralized AI to be more than a theoretical proposition, it needs to solve the compute problem at its root. A decentralized network that still depends on centralized hardware is not decentralized in any meaningful sense, and compute subnets are Bittensor’s answer to that challenge.

Why Compute Subnets Are Essential to Bittensor

Compute subnets occupy a uniquely load-bearing position within the Bittensor architecture. Every subnet on the network, regardless of what it produces, requires compute to function. Validators need hardware to evaluate miner outputs, miners need GPUs to generate forecasts and inferences, and as the network scales, those requirements scale with it.

Without a decentralized compute layer capable of meeting that demand reliably, Bittensor’s growth becomes self-limiting, eventually bottlenecked by the same centralized providers it was built to replace.

There is also a deeper structural argument here: Bittensor’s credibility as a decentralized AI network depends on its ability to demonstrate that the intelligence it produces does not secretly trace back to centralized infrastructure. Compute subnets close that gap, ensuring the hardware layer is as distributed and permissionless as the network it supports. They are not one category among many within Bittensor; they are the substrate that makes every other category viable at scale.

What Compute Subnets Do

Compute subnets aggregate global GPU resources into a single permissionless marketplace governed by performance and incentives rather than contracts. The structure is straightforward:

a. Miners contribute GPU or CPU power and earn rewards proportional to the quality and reliability of what they provide.

b. Validators continuously benchmark miner performance, verify hardware claims, and enforce the quality standards that keep the marketplace trustworthy.

c. Users and developers rent compute on demand for AI training, inference, or any GPU-intensive workload they need to run.

This creates a live market where compute is priced through supply and demand rather than dictated by a provider with no competitive pressure to be fair.
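The miner and validator roles described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the scoring formula of any actual subnet: the `MinerReport` fields, the `score` formula (throughput multiplied by uptime), and the fixed `emission` amount are all hypothetical, and real Bittensor incentive mechanisms are considerably more involved.

```python
from dataclasses import dataclass


@dataclass
class MinerReport:
    """Hypothetical benchmark result a validator records for one miner."""
    miner_id: str
    throughput: float         # measured benchmark throughput, arbitrary units
    uptime: float             # fraction of checks the miner answered, 0.0 to 1.0
    hardware_verified: bool   # whether the miner's hardware claim was verified


def score(report: MinerReport) -> float:
    """Combine quality and reliability into one score (illustrative formula)."""
    if not report.hardware_verified:
        return 0.0  # an unverified hardware claim earns nothing in this sketch
    return report.throughput * report.uptime


def split_rewards(reports: list[MinerReport], emission: float) -> dict[str, float]:
    """Divide a fixed emission among miners in proportion to their scores."""
    scores = {r.miner_id: score(r) for r in reports}
    total = sum(scores.values())
    if total == 0:
        # No miner scored above zero, so nothing is paid out.
        return {miner_id: 0.0 for miner_id in scores}
    return {miner_id: emission * s / total for miner_id, s in scores.items()}


# Example data, invented for illustration only.
reports = [
    MinerReport("miner-a", throughput=100.0, uptime=1.0, hardware_verified=True),
    MinerReport("miner-b", throughput=100.0, uptime=0.5, hardware_verified=True),
    MinerReport("miner-c", throughput=400.0, uptime=1.0, hardware_verified=False),
]
rewards = split_rewards(reports, emission=90.0)
```

In this sketch, the miner with the fastest claimed hardware earns nothing because its claim failed verification, while the two verified miners split the emission according to how reliably they delivered. That is the sense in which the marketplace is governed by performance rather than contracts.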

The Subnets Building This Category

Several compute subnets have emerged as the clearest expressions of this model in practice:

a. Targon (Subnet 4) provides secure, developer-friendly GPU infrastructure built to feel familiar to anyone coming from traditional cloud platforms. It offers dedicated GPU rentals, serverless model deployment, and a Python SDK, all backed by NVIDIA Confidential Computing for hardware-level privacy.

Targon is deliberately designed to reduce the friction of switching from centralized providers, making decentralized compute accessible without requiring developers to rebuild their entire workflow to use it.

b. ComputeHorde (Subnet 12) functions as an internal scaling layer that other subnets across the Bittensor network depend on, rather than a consumer-facing product. It transforms untrusted miner GPUs into verified, reliable compute that validators can use to run heavy evaluation workloads without owning expensive hardware themselves.

c. Quantum Compute (Subnet 48) extends the decentralized compute model into entirely new hardware territory by opening access to real quantum computers through Bittensor’s incentive architecture.

Miners must own or operate physical quantum hardware, or hold a direct and documented partnership with an operator of such hardware, ensuring the resources offered are authentic rather than simulated. By applying the same permissionless market structure to quantum hardware, Subnet 48 positions Bittensor at the frontier of one of the scarcest and most expensive computing resources in the world.

d. Lium (Subnet 51) is a peer-to-peer GPU marketplace where anyone can supply or rent compute without KYC requirements or centralized intermediaries.

Lium’s actual rental revenues have begun outpacing its blockchain incentive subsidies, meaning organic customer demand now exceeds the token rewards propping it up, which is the clearest signal of genuine product-market fit in this category.

e. Chutes (Subnet 64) is a decentralized serverless platform that lets developers deploy and scale AI models in seconds without managing any underlying infrastructure. It supports large language models, image models, and audio models, and has established itself as a top provider on OpenRouter alongside major players like Anthropic.

Chutes is positioned as a direct Web3 alternative to OpenAI’s API, offering broader model diversity and a decentralized backend that no single company can restrict or shut down.

f. Byteleap (Subnet 128) connects distributed bare-metal GPU servers and high-performance clusters across multiple independent data centers into a single coordinated network. Compute jobs are submitted, assigned, and validated entirely on-chain, with no central authority making allocation decisions at any point in the process.

Supporting hardware from RTX 3090s all the way to B200s, Byteleap positions itself as an enterprise-grade decentralized public cloud for AI workloads that demand both performance and trustless coordination.

Conclusion

Compute is not a supporting character in the AI story. It is the story, and who controls it will shape more of the next decade than almost anything else being discussed right now. Compute subnets within Bittensor are building a live, functioning answer to that question, and the subnets covered here represent the leading edge of a category that is only going to grow more critical over time. The shift from renting compute from a monopoly to participating in an open market is subtle on the surface, but over time it changes everything about who gets to build, who gets to scale, and who gets to win.
