Bittensor has spent years generating real products across inference, compute, data, and other AI primitives, but the network has long lacked a clean surface where someone outside the protocol’s most active corners could actually find that output. TaonSquare is built to close that gap.

Launched by Yuma Group in beta, TaonSquare is a discovery layer for the full universe of AI tools running on Bittensor. It surfaces subnet capabilities, pricing, API details, and integration paths in one unified directory, and it serves two audiences at once: human users browsing the catalog and AI agents querying it programmatically.

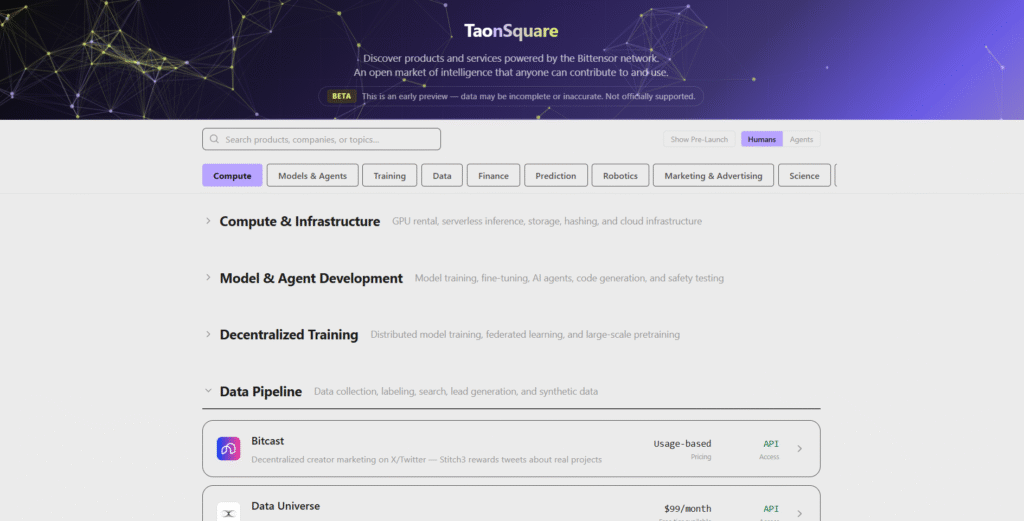

What’s in the Directory

The current Bittensor network spans 128 subnets, each producing its own commodity output. The catalog covers the full AI stack:

a. Compute and Infrastructure (GPU Rental, Serverless Inference, Storage, Hashing, and Cloud Infrastructure),

b. Model and Agent Development (Model Training, Fine-Tuning, AI Agent, Code Generation, and Safety Training),

c. Decentralized Training (Decentralized Model Training, Federated Learning, and Large-Scale Pretraining),

d. Data Pipeline (Data Collection, Labeling, Search, Lead Generation, and Synthetic Data),

e. Finance,

f. Predictive Systems (Forecasting, Sports and Financial Predictions, and Trading Signals),

g. Robotics (Robotics Control, Embedded AI, and Swarm Coordination),

h. Marketing and Advertising,

i. Science, and

j. AI and Machine Learning.

Each entry includes the practical information a buyer or developer needs to evaluate it: pricing model, API availability, current market cap denominated in $TAO, integration links, supporting documentation, and product status.

All of it is presented in a unified format rather than scattered across Discord channels, GitHub readmes, and the dozens of individual subnet websites that previously held the information in fragments.

For Human Users

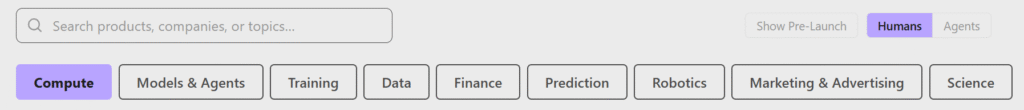

For humans, TaonSquare functions as a curated browsing surface designed for the kind of exploration that previously required hours of digging through the Bittensor ecosystem.

A developer adding LLM inference to a stack can filter by category, compare API options across subnets, and read integration details without leaving the page.

A buyer evaluating compute providers can sort the available options by pricing and market cap to find the combination that fits a given cost constraint.

The browsing flow supports filtering by category, tags, pricing model, API availability, and free-text search, with additional filters for market cap range and product status. Any two or more subnets can also be placed side by side for direct comparison.
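To make the filter-and-compare flow concrete, here is a minimal Python sketch of the same operations run over a local list of entries. Everything in it is an assumption for illustration: the `SubnetEntry` fields mirror the attributes the article lists but are not TaonSquare's actual schema, and the sample subnets and numbers are made up.

```python
from dataclasses import dataclass, field


@dataclass
class SubnetEntry:
    # Hypothetical record shape; field names are illustrative only.
    name: str
    category: str
    pricing_model: str      # e.g. "free", "pay-per-use", "subscription"
    has_api: bool
    market_cap_tao: float   # market cap denominated in TAO
    status: str             # e.g. "live", "beta"
    tags: list[str] = field(default_factory=list)


def filter_entries(entries, *, category=None, pricing_model=None, has_api=None,
                   status=None, min_cap=None, max_cap=None, text=None):
    """Return the entries that match every filter that is not None."""
    results = []
    for e in entries:
        if category is not None and e.category != category:
            continue
        if pricing_model is not None and e.pricing_model != pricing_model:
            continue
        if has_api is not None and e.has_api != has_api:
            continue
        if status is not None and e.status != status:
            continue
        if min_cap is not None and e.market_cap_tao < min_cap:
            continue
        if max_cap is not None and e.market_cap_tao > max_cap:
            continue
        if text is not None:
            # Free-text search over name, category, and tags (case-insensitive).
            haystack = " ".join([e.name, e.category, *e.tags]).lower()
            if text.lower() not in haystack:
                continue
        results.append(e)
    return results


def compare(entries, names):
    """Side-by-side view: one row per attribute, one column per subnet."""
    chosen = [e for e in entries if e.name in names]
    attrs = ["category", "pricing_model", "has_api", "market_cap_tao", "status"]
    return {a: {e.name: getattr(e, a) for e in chosen} for a in attrs}


# Made-up sample data, not real subnets or real figures.
CATALOG = [
    SubnetEntry("alpha-infer", "Serverless Inference", "pay-per-use", True, 1200.0, "live", ["llm"]),
    SubnetEntry("beta-gpu", "GPU Rental", "subscription", True, 800.0, "beta", ["compute"]),
    SubnetEntry("gamma-infer", "Serverless Inference", "free", False, 300.0, "live", ["llm", "chat"]),
]

matches = filter_entries(CATALOG, category="Serverless Inference", has_api=True)
by_cap = sorted(CATALOG, key=lambda e: e.market_cap_tao, reverse=True)
table = compare(CATALOG, {"alpha-infer", "gamma-infer"})
```

Filters combine with AND semantics here, which is one reasonable reading of how stacked filters behave in a directory; the site itself may differ.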

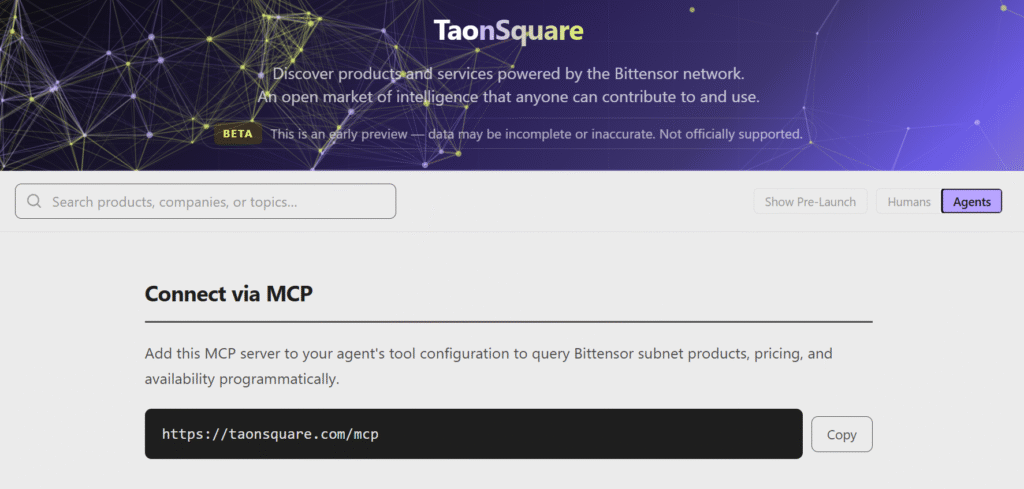

For Agents Through MCP

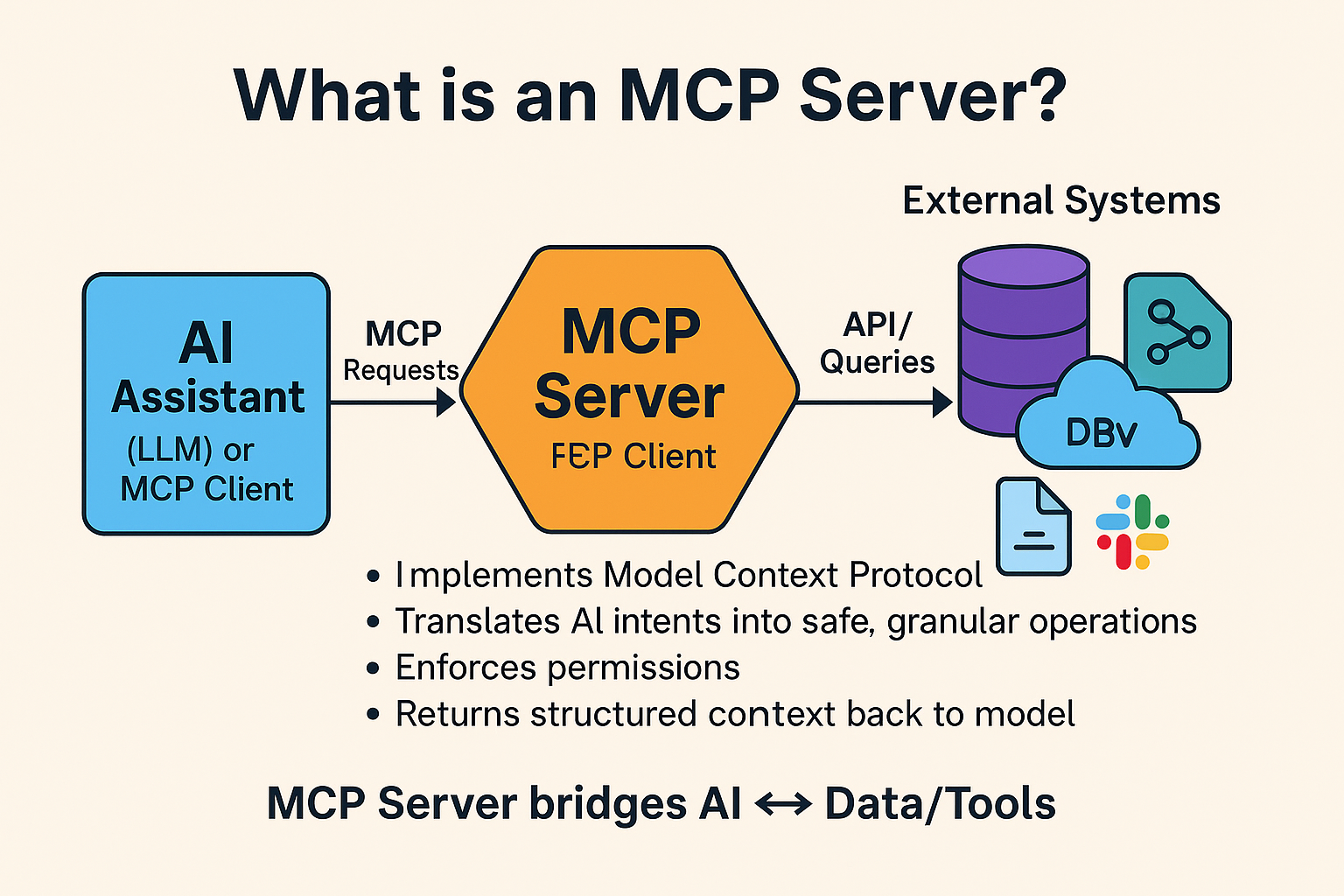

The second audience is one that did not exist when most of the Bittensor ecosystem was originally being designed. AI agents now need to discover and consume external services programmatically, on behalf of their users, without human intervention sitting in the middle.

TaonSquare handles this through MCP (the Model Context Protocol), which is widely adopted across agent frameworks. By adding the TaonSquare MCP server to an agent’s tool configuration, that agent gains structured access to the full Bittensor catalog.

Through the MCP interface, an agent can do all of the following:

a. Browse the full product catalog and pull an overview of every subnet,

b. Retrieve detailed information for any specific subnet, including pricing and access points,

c. Search by category, tags, pricing model, API availability, or free-text query,

d. Filter by market cap range or product status,

e. Compare two or more subnets side by side, and

f. List all product categories with associated counts.

The practical effect is that an agent looking for the cheapest LLM inference endpoint, or the highest-rated image generation subnet, can resolve that question through a single MCP call rather than scraping disparate sources. The integration positions Bittensor subnets to be consumed directly by the next generation of agent frameworks, rather than requiring each framework to build its own custom integration layer for the network.
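For readers curious what such a call looks like on the wire: MCP messages are JSON-RPC 2.0, a client discovers a server's tools with the `tools/list` method, and it invokes one with `tools/call`. The Python sketch below only builds those two request payloads. The tool name `search_products` and its argument keys are hypothetical, since the article does not name TaonSquare's actual tools; a real agent would read the names and input schemas from the `tools/list` response.

```python
import json
from itertools import count

# Monotonic request ids, as JSON-RPC requests need an id to match responses.
_ids = count(1)


def tools_list():
    """Build an MCP `tools/list` request to discover the server's tools."""
    return {"jsonrpc": "2.0", "id": next(_ids), "method": "tools/list"}


def tools_call(tool_name, arguments):
    """Build an MCP `tools/call` request for one tool invocation."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


discover = tools_list()

# Hypothetical tool name and argument keys, for illustration only.
request = tools_call(
    "search_products",
    {"category": "Serverless Inference", "has_api": True},
)

# Serialized form that a transport (stdio or HTTP) would carry.
payload = json.dumps(request)
```

In practice an agent framework's MCP client handles this framing, so the sketch is mainly useful for seeing how little an agent needs to specify once the tool schema is known.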

Conclusion

TaonSquare is not a flashy launch, but it is the kind of infrastructure that determines whether the products running underneath it actually get used by anyone. Discovery has been the longest-running gap in Bittensor’s adoption story, and several of the network’s strongest subnets have been routinely overlooked by potential users who simply could not see them in the noise.

By giving humans a navigable browsing surface and giving agents a programmatic interface to the same underlying data, TaonSquare closes both halves of that gap at once. The team acknowledges that the beta is early and that some of the data may be incomplete, but none of that changes the significance of what has been shipped here.

The infrastructure layer for agentic AI now has a storefront, and the application layer above it can finally start being built against something stable rather than something improvised on the fly.
