AI assistants have a memory problem: each new conversation starts fresh, because preferences, context, tasks, and details all reset the moment a session closes. For light chat use, the limitation is tolerable, but for the longer agentic workflows now defining serious AI work, it becomes a structural failure.

Ditto exists to fix that. Running as Subnet 118 on Bittensor, the project operates as a long-term memory substrate for AI agents, converting scattered chats, files, and tool history into durable context that agents can recall on demand.

Built around that memory layer is an open-source agentic operating system designed to take direct aim at closed platforms like Claude Cowork, Perplexity Computer, Slack, and Google Drive.

The Project at a Glance

In a single line, Ditto is an open-source agentic OS (operating system) with persistent memory underneath, powered by a Bittensor subnet.

It breaks into three layers:

a. A workspace where organizations coordinate people, agents, projects, files, and conversations in one place.

b. A memory graph that gives agents long-term recall across sessions and tools.

c. A harness that wraps the underlying model and pushes its behavior closer to that of a top closed system.

Peyton Spencer, the CEO of Ditto, calls the harness “the car the LLM drives,” describing it as what lets an open model like DeepSeek or Kimi perform with the polish of Claude, at a fraction of the cost.

The Bittensor Mechanics

Ditto runs as Subnet 118 using the standard subnet architecture where:

a. Miners generate memory-related outputs such as retrieval, inference across long histories, and surfacing the right fragments at the right moment.

b. Validators score those outputs against accuracy, coherence, and how well they match the subnet’s evaluation criteria.

c. $TAO emissions flow according to validator scoring and VTrust metrics.
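The scoring-to-emissions flow above can be illustrated with a deliberately simplified sketch. It is not Bittensor's actual consensus mechanism and does not model VTrust; it only shows the general idea of validator scores, weighted by stake, being normalized into per-miner shares of an emission pool. The function name `split_emissions`, the stake weighting, and all numbers are assumptions made for illustration.

```python
def split_emissions(scores, stakes, pool):
    """Split `pool` among miners in proportion to stake-weighted scores.

    scores: {validator: {miner: score in [0, 1]}}  (hypothetical shape)
    stakes: {validator: stake}                     (hypothetical shape)
    """
    total_stake = sum(stakes.values())
    if total_stake <= 0:
        raise ValueError("total validator stake must be positive")

    # Collect every miner that at least one validator scored.
    miners = sorted({m for per_miner in scores.values() for m in per_miner})

    # Stake-weighted average score per miner; unscored miners count as 0.
    weighted = {
        m: sum(stakes[v] * scores[v].get(m, 0.0) for v in scores) / total_stake
        for m in miners
    }

    total = sum(weighted.values())
    if total == 0:
        return {m: 0.0 for m in miners}

    # Normalize so the shares add up to the whole pool.
    return {m: pool * w / total for m, w in weighted.items()}


example = split_emissions(
    scores={"v1": {"a": 0.8, "b": 0.2}, "v2": {"a": 0.6, "b": 0.4}},
    stakes={"v1": 3, "v2": 1},
    pool=100.0,
)
print(example)
```

In this toy run, miner `a` receives roughly three quarters of the pool because the higher-staked validator scored it well. The real network applies additional consensus rules on top of raw scores.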

What miners actually ship goes beyond per-query answers: they contribute code (open-source repositories) that refines the harness itself. The optimization targets are retention quality, inference latency, error recovery, behavioral consistency, and prompt processing.

Every winning improvement gets pulled into the live product.

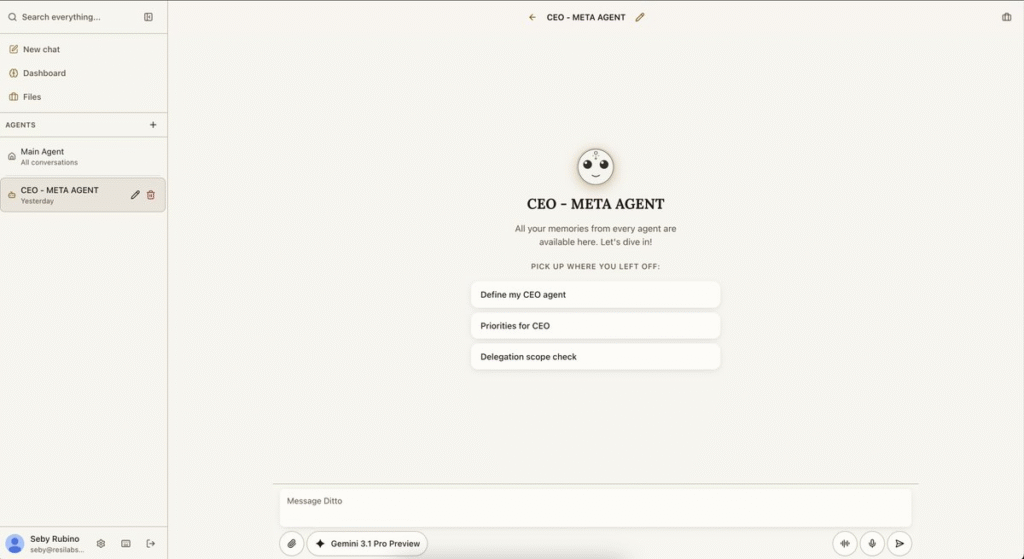

What Users See

The workspace consolidates the tools usually sprawled across half a dozen apps into one environment (people, agents, projects, issues, conversations, files, and tasks in a unified view). An orchestration layer beneath the surface routes work between humans and agents, manages projects, and provisions new resources autonomously.

For end users, Ditto behaves like an AI assistant that remembers. Past conversations get organized into named Subjects automatically, with the connections between ideas surfacing visually as they accumulate. Context first mentioned months ago can be pulled back on demand.

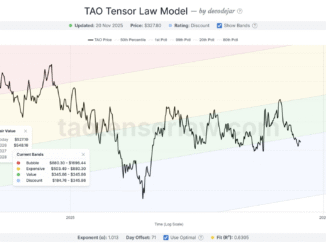

The Benchmark

Ditto’s first published evaluation runs on LongMemEval. Using Gemini 3.1 Pro, the reported results are:

a. Composite: 77.8

b. QA accuracy: 71.2% across 500 questions

c. Session recall: 87.6%
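A quick arithmetic check helps in reading these figures. The sketch below uses only the numbers reported above; the variable names are illustrative. It converts the QA accuracy into a question count and compares the composite with a plain average of the two other metrics. The article does not say how the composite is computed, so the comparison only shows what the composite is not.

```python
QUESTIONS = 500
QA_ACCURACY_PCT = 71.2      # reported QA accuracy
SESSION_RECALL_PCT = 87.6   # reported session recall
COMPOSITE = 77.8            # reported composite score

# 71.2% of 500 questions answered correctly.
correct_answers = round(QUESTIONS * QA_ACCURACY_PCT / 100)

# Plain average of the two reported metrics, for comparison only.
simple_mean = round((QA_ACCURACY_PCT + SESSION_RECALL_PCT) / 2, 1)

print(correct_answers)           # 356
print(simple_mean)               # 79.4
print(simple_mean == COMPOSITE)  # False
```

So 71.2% corresponds to 356 of the 500 questions, and the composite of 77.8 is not a simple mean of QA accuracy and session recall. Its exact weighting is not given in the source.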

The number that matters most sits below those headlines. On the seed-only retrieval path, where the system surfaces the right memories before the model goes hunting, accuracy reaches 90% at 1.8 seconds median latency. Fall back to multi-hop tool calls and accuracy collapses to 61%, with latency stretching into minutes.
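The two retrieval paths described above can be sketched as a seed-first lookup with a fallback. This is a toy illustration, not Ditto's implementation: the `Memory` class, the word-overlap scoring, the `min_score` threshold, and the `multi_hop_search` callback are all hypothetical stand-ins for whatever the real system uses.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    text: str


def overlap_score(query, memory):
    """Fraction of query words that also appear in the memory (toy relevance)."""
    q = set(query.lower().split())
    m = set(memory.text.lower().split())
    return len(q & m) / len(q) if q else 0.0


def retrieve(query, memories, multi_hop_search, k=3, min_score=0.5):
    """Try the cheap seed path first; fall back to multi-hop search if it fails."""
    ranked = sorted(memories, key=lambda m: overlap_score(query, m), reverse=True)
    seeds = [m for m in ranked[:k] if overlap_score(query, m) >= min_score]
    if seeds:
        # Seed path: relevant memories are handed to the model up front.
        return "seed", seeds
    # Fallback path: slower, tool-driven search (hypothetical callback).
    return "multi_hop", multi_hop_search(query)


store = [
    Memory("user prefers dark mode in the editor"),
    Memory("project deadline is friday"),
]
print(retrieve("project deadline", store, lambda q: []))
print(retrieve("favorite color", store, lambda q: []))
```

The first query is served from the seed path; the second finds no sufficiently relevant seed and drops to the fallback. The design point is the one the benchmark numbers make: the more queries the seed path can serve, the less often the system pays the accuracy and latency cost of the fallback.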

The weakest dimensions are temporal reasoning (57.1%) and multi-session recall (56.4%), and that is precisely where miners are most incentivized to push.

The team has been explicit that LongMemEval is a warm-up, and once it saturates, the subnet shifts to generating its own next-generation memory benchmarks, with current research drawing on the MemPalace approach.

Positioning Within Bittensor

Most Bittensor subnets (like Data Universe) target other problems, such as data collection, content-model training, and compute routing. Ditto’s niche is different.

Persistent context and personal memory for an individual user’s agents is a surface most of the network has not entered, which places Ditto among the first mainnet subnets dedicated to it.

The memory graph it produces is designed to layer above the data-side subnets, not compete with them.

Conclusion

Among Bittensor subnets, Ditto is unusually grounded. The framing is ambitious, but everything underneath it is concrete: a working product, a published benchmark, a public baseline, and code as the unit of miner output rather than vibes. The roadmap moves through measurable goals: improve memory, improve speed, then build the next benchmark when the current one runs out.

The deeper wager is that the long-term value of open-source AI accumulates in the harness, not the model, and that a workspace built on persistent memory with continuously improving infrastructure beneath it can credibly compete with the closed-platform incumbents currently running the category. Subnet 118 is the engine keeping the harness sharp. The workspace above it is where the work shows up.
