We are at the beginning of an entirely new wave of company building: agent-native startups. These companies are designed from day one around AI agents doing the work human teams used to do, and they are starting to look the way internet companies looked in the late nineties.

The opportunity is enormous, the velocity is real, and most of the infrastructure these companies will eventually depend on does not exist yet.

One piece that almost every serious agent-native company will eventually need is already being built by Trishool (Bittensor Subnet 23). It is called Halo.

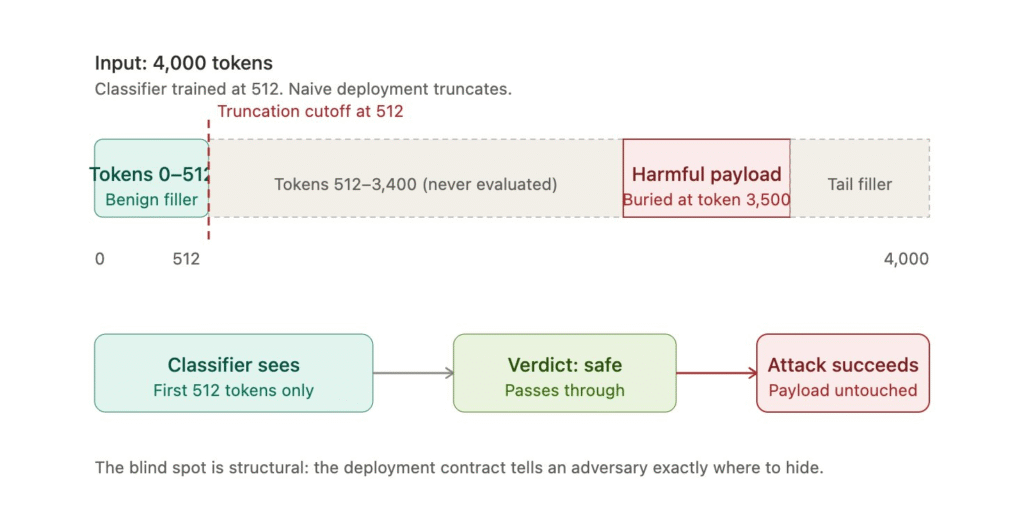

The Problem Most Safety Classifiers Quietly Have

Many safety classifiers deployed in production today share the same hidden weakness, and it has nothing to do with how capable the model is. It has to do with how the model is deployed:

a. Trained on a Fixed Window: Most safety classifiers are trained on inputs of around 512 tokens,

b. Real Inputs are Longer: User-generated content in production often runs into the thousands of tokens,

c. Truncation Kicks in by Default: Standard practice is to cut the input down to the first 512 tokens before running the classifier, and

d. The Rest is Never Evaluated: Whatever sits beyond the cutoff is invisible to the model, regardless of what it contains.

The verdict comes back based only on the slice the model could see, and everything else slides through unchecked.
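To make the failure concrete, here is a minimal sketch of a naive truncating deployment. It is illustrative only: the whitespace `tokenize` function stands in for a real subword tokenizer, and `toy_classifier` is a hypothetical placeholder that flags a marker word instead of running a real safety model. The point is the slicing step, which silently discards everything past token 512.

```python
from typing import Callable, List

MAX_TOKENS = 512  # assumed training window, per the description above


def tokenize(text: str) -> List[str]:
    # Hypothetical stand-in: whitespace split instead of a real subword tokenizer.
    return text.split()


def toy_classifier(tokens: List[str]) -> str:
    # Hypothetical placeholder for a real safety model: flags a marker word.
    return "unsafe" if "HARMFUL" in tokens else "safe"


def classify_truncated(
    text: str, classify: Callable[[List[str]], str] = toy_classifier
) -> str:
    """Naive deployment: keep only the first MAX_TOKENS tokens, drop the rest."""
    tokens = tokenize(text)
    return classify(tokens[:MAX_TOKENS])


if __name__ == "__main__":
    short_input = "HARMFUL " + "filler " * 100
    long_input = "filler " * 600 + "HARMFUL"
    print(classify_truncated(short_input))  # unsafe: marker is inside the window
    print(classify_truncated(long_input))   # safe: marker sits at token 600, never seen
```

The classifier itself behaves correctly in both cases; the wrong answer comes entirely from the `[:MAX_TOKENS]` slice.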

Why This Becomes a Real Attack

The attack requires no model expertise; it only requires knowing that truncation is happening, which is a safe assumption for most naively deployed classifiers.

The playbook is straightforward:

a. Pad the Start with Benign Content: Open with a cooking paragraph, a weather report, or anything else that reads as safe,

b. Bury the Harmful Content Later: Push it past the truncation cutoff,

c. Submit Normally: The classifier evaluates only the safe-looking opening, and

d. Get a Clean Verdict: The harmful content was never seen.

The vulnerability is in the deployment contract itself, which is exactly what makes it dangerous and exactly why it needs an infrastructure-level fix rather than a model-level one.
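The playbook amounts to simple arithmetic, which the sketch below illustrates. It assumes the attacker knows (or guesses) a 512-token cutoff and that the deployment tokenizes on whitespace; both `tokenize` and `truncating_classifier` are hypothetical stand-ins, and the marker word `HARMFUL` replaces any real content. `build_padded_input` (also a hypothetical name) repeats a benign sentence just enough times to push the payload past the cutoff.

```python
import math
from typing import List

CUTOFF = 512  # truncation limit the attacker assumes the deployment uses


def tokenize(text: str) -> List[str]:
    # Hypothetical stand-in for the deployed tokenizer: a plain whitespace split.
    return text.split()


def truncating_classifier(text: str) -> str:
    # Hypothetical naive deployment: a marker-word check on the first CUTOFF tokens only.
    return "unsafe" if "HARMFUL" in tokenize(text)[:CUTOFF] else "safe"


def build_padded_input(benign: str, payload: str, cutoff: int = CUTOFF) -> str:
    """Steps a and b: repeat benign text until the payload starts at or past the cutoff."""
    benign_len = len(tokenize(benign))
    copies = math.ceil(cutoff / benign_len)  # guarantees copies * benign_len >= cutoff
    return " ".join([benign] * copies + [payload])


if __name__ == "__main__":
    payload = "HARMFUL request goes here"
    attack = build_padded_input("Whisk the eggs and fold in the flour.", payload)
    print(truncating_classifier(payload))  # unsafe: payload alone is caught
    print(truncating_classifier(attack))   # safe: payload starts at token 512
```

Nothing in the padding needs to be clever; it only has to consume the visible window.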

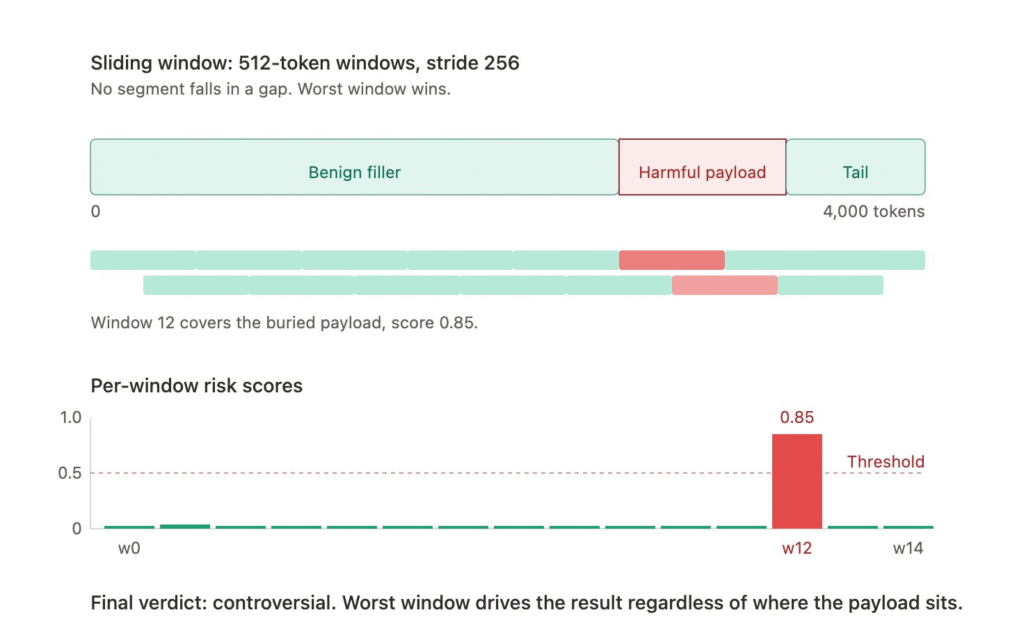

How Halo Closes the Gap

Halo replaces truncation with a sliding window approach that evaluates the entire input:

a. Full Tokenization: The complete input gets tokenized rather than cut short,

b. Overlapping 512-Token Windows: Each window steps forward by 256 tokens, creating 50 percent overlap so nothing falls into a gap,

c. Independent Classification per Window: Every window is scored on its own,

d. Risk Scoring: Each window receives a combined probability of unsafe and controversial content, and

e. Worst-Case Verdict: The final classification reflects the highest-risk window anywhere in the input.
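Steps a and b can be sketched as a function that turns a token count into overlapping window spans. This is a minimal illustration, not Halo's code: `window_spans` and `is_fully_covered` are hypothetical names, and the handling of the tail (letting the final window be shorter than 512 tokens so it ends exactly at the end of the input) is an assumption, since the text does not say how the last window is formed.

```python
from typing import List, Tuple

WINDOW = 512  # window size from the description above
STRIDE = 256  # step size, giving 50 percent overlap


def window_spans(
    num_tokens: int, window: int = WINDOW, stride: int = STRIDE
) -> List[Tuple[int, int]]:
    """Return (start, end) token spans for overlapping windows covering the whole input.

    Assumption: the last window may be shorter than `window` so the tail is included.
    """
    if num_tokens <= window:
        return [(0, num_tokens)]
    spans = []
    start = 0
    while True:
        end = min(start + window, num_tokens)
        spans.append((start, end))
        if end == num_tokens:
            break
        start += stride
    return spans


def is_fully_covered(num_tokens: int, spans: List[Tuple[int, int]]) -> bool:
    """Check that every token index falls inside at least one window."""
    covered = [False] * num_tokens
    for start, end in spans:
        for i in range(start, end):
            covered[i] = True
    return all(covered)


if __name__ == "__main__":
    spans = window_spans(1000)
    print(spans)  # [(0, 512), (256, 768), (512, 1000)]
    print(is_fully_covered(1000, spans))  # True
```

Because the stride is half the window, any passage of up to 256 consecutive tokens lands entirely inside at least one window, so content cannot hide by straddling a window boundary.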

The model powering it is Qwen3.5-0.8B, small enough to be fast and easy to deploy while still strong enough for the task. The windowing happens entirely in the inference wrapper, which means the model itself does not need to change for the safety guarantee to apply.
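The wrapper idea can be sketched by treating the classifier as an opaque callable that maps a token window to a pair of probabilities, `(p_unsafe, p_controversial)`. Everything here is an assumption for illustration: `ScoreFn`, `classify_full`, and `toy_score` are hypothetical names, the 0.5 `threshold` and the rule for choosing between "unsafe" and "controversial" are invented, and `toy_score` replaces real model output. What it shows is steps c through e: score each window independently, combine the two probabilities into one risk value, and report the worst window.

```python
from typing import Callable, Dict, List, Sequence, Tuple, Union

WINDOW, STRIDE = 512, 256

# Hypothetical interface: any existing classifier that maps a token window to
# (p_unsafe, p_controversial). The wrapper never modifies the model.
ScoreFn = Callable[[Sequence[str]], Tuple[float, float]]


def window_spans(n: int) -> List[Tuple[int, int]]:
    """Overlapping (start, end) spans; the last one may be shorter and ends at n."""
    spans, start = [], 0
    while True:
        end = min(start + WINDOW, n)
        spans.append((start, end))
        if end == n:
            return spans
        start += STRIDE


def classify_full(
    tokens: Sequence[str], score: ScoreFn, threshold: float = 0.5
) -> Dict[str, Union[str, int, float]]:
    """Score every window and report the worst one (threshold is hypothetical)."""
    worst_idx, worst_risk, worst_probs = -1, -1.0, (0.0, 0.0)
    for idx, (start, end) in enumerate(window_spans(len(tokens))):
        p_unsafe, p_controversial = score(tokens[start:end])
        risk = p_unsafe + p_controversial  # combined risk for this window
        if risk > worst_risk:
            worst_idx, worst_risk, worst_probs = idx, risk, (p_unsafe, p_controversial)
    if worst_risk < threshold:
        label = "safe"
    else:
        label = "unsafe" if worst_probs[0] >= worst_probs[1] else "controversial"
    return {"label": label, "window": worst_idx, "risk": worst_risk}


def toy_score(window: Sequence[str]) -> Tuple[float, float]:
    # Hypothetical stand-in for real model probabilities.
    return (0.0, 0.9) if "CONTROVERSIAL" in window else (0.01, 0.02)


if __name__ == "__main__":
    tokens = ["filler"] * 3000 + ["CONTROVERSIAL"] + ["filler"] * 500
    print(toy_score(tokens[:512]))  # truncation view: low-risk scores only
    print(classify_full(tokens, toy_score))
    # {'label': 'controversial', 'window': 10, 'risk': 0.9}
```

Swapping in a different model only means passing a different `score` callable; the windowing and worst-case aggregation stay the same.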

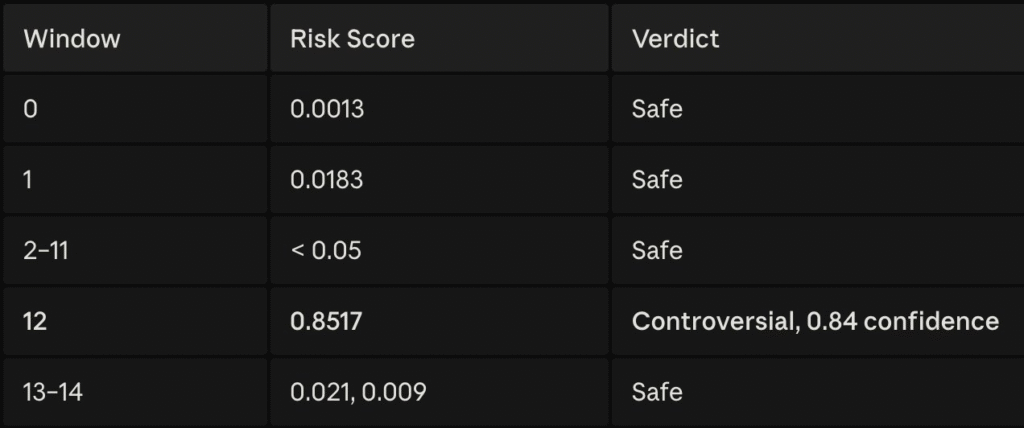

In a recent live test on a long input that resolved into 15 overlapping windows, naive truncation returned a clean verdict because the opening 512 tokens looked safe. Halo flagged the input as controversial because window 12, deep inside the content, contained material that truncation never saw.
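The exact length of the test input is not stated, but the window count constrains it. The sketch below assumes 512-token windows that start every 256 tokens, with the final window allowed to be shorter so it ends at the end of the input (an assumption about tail handling). Under that rule it works out which input lengths yield exactly 15 windows and where "window 12" would sit. Since it is not stated whether windows are counted from 0 or from 1, both conventions are shown.

```python
WINDOW, STRIDE = 512, 256  # window size and step from the description above


def num_windows(num_tokens: int) -> int:
    """Number of windows needed to cover num_tokens tokens.

    Assumption: windows start every STRIDE tokens and the last one may be
    shorter, ending exactly at the end of the input.
    """
    if num_tokens <= WINDOW:
        return 1
    # Integer ceiling of (num_tokens - WINDOW) / STRIDE, plus the first window.
    return (num_tokens - WINDOW + STRIDE - 1) // STRIDE + 1


# Which input lengths resolve into exactly 15 windows?
lengths = [n for n in range(1, 10_000) if num_windows(n) == 15]
print(min(lengths), max(lengths))  # 3841 4096

# Token span of "window 12" under either indexing convention.
for convention, index in (("1-based", 11), ("0-based", 12)):
    start = index * STRIDE
    print(convention, start, start + WINDOW)
# 1-based 2816 3328
# 0-based 3072 3584
```

Under either convention, window 12 begins thousands of tokens past the 512-token cutoff where naive truncation stops reading.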

Why This Matters for Every Agent-Native Startup

The wave of agent-native companies being built right now will eventually need safety infrastructure that does not collapse the moment someone tries to bypass it.

The reasons Halo will end up underneath a lot of these companies are concrete:

a. Long Inputs are Unavoidable: Agent workloads regularly process long documents, multi-step user instructions, and chained context that easily exceeds 512 tokens,

b. Building a Classifier from Scratch is not Realistic: Most teams will reach for off-the-shelf safety tooling rather than train their own,

c. Truncation-Based Tools Fail Silently: Teams using them often have no idea their classifier has a structural blind spot until something goes wrong, and

d. Halo is Open-Source by Design: Any team building on decentralized AI can adopt, audit, and extend it without licensing fees or proprietary lock-in.

Trishool’s stated goal is to build shared infrastructure the entire agent ecosystem can depend on, in the same way the early internet eventually settled on common protocols rather than proprietary stacks.

Conclusion

The agent-native era is going to look a lot like the early internet, and the companies building shared infrastructure now will end up underneath an enormous amount of what gets built on top.

Halo is one of those pieces. It addresses a structural failure most teams do not know they have, it solves it in a way that is open, auditable, and deployable today, and it is being developed inside one of the most credible decentralized AI ecosystems currently operating.

Every agent-native startup is eventually going to need a real safety layer. Trishool (Bittensor Subnet 23) is quietly building one of the few that will actually hold up.
