The AI chat market spent three years looking like a one-horse race. ChatGPT set the standard, the rest of the field played catch-up, and most users never thought to ask whether the default option was actually the best one. That assumption no longer holds.

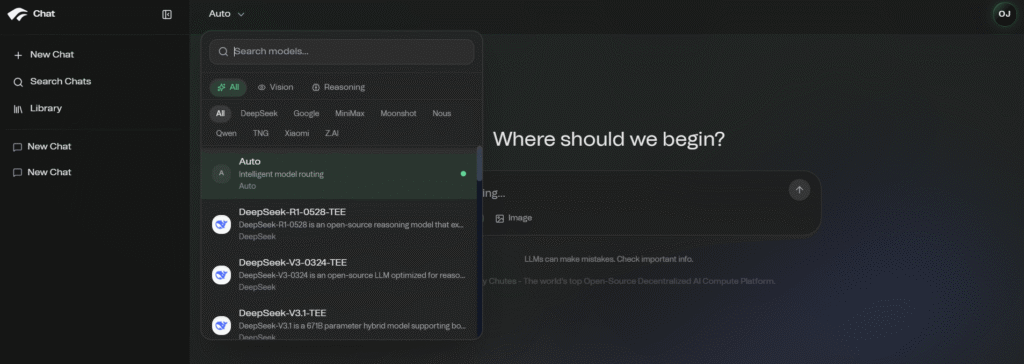

Chutes Chat, the consumer-facing front end of Bittensor Subnet 64, has quietly built something the centralized incumbents structurally cannot match: 60+ open-source frontier models inside one conversation, hardware-enforced privacy as a baseline, automatic model routing, and pricing that makes ChatGPT Plus look expensive.

One Conversation, 60+ Models, Half the Price

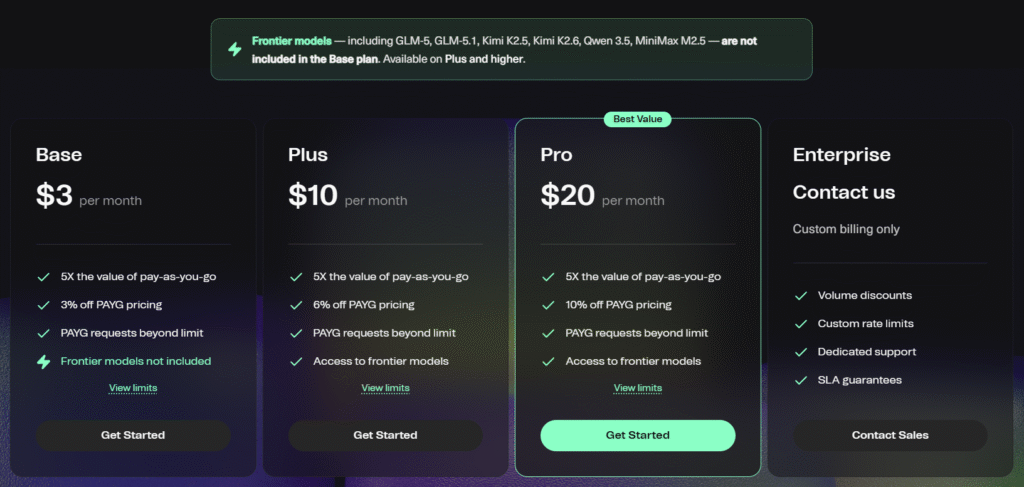

ChatGPT Plus costs $20 a month and gives you GPT-5.5. Chutes Plus costs $10 a month and gives you 60+ frontier models inside the same conversation, including several that have outperformed GPT-5.5 on benchmarks:

| Tier | Chutes Chat | ChatGPT |
| --- | --- | --- |
| Free | Available with usage limits | Limited access |
| Entry | $3 (Base) | $8 (Go) |
| Mid | $10 (Plus) – frontier models included | $20 (Plus) |
| Pro | $20 (Pro) – 10% off PAYG | $100+ (Pro) |
| Enterprise | Custom billing, volume discounts, SLAs | Custom enterprise pricing |

The Right Model for the Right Task

ChatGPT locks every workflow into one model. Chutes lets users move between specialized frontier models inside the same chat:

a. Mimo V2 Flash for general reasoning,

b. MiniMax M2.5 for code generation,

c. Kimi K2.6 for image input and 262K context,

d. Qwen3.5-397B for frontier reasoning,

e. GLM-5 for multilingual depth, and

f. DeepSeek V3.1 for everyday tasks.

Several of these models have outperformed GPT-5.5, Claude, and Grok on benchmarks. Kimi K2.6 has emerged as one of the strongest open-source models for long-context reasoning. Chutes lets you pick the best model for the task. ChatGPT does not give you that choice.

Hardware-Enforced Privacy at a Price the Incumbents Cannot Match

ChatGPT gives you a privacy policy, but Chutes gives you hardware enforcement. More than 15 frontier models on Chutes run inside Trusted Execution Environments (TEEs).

TEEs are hardware-locked enclaves where prompts go in, completions come out, and nothing in between is readable by anyone, not even Chutes itself.

The pricing makes the gap embarrassing for the incumbents:

a. DeepSeek V3.1 (TEE) at $0.20 / $0.80

b. Kimi K2.5 (TEE, text + image) at $0.45 / $2.20

c. DeepSeek R1-0528 (TEE) at $0.45 / $2.15

d. Qwen3.5-397B (TEE) at $0.55 / $3.50

DeepSeek V3.1 with TEE on Chutes is cheaper than Claude Haiku 4.5 with no privacy guarantees at all. ChatGPT cannot match this. Hardware enforcement requires architectural choices centralized providers have not made and cannot quickly retrofit.
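To make the price pairs above concrete, here is a rough cost sketch. The article does not state units, so this assumes each pair is USD per million input tokens and USD per million output tokens. The workload size and the `workload_cost` helper are invented for illustration.

```python
# ASSUMPTION: each pair is (USD per 1M input tokens, USD per 1M output tokens).
# The article lists the figures without units.
PRICES = {
    "DeepSeek V3.1 (TEE)": (0.20, 0.80),
    "Kimi K2.5 (TEE)": (0.45, 2.20),
    "DeepSeek R1-0528 (TEE)": (0.45, 2.15),
    "Qwen3.5-397B (TEE)": (0.55, 3.50),
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of a workload on one model."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical monthly workload: 2M input tokens, 500K output tokens.
for name in PRICES:
    print(f"{name}: ${workload_cost(name, 2_000_000, 500_000):.2f}")
```

Under these assumptions, output tokens dominate the bill for the larger models, so the output price matters most for generation-heavy workloads.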

Automatic Model Routing: A Capability Built for Power Users

ChatGPT routes everything to one model. Chutes ships intelligent multi-model routing as a native feature, fully OpenAI-compatible.

Define a pool of up to 20 models, and Chutes routes requests across them automatically through a single alias, using one of three strategies:

a. Sequential failover, trying models in priority order if the first is busy,

b. Latency-optimized, selecting whichever model has the lowest time to first token, and

c. Throughput-optimized, selecting whichever model produces the highest tokens per second.
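The three strategies above can be sketched in a few lines of Python. This is a minimal illustration, not Chutes' implementation: the `ModelStats` class, the function names, and every metric value are hypothetical, and skipping unavailable models in the latency and throughput strategies is an assumption.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ModelStats:
    """Hypothetical snapshot of live metrics for one model in the pool."""
    name: str
    available: bool        # False when the model is busy or degraded
    ttft_ms: float         # time to first token, in milliseconds
    tokens_per_sec: float  # generation throughput


def _available(pool: List[ModelStats]) -> List[ModelStats]:
    """Keep only available models, preserving priority order (assumed behaviour)."""
    candidates = [m for m in pool if m.available]
    if not candidates:
        raise RuntimeError("no model in the pool is available")
    return candidates


def sequential_failover(pool: List[ModelStats]) -> ModelStats:
    """Pick the first available model in priority order."""
    return _available(pool)[0]


def latency_optimized(pool: List[ModelStats]) -> ModelStats:
    """Pick the available model with the lowest time to first token."""
    return min(_available(pool), key=lambda m: m.ttft_ms)


def throughput_optimized(pool: List[ModelStats]) -> ModelStats:
    """Pick the available model with the highest tokens per second."""
    return max(_available(pool), key=lambda m: m.tokens_per_sec)


# Pool listed in priority order; all numbers are invented for the example.
pool = [
    ModelStats("MiniMax M2.5", available=False, ttft_ms=180.0, tokens_per_sec=95.0),
    ModelStats("Kimi K2.6", available=True, ttft_ms=420.0, tokens_per_sec=140.0),
    ModelStats("DeepSeek V3.1", available=True, ttft_ms=250.0, tokens_per_sec=80.0),
]

for strategy in (sequential_failover, latency_optimized, throughput_optimized):
    print(f"{strategy.__name__}: {strategy(pool).name}")
```

With these invented numbers, failover and throughput routing both land on Kimi K2.6, while latency routing picks DeepSeek V3.1, which shows how the same pool can resolve differently depending on the strategy.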

The only change required to integrate is the model field. Existing OpenAI SDK (Software Development Kit) code works without modification. If a model is at capacity or degraded, your users never see it.

The system routes around the issue using live performance data. ChatGPT, by structural design, cannot offer this.
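The "only the model field changes" claim can be illustrated by comparing two request bodies in the OpenAI chat completions format. This sketch builds the payloads without sending them. The alias `my-routing-alias` and the fixed model identifier are hypothetical placeholders, not names taken from Chutes.

```python
import json

# Hypothetical identifiers, used only for illustration.
FIXED_MODEL = "example-org/example-model"
ROUTING_ALIAS = "my-routing-alias"


def build_chat_request(model: str, user_message: str) -> dict:
    """Build an OpenAI-style chat completions request body."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }


before = build_chat_request(FIXED_MODEL, "Summarize this changelog.")
after = build_chat_request(ROUTING_ALIAS, "Summarize this changelog.")

# List the top-level fields whose values differ between the two requests.
changed = sorted(key for key in before if before[key] != after[key])

print(json.dumps(after, indent=2))
print("changed fields:", changed)
```

Everything else in the request, including the message list, stays identical, which is why existing client code would not need to change beyond that one string.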

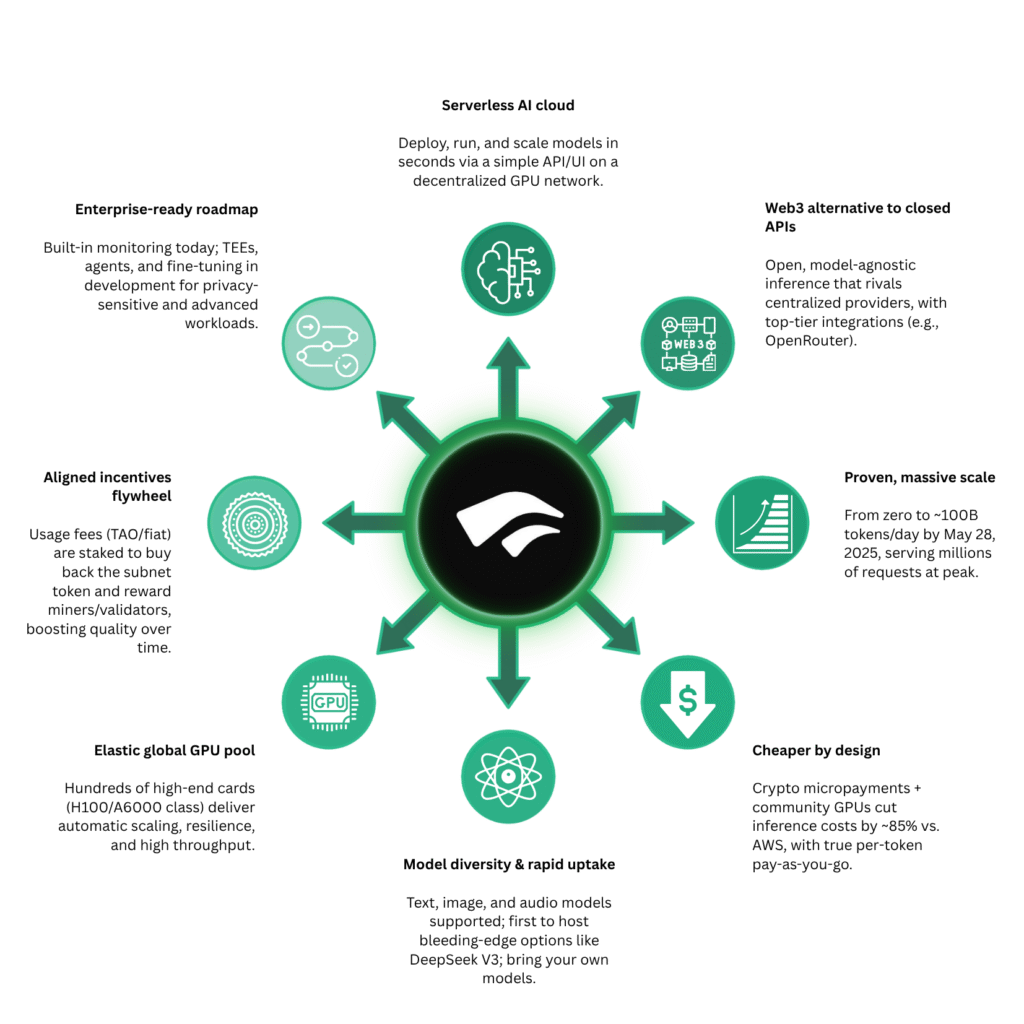

Why Chutes Can Deliver All of This for Less

The economics underneath Chutes are structurally different because it runs on Bittensor Subnet 64, a competitive marketplace where independent GPU (Graphics Processing Unit) operators bid to serve inference. That competition drives prices down.

No single company or individual controls supply. The low prices are the natural output of a permissionless market for compute, and they get passed directly to the end user.

The Free Plan and What You Should Know About It

Chutes Chat is accessible via chutes.ai/chat with no credit card required and immediate access to working models.

The limits are honest:

a. Frontier models including GLM-5, Kimi K2.5, Kimi K2.6, Qwen 3.5, and MiniMax M2.5 are not included on the Base plan

b. Usage limits are tighter than the paid tiers

c. Beyond-limit requests fall back to pay-as-you-go pricing

For frontier model access, the $10 Plus plan is the natural step up. For maximum capability at the best per-token price, the $20 Pro plan delivers more than ChatGPT’s $20 Plus tier across every meaningful axis.

The Quiet Verdict

This is not a debate about which AI assistant is marginally better. It is a question about what kind of product the market actually wants. ChatGPT offers one model, one price, and one set of trade-offs. Chutes offers a market of frontier models, hardware-enforced privacy, intelligent routing, and OpenAI-compatible integration at half the cost.

The advantage is structural, and it grows the more seriously a user engages with the platform. ChatGPT had a three-year head start, but Chutes is building what comes next.
