Every major AI video platform available today shares the same underlying structure: a company controls the model, sets the pricing, decides what can and cannot be generated, and owns the infrastructure you depend on entirely.

For most users, that arrangement has felt acceptable, largely because there was no alternative worth taking seriously. Leoma, built by Rendix Network on Bittensor Subnet 99, is the alternative.

What Leoma AI Does

Leoma is a Bittensor subnet dedicated entirely to AI video generation, engineered from the ground up and production-ready from day one.

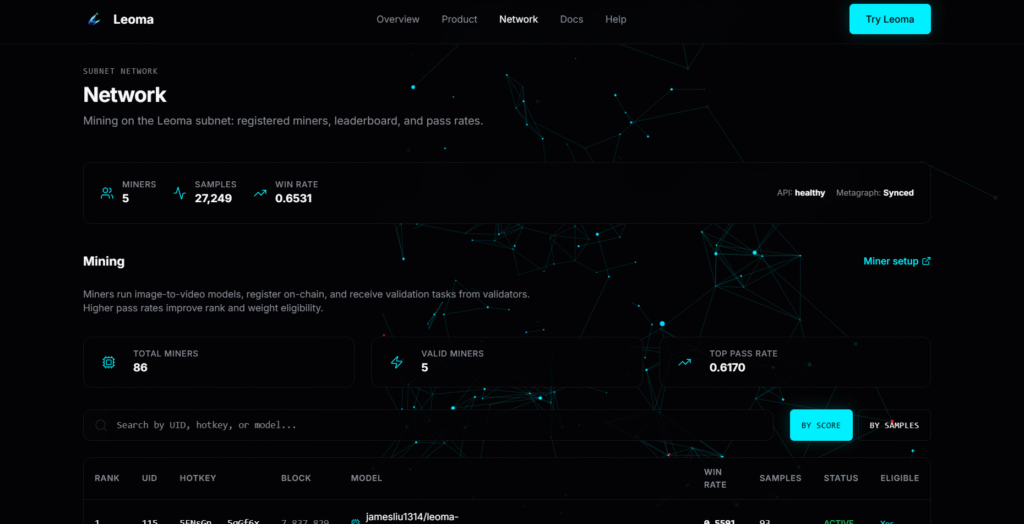

The current implementation supports Text-Image to Video generation, where validators send miners a first frame and a text prompt, miners return a short video, and the output is benchmarked against a real clip to determine whether it passes evaluation. Rankings and rewards flow directly from that performance data, with no central authority deciding who wins.
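As a rough illustration of that round trip, the sketch below models one challenge as plain Python data. Every name in it (`Challenge`, `RoundResult`, `run_round`) is hypothetical rather than Leoma's actual interface, and both the miners and the evaluator are passed in as stand-in functions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Challenge:
    """Hypothetical shape of one task sent from a validator to miners."""
    first_frame: bytes      # first frame taken from a real clip
    prompt: str             # text describing the desired motion
    reference_clip: bytes   # the real clip the output is benchmarked against


@dataclass(frozen=True)
class RoundResult:
    miner_id: str
    passed: bool


def run_round(
    challenge: Challenge,
    miners: dict[str, Callable[[bytes, str], bytes]],
    evaluate: Callable[[bytes, bytes], bool],
) -> list[RoundResult]:
    """Send the same challenge to every miner and record pass/fail."""
    results = []
    for miner_id, generate in miners.items():
        # Each miner sees only the first frame and the prompt.
        video = generate(challenge.first_frame, challenge.prompt)
        # The evaluator compares the generated video with the real clip.
        results.append(RoundResult(miner_id, evaluate(video, challenge.reference_clip)))
    return results
```

In a real deployment the `generate` callables would be calls to each miner's registered endpoint and `evaluate` would be the validator's scoring pipeline; here they are placeholders so the flow can be read end to end.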

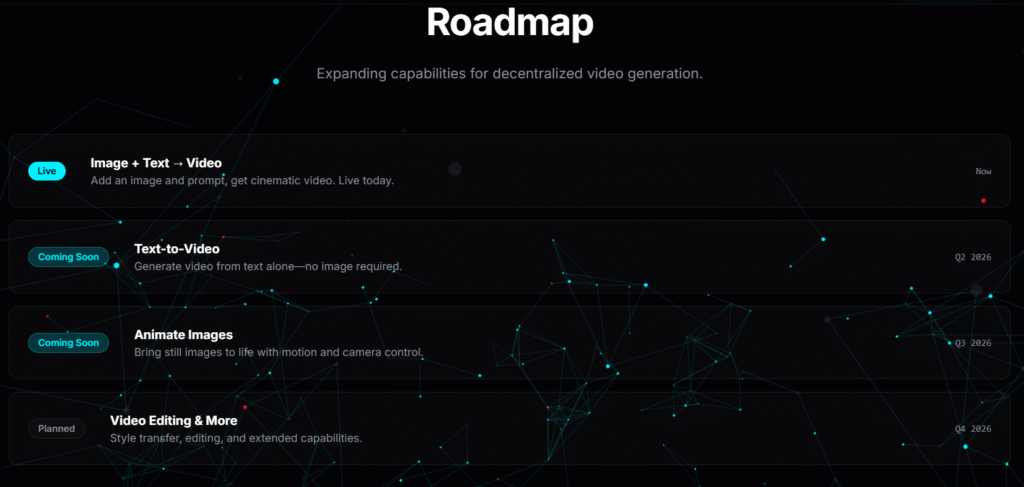

The roadmap extends this foundation to include Text-to-Video and Image-to-Video support, progressively expanding what miners can compete on and what users can generate.

How It Works

The architecture is clean and the roles are clearly defined:

a. Miners fine-tune and deploy video generation models, registering a Hugging Face model and a Chutes endpoint on-chain. A strong pass rate improves their rank and their share of subnet emissions.

b. Validators sample tasks from real clips stored in Hippius S3, distribute challenges to miners, run layered evaluation across multiple quality dimensions, and submit aggregated results to the Leoma API, which computes final weights set on-chain.
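To make the last step concrete, here is a minimal sketch of how aggregated pass results could become weights. The article does not say how the Leoma API actually computes weights, so the rule used here (weight proportional to pass rate) is purely an assumption, as are the function names `pass_rates` and `normalize`.

```python
from collections import defaultdict
from typing import Iterable


def pass_rates(results: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Turn (miner_id, passed) pairs into a pass rate per miner."""
    passed: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for miner_id, ok in results:
        total[miner_id] += 1
        passed[miner_id] += int(ok)
    return {m: passed[m] / total[m] for m in total}


def normalize(rates: dict[str, float]) -> dict[str, float]:
    """Scale pass rates so they sum to 1 (assumed weighting rule)."""
    s = sum(rates.values())
    if s == 0:
        # Nobody passed anything: give every miner zero weight.
        return {m: 0.0 for m in rates}
    return {m: r / s for m, r in rates.items()}
```

For example, a miner that passes both of its challenges and a miner that passes one of two would end up with weights of roughly 0.67 and 0.33 under this assumed rule.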

For end users the experience is simpler still: upload a starting image, describe the motion you want, and receive a high-quality video generated by whichever model is currently performing best across the network.

The Model Underneath

Leoma (Subnet 99) is currently built on Wan2.2, a 14-billion-parameter architecture widely regarded as one of the strongest open-source video generation models available.

Miners are not training from scratch. Instead, they perform targeted fine-tuning on top of this foundation, guided by a structured evaluation pipeline that scores outputs across six distinct dimensions:

a. Fidelity, measuring frame-level realism and artifact suppression,

b. Prompt adherence, assessing semantic alignment with the text conditioning,

c. Motion consistency, evaluating spatial and temporal coherence across frames,

d. Temporal stability, checking for flicker and drift between frames,

e. Visual quality, covering sharpness, composition, and lighting realism, and

f. Camera dynamics, rating cinematic motion and perspective control.

Because the evaluation signal is this granular, miners are incentivized to push meaningful improvements rather than overfit to narrow metrics. As a result, the same base model class produces progressively higher-quality outputs as competition intensifies, which is exactly how decentralized iteration is supposed to work.
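One way to picture a six-dimension evaluation is as a small record of scores plus a pass rule. The sketch below is an assumption throughout: the 0-to-1 scale, the two thresholds, and the `passes` function are hypothetical and are not taken from Leoma's pipeline. Only the six dimension names come from the list above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class DimensionScores:
    """One score per evaluation dimension, assumed to lie in [0, 1]."""
    fidelity: float
    prompt_adherence: float
    motion_consistency: float
    temporal_stability: float
    visual_quality: float
    camera_dynamics: float


def passes(
    scores: DimensionScores,
    overall_min: float = 0.6,   # assumed threshold on the mean score
    per_dim_min: float = 0.4,   # assumed floor for every single dimension
) -> bool:
    """Pass only if the mean is high enough and no dimension falls below the floor."""
    values = [getattr(scores, f.name) for f in fields(scores)]
    mean = sum(values) / len(values)
    return min(values) >= per_dim_min and mean >= overall_min
```

The per-dimension floor is the design choice worth noticing: a model that maxes out five dimensions but fails badly on the sixth still does not pass, which is one simple way a granular signal can discourage overfitting to a narrow metric.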

Why This Model Beats the Alternative

Centralized video generation platforms come with a predictable set of constraints: rate limits, content restrictions, corporate pricing, and a single point of failure if the service goes down or changes its terms.

Leoma’s structure inverts every one of these:

a. No single gatekeeper controls access or output quality,

b. The best model wins every request, not the one a company decided to promote,

c. Top miner models are open-sourced, letting miners build on each other’s progress toward state-of-the-art performance, and

d. The network runs across distributed infrastructure with no single point of failure.

This is more than a philosophical position. It is a practical architecture in which competition produces better outputs over time for everyone using the network.

Built for Anyone Who Creates

Leoma is designed to be useful across a wide range of creative and commercial contexts. Content creators can generate short clips and motion graphics for social platforms without expensive tooling.

Marketing teams can ship ad creatives, product demos, and explainer videos without agency timelines. Developers can integrate directly via API and build applications and workflows on top of the best-performing models the network produces.

The mining competition is live now, the infrastructure is ready, and the quality ceiling is only going to rise as more models join and compete.
