Cover Image Credit: Platform

Most projects in the Bittensor ($TAO) ecosystem launch with a working product and a roadmap, but Cortex Foundation did something different. They published a 41-page whitepaper for Platform (Bittensor Subnet 100) that proposes a formal argument for why centralized AI development is structurally incapable of sustained innovation, then builds a network designed to make that argument empirically true.

It is probably the most ambitious document ever released in the Bittensor ecosystem, and it deserves more attention than it has received.

The Central Argument

Every major AI lab trains its models on a single architecture chosen before training begins, decided by a small internal team, and frozen for the entire training run. That single decision determines the performance ceiling of everything that follows, regardless of how much compute gets spent afterward.

The whitepaper uses DeepSeek’s trajectory to make the point. Each generation of DeepSeek models introduced a new architectural component that delivered efficiency gains no amount of additional compute could replicate:

a. DeepSeek-V2’s Multi-head Latent Attention reduced training costs by 42.5%,

b. DeepSeek-V3 trained a 671 billion parameter model for $5.6 million and is competitive with GPT-4, whose training is estimated to have cost $50 to $100 million, and

c. DeepSeek-V4 achieved million-token inference at 27% of the previous generation’s compute cost.

Every gain was architectural, not computational. A lab that commits to the wrong architecture spends hundreds of millions training a fundamentally suboptimal model, with no internal mechanism to discover that a single structural change would have produced better results at a fraction of the cost.
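To put the quoted figures side by side, here is a minimal arithmetic sketch. It uses only the numbers cited above (the GPT-4 range is itself an estimate), and the helper name `cost_ratio` is purely illustrative.

```python
# Figures as quoted in the article; the GPT-4 range is an outside estimate.
DEEPSEEK_V3_COST_USD = 5.6e6
GPT4_COST_RANGE_USD = (50e6, 100e6)
MLA_TRAINING_COST_REDUCTION = 0.425   # DeepSeek-V2, as quoted
V4_RELATIVE_INFERENCE_COST = 0.27     # DeepSeek-V4 vs. previous generation, as quoted


def cost_ratio(reference_cost: float, candidate_cost: float) -> float:
    """Return how many times cheaper the candidate is than the reference."""
    return reference_cost / candidate_cost


low, high = (cost_ratio(c, DEEPSEEK_V3_COST_USD) for c in GPT4_COST_RANGE_USD)
remaining_v2_cost = 1.0 - MLA_TRAINING_COST_REDUCTION
v4_speedup = 1.0 / V4_RELATIVE_INFERENCE_COST

print(f"DeepSeek-V3 vs. GPT-4 estimate: {low:.1f}x to {high:.1f}x cheaper")
print(f"DeepSeek-V2 training cost remaining: {remaining_v2_cost:.1%}")
print(f"DeepSeek-V4 compute efficiency: about {v4_speedup:.1f}x")
```

On these numbers the V3 training run comes out roughly 9 to 18 times cheaper than the GPT-4 estimate, which is the scale of gap the architectural argument rests on.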

Platform’s argument is that this is not fixable by hiring more researchers or spending more money. It is a structural limitation of centralized development, and the only solution is to make architecture itself a competitive search space rather than a fixed constraint.

The Four-Challenge Pipeline

What separates Platform from every other Bittensor subnet is that its four challenges form a complete production chain where each stage feeds directly into the next, creating a closed loop that continuously improves its own capability:

a. PRISM searches for the optimal architecture by having miners propose and compete over configurations across a vast combinatorial space. A four-tier evaluation funnel progressively filters candidates from basic validation through proxy scoring, short training runs, and full benchmarking.

Platform formally proves through its Architecture Search Superiority Theorem that a subnet searching over architectures achieves strictly superior capability compared to any fixed-architecture competitor.

b. The Training Challenge takes the architecture discovered by PRISM and trains a base model in a fully decentralized manner, with no central coordinator, no trusted aggregator, and no single point of failure.

It is the direct successor to Covenant-72B.

c. Data Fabrication produces high-quality prompt-response pairs for reinforcement learning fine-tuning, using a two-pass anti-plagiarism pipeline to ensure the synthetic data genuinely improves the model rather than introducing noise.

d. The Term Challenge evaluates the resulting agent through SWE-Forge, a continuously regenerating benchmark that extracts real merged pull requests from GitHub every epoch. Because the benchmark renews continuously, data contamination is structurally impossible.

Term Challenge evaluation results feed back into architecture search and training strategy, creating a network that optimizes its own production pipeline without any central authority directing the process.
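The four-tier funnel described for PRISM can be sketched as a sequence of progressively more expensive filters. This is a toy illustration only: the `Candidate` fields, the `keep_fraction` cutoff, and the precomputed scores are all assumptions made for the example, not details from the whitepaper. In a real system each score would be computed only for the candidates that survive the previous tier.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Candidate:
    """Hypothetical architecture proposal with stand-in scores per tier."""
    name: str
    valid: bool              # tier 1: basic validation
    proxy_score: float       # tier 2: cheap proxy metric
    short_run_score: float   # tier 3: short training run
    benchmark_score: float   # tier 4: full benchmark


def keep_top(cands: List[Candidate], key: Callable[[Candidate], float],
             fraction: float) -> List[Candidate]:
    """Keep the best `fraction` of candidates (at least one) by `key`."""
    if not cands:
        return []
    k = max(1, int(len(cands) * fraction))
    return sorted(cands, key=key, reverse=True)[:k]


def run_funnel(cands: List[Candidate],
               keep_fraction: float = 0.5) -> Optional[Candidate]:
    """Filter through four tiers and return the surviving best candidate."""
    tier1 = [c for c in cands if c.valid]
    tier2 = keep_top(tier1, lambda c: c.proxy_score, keep_fraction)
    tier3 = keep_top(tier2, lambda c: c.short_run_score, keep_fraction)
    return max(tier3, key=lambda c: c.benchmark_score) if tier3 else None


proposals = [
    Candidate("a", True, 0.90, 0.70, 0.60),
    Candidate("b", True, 0.80, 0.80, 0.75),
    Candidate("c", False, 0.99, 0.99, 0.99),  # rejected at validation
    Candidate("d", True, 0.30, 0.90, 0.90),   # dropped by the proxy tier
    Candidate("e", True, 0.70, 0.60, 0.50),
]
winner = run_funnel(proposals)
print(winner.name if winner else "no candidate survived")
```

Note that candidate "d" would have scored best on the full benchmark but never reaches it. That is the usual trade-off of a funnel: cheap early tiers save compute at the risk of discarding candidates the proxy misjudges.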

What Is Already Delivered

Separating what Platform has demonstrated from what it is promising matters, and the whitepaper is honest about that distinction.

Covenant-72B is the most important empirical proof in the document. Trained across at least 70 independent nodes distributed across multiple continents with no single controlling entity, it achieved an MMLU score of 67.1, surpassing Meta’s LLaMA-2-70B at 65.6, which was trained on Meta’s own centralized infrastructure. Capability parity at frontier scale has been reached. Decentralized training is no longer theoretical.

SWE-Forge is live and in production. The continuous benchmark generator works, the six-layer anti-manipulation pipeline is deployed, and the code is open source. The transition from Terminal-Bench 2.0 to SWE-Forge is documented as a case study in honest self-correction: Terminal-Bench 2.0 was retired after miners developed strategies that maximized scores without improving real capability.

Platform acknowledged the failure and fixed it rather than defending a broken benchmark.

The validator incentive framework is formalized through three game-theoretic proofs establishing that:

a. Honest evaluation is a Nash equilibrium under Yuma Consensus,

b. Coalitions controlling less than the majority stake threshold are structurally inefficient, and

c. Deviation from consensus is automatically self-penalizing through reduced emissions.

These are mathematical properties of the protocol, not promises.

What Is Still Ahead

Platform 3.0, targeting May 2026, activates all four challenges simultaneously for the first time. The roadmap is ambitious and the whitepaper is direct about what has not shipped:

a. PRISM enters active evaluation in early May, though its repository remains private and the final evaluation design may evolve as research progresses,

b. The Training Challenge is still in active research with genuinely hard open questions around gradient aggregation under heterogeneous hardware, straggler mitigation without central coordination, and honest contribution verification at minimal cost,

c. Data Fabrication is upcoming alongside PRISM under Platform 3.0, and

d. Atlas 3.0 introduces autonomous emission allocation, adjusting challenge weights dynamically based on participation rates and demonstrated impact rather than fixed percentages or manual governance votes, with governance running continuously rather than in discrete epochs.
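One way to picture dynamic emission allocation is a weight per challenge derived from its participation and impact signals, renormalized on every update. The sketch below is an assumption throughout: the multiplicative rule, the function name `allocate_emissions`, and the example numbers are invented for illustration and are not taken from the Atlas 3.0 design.

```python
from typing import Dict, Tuple


def allocate_emissions(signals: Dict[str, Tuple[float, float]]) -> Dict[str, float]:
    """Map (participation, impact) per challenge to weights that sum to 1.

    Hypothetical rule: raw weight = participation * impact. If every raw
    weight is zero, fall back to an equal split.
    """
    if not signals:
        return {}
    raw = {name: participation * impact
           for name, (participation, impact) in signals.items()}
    total = sum(raw.values())
    if total == 0:
        return {name: 1.0 / len(raw) for name in raw}
    return {name: value / total for name, value in raw.items()}


# Made-up signals, purely for illustration.
example_signals = {
    "PRISM": (0.5, 0.8),
    "Training Challenge": (0.25, 0.4),
    "Data Fabrication": (0.5, 0.2),
    "Term Challenge": (0.8, 0.5),
}
weights = allocate_emissions(example_signals)
for challenge, weight in weights.items():
    print(f"{challenge}: {weight:.2f}")
```

The point of the sketch is only the shape of the mechanism: weights follow measured signals and are recomputed continuously, with no fixed percentages and no vote.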

The most ambitious commitment in the whitepaper is producing a state-of-the-art open source model trained entirely through Platform, from architecture search through training to evaluation, with no external runs and no centralized orchestration. If it materializes, it is a defining milestone for decentralized AI.

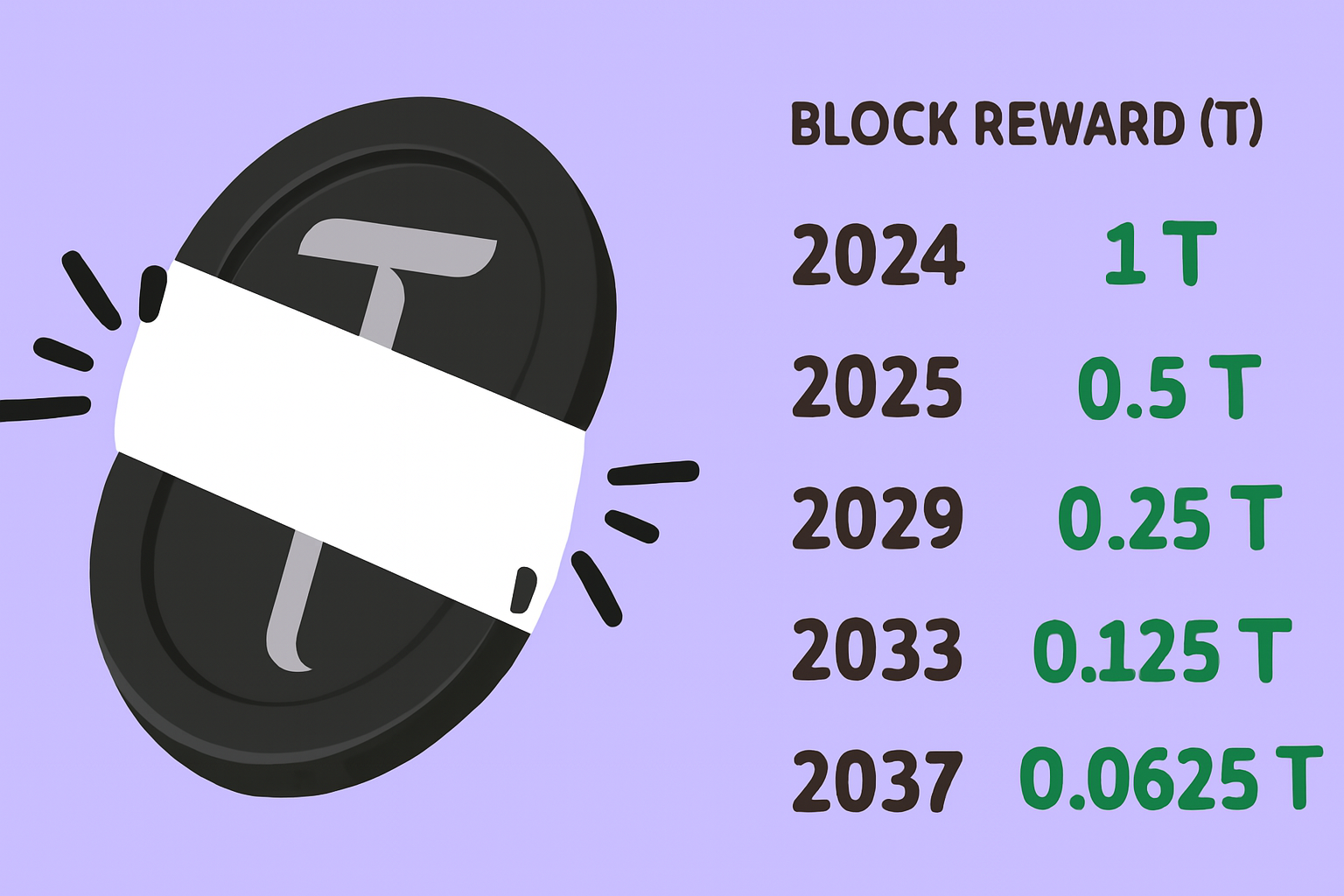

The Economic Argument

Platform makes a quantitative case for why $TAO-denominated rewards can outcompete lab salaries for AI talent. Centralized labs draw from an effective pool of roughly 15,000 to 20,000 candidates globally, constrained by PhD requirements, visa eligibility, and geographic concentration. Platform’s addressable pool is bounded only by internet access and demonstrated capability.

The structural difference is significant:

a. A miner anywhere in the world earns the same $TAO per unit of demonstrated capability as a PhD researcher in San Francisco,

b. No hiring committee, no visa requirement, no cost-of-living adjustment affects reward distribution, and

c. Rewards flow proportionally to benchmark performance, with no knowledge of the miner’s identity, credentials, or location.

The whitepaper estimates Platform’s effective talent pool is two to three orders of magnitude larger than the centralized alternative, an advantage that is not incremental but structural and compounds over time as the network grows.
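The two quantitative claims here can be made concrete with a short sketch. The pool figures come from the text above. The linear payout rule in `distribute_rewards` is a simplifying assumption for illustration, not the actual emission mechanism, and the miner IDs and scores are invented.

```python
from typing import Dict

# Talent pool figures as quoted in the article.
CENTRALIZED_POOL = (15_000, 20_000)
ORDERS_OF_MAGNITUDE = (2, 3)

pool_low = CENTRALIZED_POOL[0] * 10 ** ORDERS_OF_MAGNITUDE[0]
pool_high = CENTRALIZED_POOL[1] * 10 ** ORDERS_OF_MAGNITUDE[1]
print(f"Implied addressable pool: {pool_low:,} to {pool_high:,} people")


def distribute_rewards(scores: Dict[str, float], emission: float) -> Dict[str, float]:
    """Split `emission` in proportion to benchmark score.

    The function sees only an opaque miner ID and a score: no identity,
    credentials, or location enter the calculation.
    """
    total = sum(scores.values())
    if total <= 0:
        return {uid: 0.0 for uid in scores}
    return {uid: emission * score / total for uid, score in scores.items()}


# Hypothetical miners and scores.
payouts = distribute_rewards({"miner-1": 3.0, "miner-2": 1.0}, emission=100.0)
print(payouts)
```

Taking the quoted range at face value, two to three orders of magnitude above 15,000 to 20,000 implies a pool of roughly 1.5 million to 20 million potential contributors.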

Three Questions Worth Sitting With

The whitepaper raises broader questions that apply to the entire Bittensor ecosystem, not just Subnet 100.

The First is Structural: Most current Bittensor subnets replicate centralized API hosting in a decentralized form. Platform argues explicitly that this creates no structural competitive advantage over centralized labs. If the argument holds, a significant portion of the ecosystem is suboptimal by construction.

The Second is Economic: The incentive flywheel Platform describes works as long as $TAO price sustains meaningful reward value. A prolonged price compression would stress-test the model in ways that technical challenges would not, and this dependency deserves honest acknowledgment.

The Third is Methodological: Platform does not just promise; it proves. The Architecture Search Superiority Theorem is formalized mathematics, the validator propositions are game-theoretic guarantees, Covenant-72B is empirical evidence, and SWE-Forge is code in production.

This level of rigor raises the standard for what serious decentralized AI research looks like, and it sets a bar that most projects in the space have not yet approached.

Conclusion

The Platform whitepaper is a serious intellectual argument that centralized AI development has a structural ceiling, paired with a working system designed to demonstrate that the ceiling can be broken from the outside. The empirical evidence is real, the mathematical proofs are formalized, and the roadmap is honest about what has and has not shipped.

Execution risk is real, but the framework Platform has built and the milestones it has already reached represent something genuinely different from what the rest of the Bittensor ecosystem has produced so far.
