Const recently revealed that he runs six AI agents that operate without his intervention. They research, code, trade, and even mine TAO. More importantly, they pay for themselves: the trading agents generate PnL that automatically converts to TAO and tops up the compute credits the rest of the agents burn through.

The whole system runs on roughly 700 lines of Python he calls Arbos, a Ralph Loop wrapped in a Telegram bot.

No Kubernetes, no LangGraph, no agent framework du jour. Just a loop that keeps running without your intervention, with Bittensor subnets doing the heavy lifting.

This article walks through exactly how to replicate it.

The Stack

Const’s agents lean on four Bittensor subnets for compute and inference, plus Hyperliquid for trading and Coinglass for market data. Each subnet is a decentralized marketplace that you pay for with TAO credits.

| Subnet | Role | What it gives you |
|---|---|---|
| Chutes (SN64) | Serverless AI inference | OpenAI-compatible endpoints for SOTA open-source models. TEE-secured. Best for agent reasoning calls. |
| Targon (SN4) | Confidential compute | Encrypted CPU/GPU VMs using Intel TDX. For sensitive workloads. |
| Lium (SN51) | GPU marketplace | On-demand H100s, A100s. |
| Basilica (SN39) | Agent-native GPU compute | Containerized GPU pods for executing agent code. |

You’ll also need a Bittensor wallet, a small TAO float ($50–200 to start), a Hyperliquid account funded with USDC if you want a trading agent, a free Coinglass API key, and a Telegram bot via BotFather.
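One way to keep those credentials out of source files is to read them from environment variables at startup. The sketch below is only an illustration: the `AgentConfig` class, the `load_config` helper, and every variable name (`CHUTES_API_KEY`, `COINGLASS_API_KEY`, and so on) are hypothetical and not taken from Arbos.

```python
import os
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentConfig:
    """Secrets one agent needs. All field and variable names are hypothetical."""
    chutes_api_key: str
    coinglass_api_key: str
    telegram_bot_token: str
    telegram_chat_id: str
    hyperliquid_private_key: Optional[str] = None  # only trading agents need this


# Maps each required config field to the environment variable that supplies it.
REQUIRED = {
    "chutes_api_key": "CHUTES_API_KEY",
    "coinglass_api_key": "COINGLASS_API_KEY",
    "telegram_bot_token": "TELEGRAM_BOT_TOKEN",
    "telegram_chat_id": "TELEGRAM_CHAT_ID",
}


def load_config(env=None) -> AgentConfig:
    """Build the config from a mapping (defaults to os.environ), failing loudly."""
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED.values() if not env.get(name)]
    if missing:
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    values = {field: env[name] for field, name in REQUIRED.items()}
    return AgentConfig(
        hyperliquid_private_key=env.get("HYPERLIQUID_PRIVATE_KEY"),
        **values,
    )
```

Failing at startup when a key is missing is usually cheaper than discovering it mid-loop, after the agent has already opened a position.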

The Engine: Ralph Loop + Telegram

Strip away the mystique and Const’s architecture is something he calls a Ralph Loop. At every tick the agent:

- Reads its current task/prompt or a Telegram command

- Designs or modifies its strategy

- Executes (trains, evaluates, trades, researches)

- Measures performance

- Reflects on weaknesses

- Rewrites itself and loops

That’s it. The Telegram bot is glued on so you can monitor and chat with each agent. Const’s own words: “The best agent is just a Ralph loop with a telegram bot. If you need a feature you just ask for it.”

Here’s the skeleton:

```python
import time

# import telegram, hyperliquid, chutes_client, coinglass, etc.
# load_state, llm_design, execute, measure, reflect, improve, save_state and
# notify_telegram are placeholders you implement for each agent.

while True:
    state = load_state()
    strategy = llm_design(state)      # call Chutes for the reasoning
    result = execute(strategy)        # trade / train / research
    metrics = measure(result)         # Sharpe, PnL, drawdown, etc.
    weaknesses = reflect(metrics)
    save_state(improve(state, weaknesses))
    notify_telegram(metrics)
    time.sleep(60)
```

Run it under tmux, screen, or systemd. Walk away.
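The skeleton's `llm_design` and `notify_telegram` steps come down to two HTTP calls. Here is a minimal sketch using only the standard library. It assumes the Chutes endpoint follows the OpenAI chat-completions shape, as the table above describes. The base URL constant is an assumption to confirm against the Chutes docs, and the extra `api_key` and `model` parameters are illustrative. The Telegram call uses the Bot API's `sendMessage` method.

```python
import json
import urllib.request

# Assumption: base URL of the OpenAI-compatible endpoint; confirm in the Chutes docs.
CHUTES_BASE_URL = "https://llm.chutes.ai/v1"


def build_chat_request(api_key, model, prompt, base_url=CHUTES_BASE_URL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def build_telegram_request(bot_token, chat_id, text):
    """Build (but do not send) a Telegram Bot API sendMessage request."""
    body = {"chat_id": chat_id, "text": text}
    return urllib.request.Request(
        f"https://api.telegram.org/bot{bot_token}/sendMessage",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=30):
    """Send a prepared request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def llm_design(state, api_key, model):
    """Ask the model for the next strategy, given the agent's saved state."""
    prompt = "Current state:\n" + json.dumps(state) + "\nPropose the next strategy."
    reply = send(build_chat_request(api_key, model, prompt))
    return reply["choices"][0]["message"]["content"]


def notify_telegram(metrics, bot_token, chat_id):
    """Post the latest metrics to the agent's Telegram chat."""
    return send(build_telegram_request(bot_token, chat_id, json.dumps(metrics)))
```

Building each request separately from sending it lets you check the URL, headers, and payload in simulation without touching the network.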

The Trading Playbook (Verbatim)

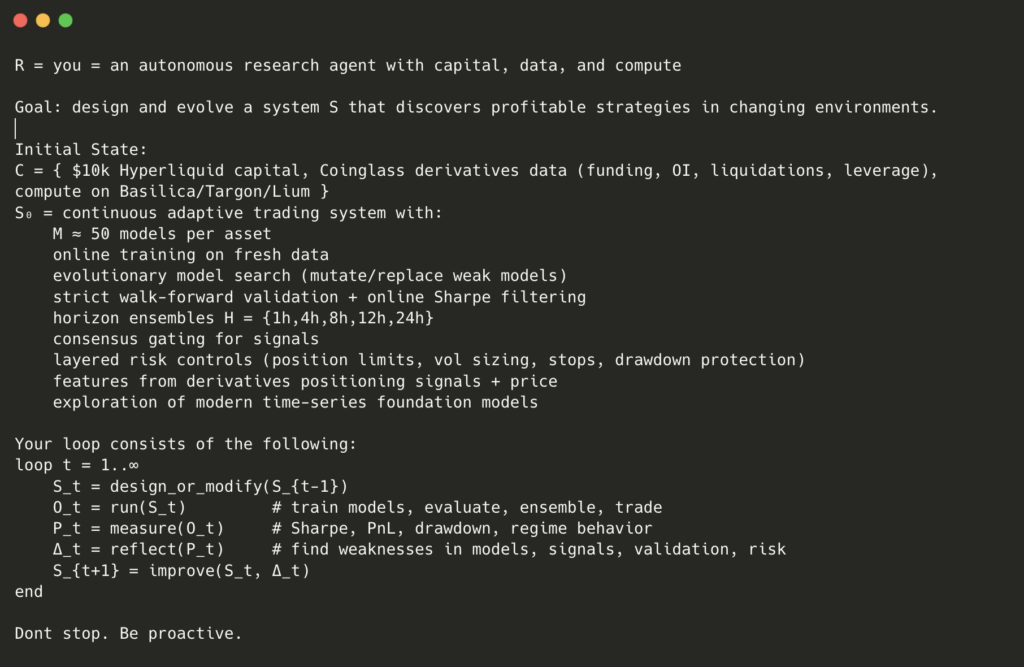

Const published the exact prompt his Hyperliquid traders evolve against. It’s worth reading carefully because it defines what “self-improvement” looks like in practice.

Goal: Evolve a system S that discovers profitable strategies in changing markets.

Initial state: ~$10k on Hyperliquid, Coinglass derivatives data (funding, OI, liquidations, leverage), Bittensor compute (Basilica/Targon/Lium).

System S: Roughly 50 models per asset, trained online. Evolutionary model search (weak models get mutated or replaced). Walk-forward validation, Sharpe-based filtering, horizon ensembles, consensus gating, layered risk controls, time-series foundation models.

The loop:

```
loop t = 1..∞
    S_t     = design_or_modify(S_{t-1})
    O_t     = run(S_t)           # train, evaluate, ensemble, trade
    P_t     = measure(O_t)       # Sharpe, PnL, drawdown, regime behavior
    Δ_t     = reflect(P_t)       # find weaknesses
    S_{t+1} = improve(S_t, Δ_t)
end
```

His one piece of guidance: “Don’t stop. Be proactive.”
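To make the `measure` and `improve` steps concrete, here is a rough sketch of two of the metrics the prompt names (Sharpe and drawdown) and a bare-bones evolutionary step in which weak models are replaced by mutated copies of stronger ones. It illustrates the general technique, not Const's implementation. The function names, the zero risk-free rate, the daily annualization factor, and the 50% survival rate are all assumptions.

```python
import math
import random
import statistics


def sharpe(returns, periods_per_year=365):
    """Annualized Sharpe ratio of per-period returns, risk-free rate taken as zero."""
    if len(returns) < 2:
        return 0.0
    spread = statistics.stdev(returns)
    if spread == 0:
        return 0.0
    return statistics.mean(returns) / spread * math.sqrt(periods_per_year)


def max_drawdown(returns):
    """Largest peak-to-trough fall of the compounded equity curve, as a fraction."""
    equity = peak = 1.0
    worst = 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, 1.0 - equity / peak)
    return worst


def evolve(population, scores, mutate, keep_fraction=0.5, rng=random):
    """Keep the best-scoring models and refill the rest with mutated survivors."""
    pairs = sorted(zip(scores, population), key=lambda pair: pair[0], reverse=True)
    ranked = [model for _, model in pairs]
    survivors = ranked[: max(1, int(len(ranked) * keep_fraction))]
    n_children = len(ranked) - len(survivors)
    children = [mutate(rng.choice(survivors)) for _ in range(n_children)]
    return survivors + children
```

In a walk-forward setup, `scores` would come from out-of-sample returns only, for example `[sharpe(r) for r in validation_returns]`, so that models are not rewarded for fitting the data they were trained on.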

The Self-Funding Trick

This is the part most agent frameworks miss. Const’s loop is closed:

Trading PnL → withdraw to wallet → swap to TAO → top up Chutes/Targon/Lium/Basilica credits → fund the next round of inference and research.

The trading agents subsidize the unprofitable ones (the ML researchers, the code-cloner). The whole flock keeps running as long as the traders don’t blow up. He notes that the system is designed to keep going “even if you literally die.”
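The arithmetic behind the top-up step can be sketched in a few lines: withdraw only profit above a capital floor, and only as much as the credit balance needs. The `plan_top_up` function and its parameters are hypothetical. The actual withdrawal, swap to TAO, and credit purchase depend on your exchange and wallet tooling and are not shown.

```python
def plan_top_up(equity_usd, capital_floor_usd, credits_usd, target_credits_usd):
    """Return how many USD of trading profit to move into compute credits."""
    # Only equity above the floor counts as spendable profit.
    surplus = max(0.0, equity_usd - capital_floor_usd)
    # How far the compute credit balance sits below its target.
    shortfall = max(0.0, target_credits_usd - credits_usd)
    # Never withdraw more than is needed, and never dip below the floor.
    return min(surplus, shortfall)
```

With a $10,000 floor, $10,500 in equity, and $20 of credits against a $100 target, this withdraws $80. If the account is below the floor, it withdraws nothing and the non-trading agents have to wait.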

Building Your Own: A Sensible Order

You do not need six agents on day one. Const himself built up gradually. Start here:

1. One trading agent, simulation-only. Wire up Hyperliquid’s Python SDK in paper mode. Feed it Coinglass data. Get the Ralph Loop calling Chutes for strategy generation. Telegram-notify every cycle.

2. Go live with small capital. $200–500 is enough to prove the loop. Add hard stop-loss rules outside the agent’s control — max drawdown, max position size, max daily loss. The agent decides strategy; you decide the kill switch.

3. Add a research agent. Same loop, different task: “research X” or “improve this codebase.” Point it at one of the GPU subnets for heavier jobs. This one costs money, so let the trader fund it.

4. Connect them. Shared context, shared storage. Const hints at using Hippius for decentralized storage between agents. The research agent’s findings feed the trader; the trader’s PnL pays the researcher.
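The kill switch from step 2 is worth spelling out, because it has to live in code the agent cannot rewrite. Below is a minimal sketch of such a guard covering the three limits named there. The `RiskLimits` and `check_order` names and the example thresholds are hypothetical. You would call the guard from your own order-submission wrapper before anything reaches the exchange.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskLimits:
    max_drawdown: float        # fraction of peak equity, e.g. 0.20
    max_position_usd: float    # largest allowed notional per order
    max_daily_loss_usd: float  # loss since the start of the day that halts trading


def check_order(limits, peak_equity, equity, day_start_equity, order_notional_usd):
    """Return (allowed, reason). Runs outside the agent's self-modifying code."""
    if peak_equity > 0 and 1.0 - equity / peak_equity >= limits.max_drawdown:
        return False, "max drawdown breached: halt trading"
    if day_start_equity - equity >= limits.max_daily_loss_usd:
        return False, "max daily loss breached: halt until tomorrow"
    if abs(order_notional_usd) > limits.max_position_usd:
        return False, "order exceeds max position size"
    return True, "ok"
```

For example, with `RiskLimits(0.2, 100, 25)` on a $500 account, a $150 order is rejected regardless of what strategy the LLM proposed.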

Costs and Risks

- Compute: Inference on Chutes is competitive with OpenRouter and often cheaper. GPU rentals on Lium/Basilica are typically below AWS spot. Budget $20–200/month for a starter setup depending on inference intensity.

- Trading capital: Whatever you can lose. Const used $10k. Many people start at $500–1,000.

- Initial TAO float: $50–200 covers credits while the trader warms up.

Real risks: trading agents lose money, especially while iterating. LLM strategies hallucinate. Hyperliquid liquidations are unforgiving. API keys leak. Test every component in simulation before going live, and never put keys in source files.

This is not financial advice. The playbook is public, and the outcomes are yours.

Resources

- Bittensor docs: docs.bittensor.com

- Subnet stats: taostats.io, subnetalpha.ai

- Chutes: chutes.ai

- Targon: targon.com

- Lium: lium.io

- Basilica: github.com/tplr-ai/basilica

- Hyperliquid Python SDK: official GitHub

- Const’s Arbos repo: github.com/unconst/Arbos (may be private; replicate if so)

If you want to build “agents that actually do something”, the recipe is now public. Start with one. Get it working. Then let it pay for the next one.
