Open competition moves faster than closed development, it self-corrects, and it produces results that nobody on a fixed roadmap would have predicted on the timeline they arrived at. That is exactly what just happened on the Affine (Bittensor Subnet 120).

One week of live miner competition produced Affine-I, a model that has overtaken Qwen3-32B across most benchmarks, with its biggest leads landing precisely where they matter most for agentic AI: software engineering, terminal task execution, and long-context retrieval.

This is not only a marginal improvement on a leaderboard but also a testament to what incentivized permissionless model competition is capable of producing.

What the Benchmarks Show

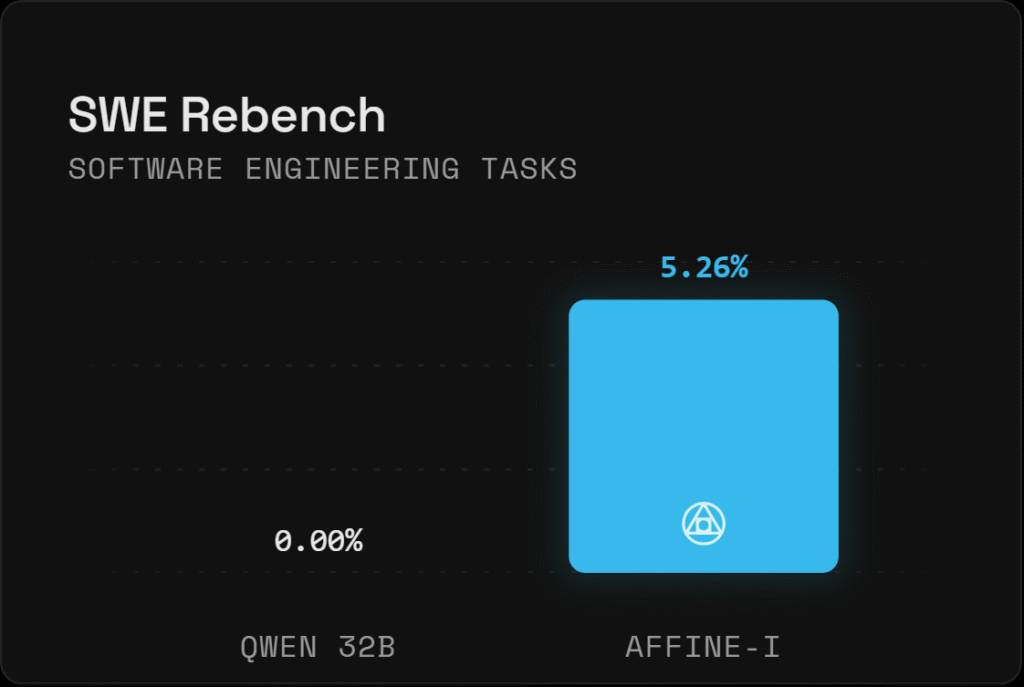

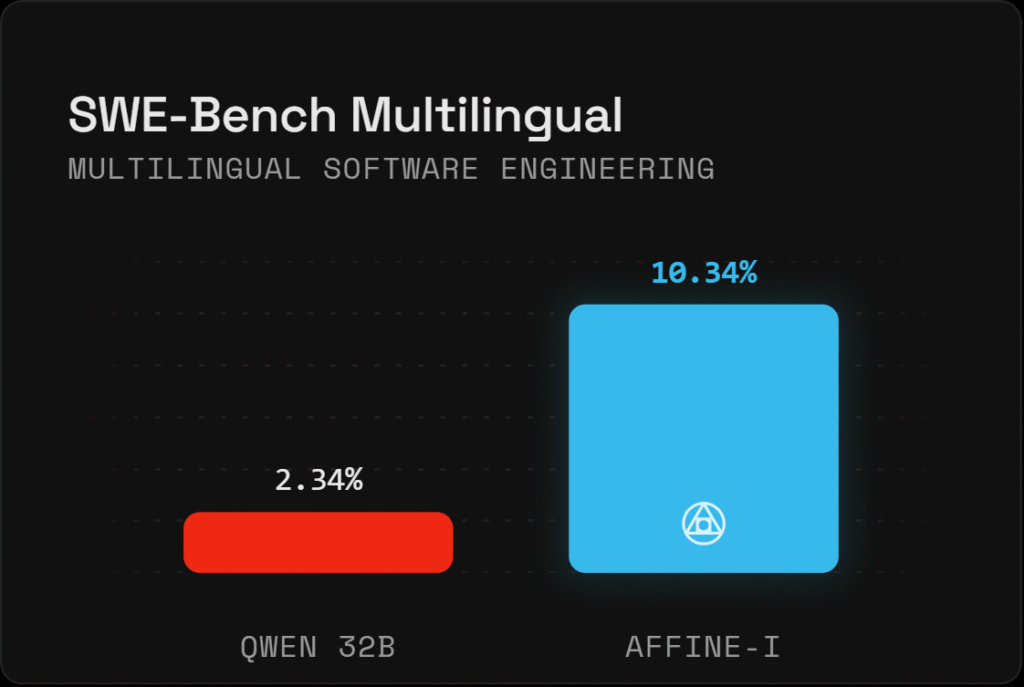

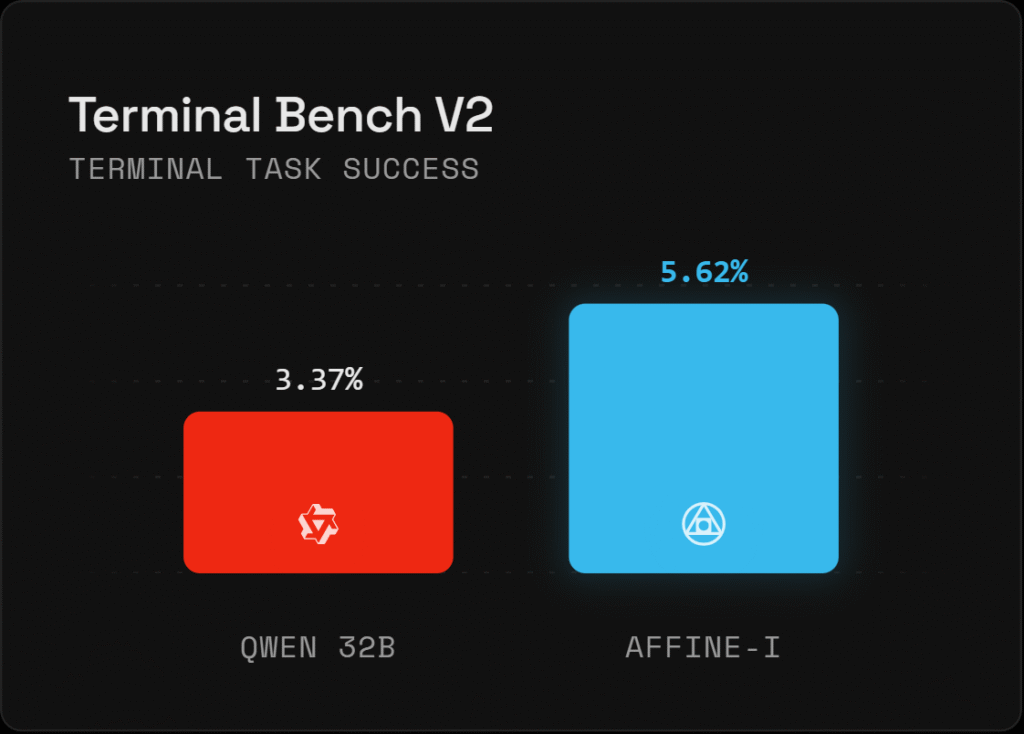

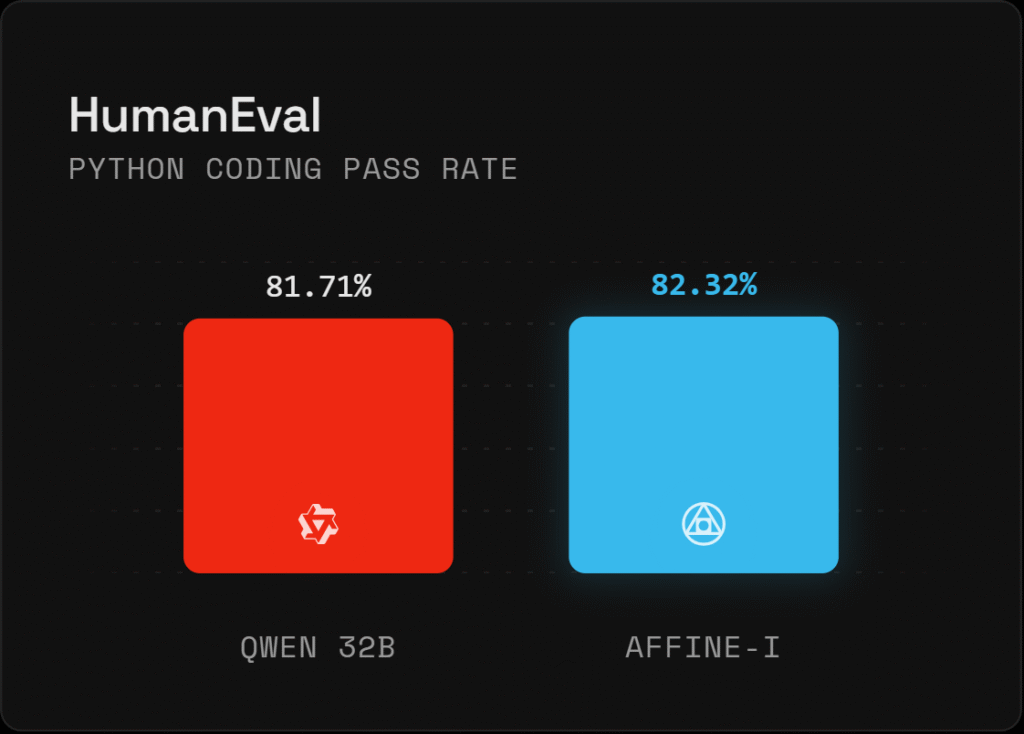

The comparison between Affine-I and Qwen3-32B ran across eight benchmarks. Affine-I led on six of them, with the margins on the most contamination-resistant tests being the most telling:

a. SWE-Rebench: 5.26% versus 0.00%. This is the number that matters most, and we will come back to it,

b. SWE-Bench Multilingual: 10.34% versus 2.34%, Affine-I’s largest margin across the entire suite.

c. Terminal Bench V2: 5.62% versus 3.37%, measuring real terminal task success in live agentic workflows.

d. HumanEval: 82.32% versus 81.71%, a consistent lead on Python coding pass rate.

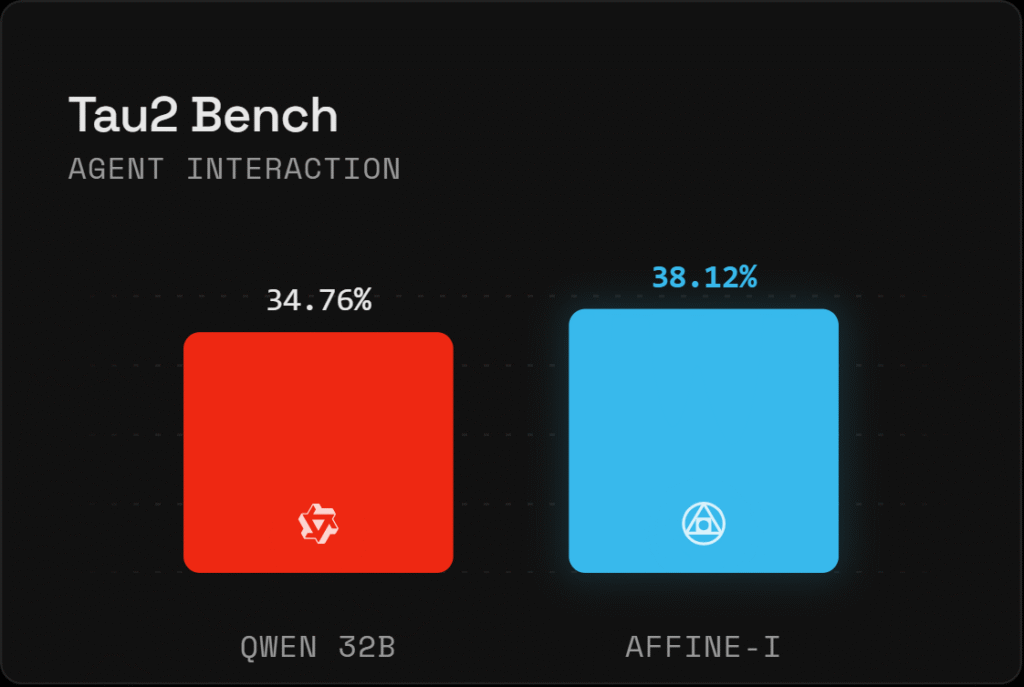

e. Tau2 Bench: 38.12% versus 34.76%, covering agent interaction quality.

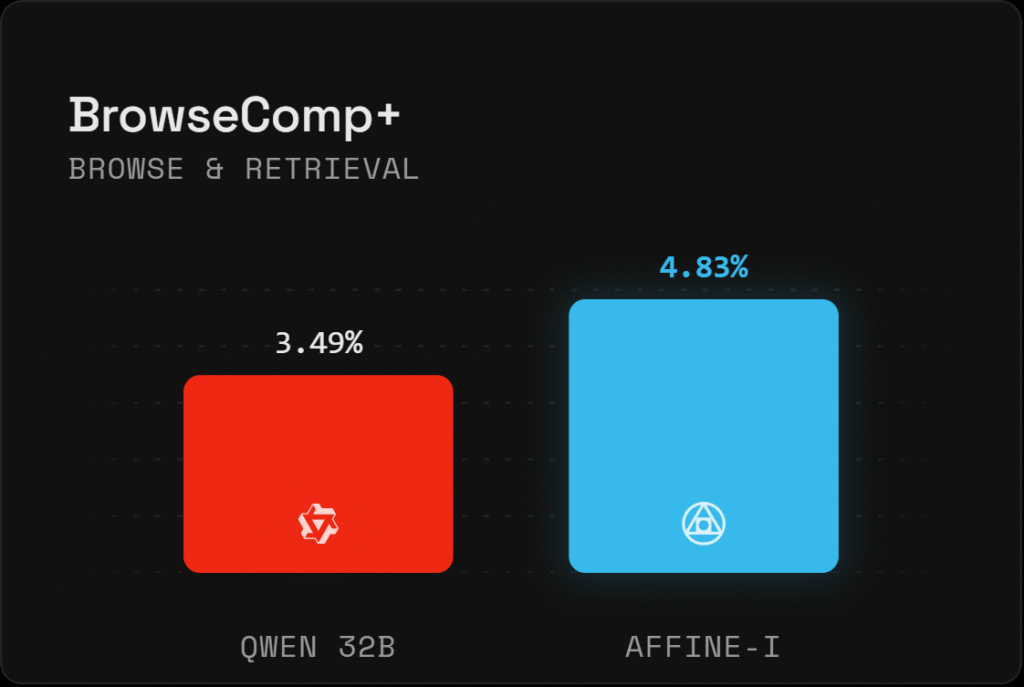

f. BrowseComp+: 4.83% versus 3.49%, measuring browse and retrieval performance.

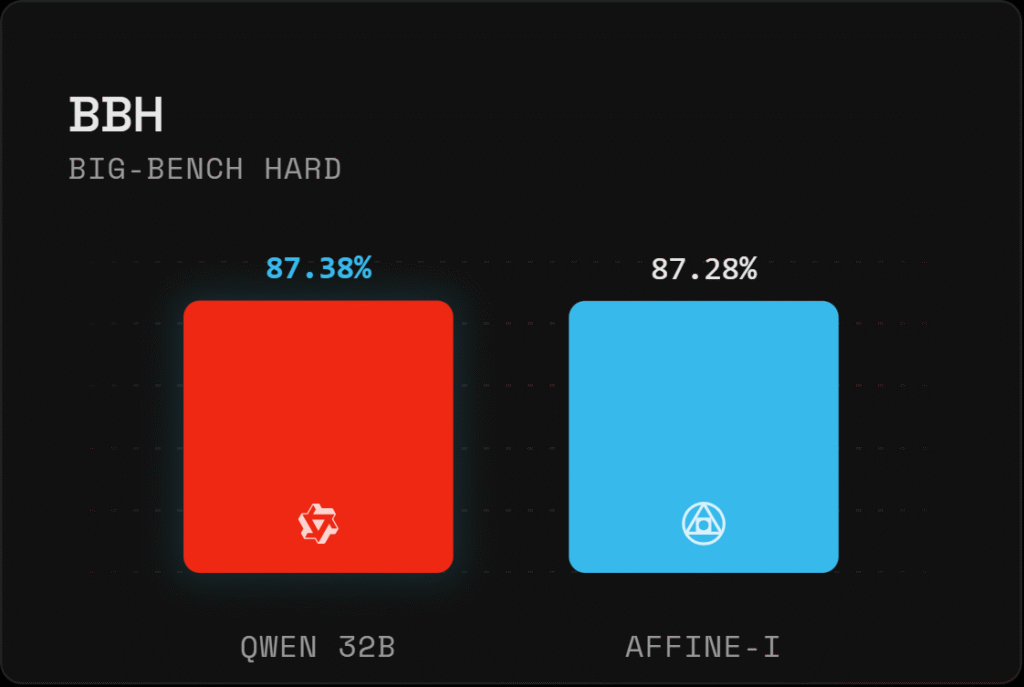

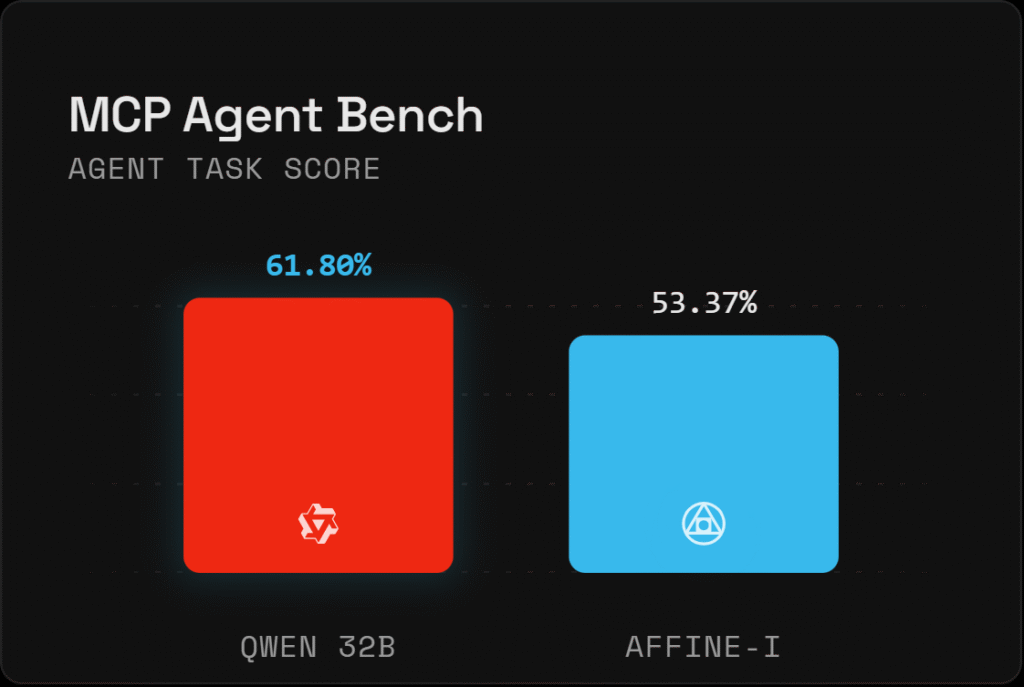

Qwen3-32B leads on BBH and MCP Agent Bench, both flagged as directional snapshot benchmarks not yet suitable for strong leaderboard claims.

On the stable benchmarks where the results carry full weight, Affine-I is ahead.

Why SWE-Rebench Is the Number Worth Focusing On

Most benchmark results carry an asterisk once you know how they work. Models trained on large internet datasets have often seen the test cases, making high scores achievable through memorization rather than genuine problem-solving. SWE-Rebench was built specifically to close that gap, using a continuously refreshed task supply that makes data contamination structurally impossible.

Affine-I scoring 5.26% against Qwen3-32B’s 0.00% on this benchmark is not a narrow win on a technicality. It is evidence that the competitive mining environment on Affine is producing real software engineering capability that did not exist in the base model before the competition began. A five-point lead on a contamination-resistant benchmark, after one week, is the kind of result that commands serious attention.

The Models That Built the Foundation

Before Affine-I, there was an earlier four-model evaluation run on April 7th covering Axon1 M19, Leary CX, and Leary CS against the Qwen3-32B-TEE baseline. Those results established the trajectory that Affine-I is now extending:

a. Leary CX led on complex long-context and agentic workloads, posting the best accuracy on BrowseComp-ZH and the strongest F1 on MemoryAgentBench,

b. Leary CS dominated tool-use, leading on task completion and tool selection while running efficient query times,

c. Axon1 M19 was competitive on long-context memory and posted the highest ROUGE-L recall, though less consistent on tool-use tasks, and

d. Qwen3-32B-TEE held a respectable baseline on Chinese retrieval and tool-call correctness but trailed on every complex agentic workload in the suite.

Each of those models was a stepping stone. Affine-I is where that progression currently stands, and it is explicitly described by the team as just the opening move.

Conclusion

Affine-II and Affine-III are already in development, each targeting a different agentic workload, and the competitive environment that produced Affine-I in a single week does not pause between iterations. What these benchmarks demonstrate is straightforward: open, incentivized model competition on Bittensor is producing measurable, contamination-resistant gains on tasks that matter for real-world deployment, on a timeline that closed development pipelines cannot replicate. Every new model that tops the leaderboard raises the floor for the next one. The arena does not slow down, and based on what one week just produced, that is exactly the point.

Enjoyed this article? Join our newsletter

Get the latest TAO & Bittensor news straight to your inbox.

We respect your privacy. Unsubscribe anytime.

Be the first to comment