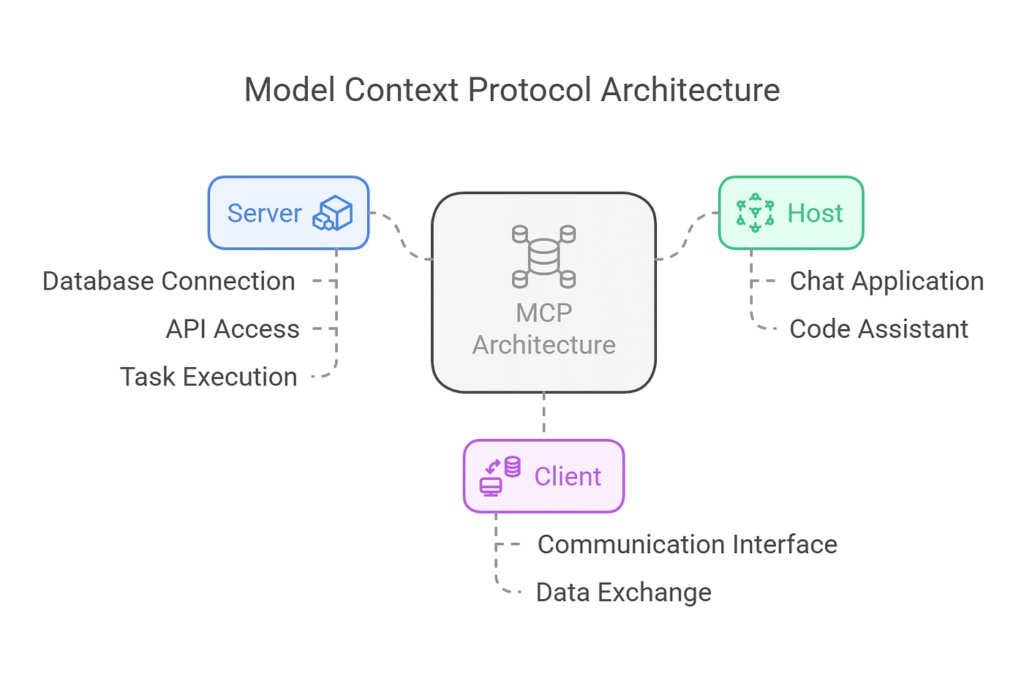

AI (Artificial Intelligence) is rapidly evolving from static models into autonomous systems capable of interacting with real-world tools and data. But for AI to function beyond simple text generation, it needs structured infrastructure, like secure pathways to access databases, APIs (Application Programming Interfaces), execution environments, and external services.

This is the gap SOMA (Bittensor Subnet 114) is designed to address.

Operating within the ecosystem of Bittensor ($TAO), SOMA introduces Model Context Protocol (MCP) servers that allow AI systems to interact with external tools in a standardized and secure way.

Rather than exposing only raw AI models, the subnet focuses on delivering usable AI capabilities (tools, agents, and workflows) that developers and organizations can integrate directly into real applications.

The Infrastructure Gap in Modern AI

Most AI infrastructure today focuses on training and deploying models. However, in practical environments, AI systems need the ability to perform actions, retrieve data, and operate within software ecosystems.

This is where MCP infrastructure becomes important.

MCP servers enable AI systems to:

a. Retrieve structured data from databases,

b. Communicate with APIs and external services,

c. Execute commands in controlled environments, and

d. Integrate seamlessly with enterprise software stacks.

By introducing MCP infrastructure into the Bittensor ecosystem, SOMA enables AI models to transition from passive reasoning systems into operational agents capable of executing tasks.
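To make the idea of a standardized tool interface concrete, here is a minimal sketch of how a server might dispatch a tool call. It is not SOMA's implementation and does not use any official MCP SDK. It assumes only that requests arrive as JSON-RPC 2.0 messages with a `tools/call` method, as in the MCP specification. The registry, the `lookup_user` tool, and the simplified result shape are all hypothetical.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical in-memory tool registry; names are illustrative only.
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(name: str):
    """Register a function as a callable tool under `name`."""
    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[name] = fn
        return fn
    return decorator


@tool("lookup_user")
def lookup_user(user_id: int) -> dict:
    # Stand-in for a real database query (assumption for illustration).
    fake_db = {1: {"name": "Ada"}, 2: {"name": "Linus"}}
    return fake_db.get(user_id, {})


def _error(req_id: Any, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})


def handle_request(raw: str) -> str:
    """Dispatch a JSON-RPC 2.0 style `tools/call` request to a registered tool."""
    req = json.loads(raw)
    req_id = req.get("id")
    if req.get("method") != "tools/call":
        return _error(req_id, -32601, "Method not found")
    params = req.get("params", {})
    fn = TOOLS.get(params.get("name"))
    if fn is None:
        return _error(req_id, -32602, "Unknown tool")
    # Simplified: a real MCP server wraps results in a structured content list.
    result = fn(**params.get("arguments", {}))
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


request = json.dumps({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "lookup_user", "arguments": {"user_id": 1}},
})
print(handle_request(request))
```

The point of the sketch is the uniform entry point: an agent does not need to know how each tool is implemented, only its name and arguments.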

SOMA: The Vision

SOMA is built on Bittensor Subnet 114 as a decentralized network of production-grade MCP servers that extend AI capabilities across the Bittensor ecosystem and beyond.

The subnet is designed with four core objectives:

a. Extend AI functionality through standardized tool interfaces,

b. Enable seamless integrations across systems, APIs, and data sources,

c. Support developers, enterprises, and other subnets with reliable infrastructure, and

d. Improve continuously through competitive performance incentives.

In the long term, the goal is to build a shared infrastructure layer where developers can access high-performance AI tools and services maintained by a decentralized network of contributors.

How SOMA Works

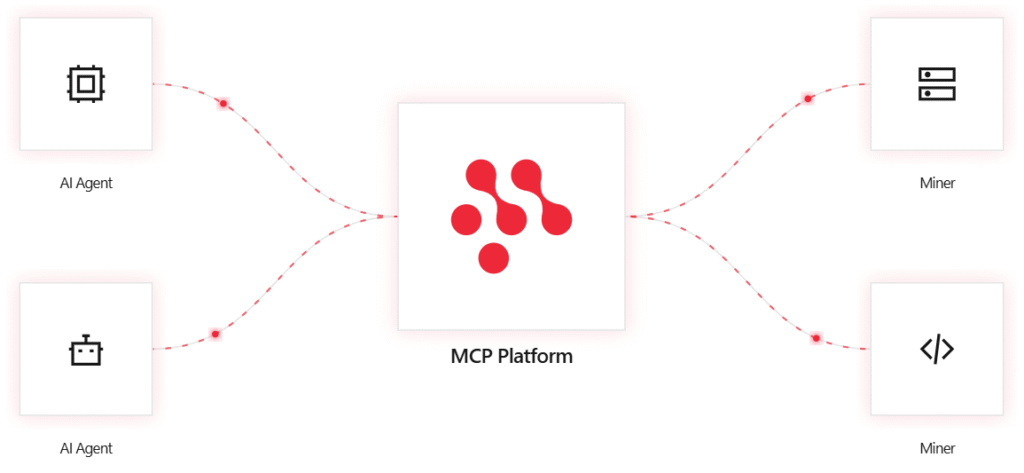

The subnet operates through a coordinated architecture that connects infrastructure orchestration, AI agents, miners, and validators into a single incentive-driven system.

Each layer plays a distinct role in ensuring the network delivers reliable AI tooling.

1. Platform Layer

The platform orchestration layer acts as the operational backbone of the subnet and manages several core functions:

a. Hosting and maintaining MCP services

b. Registering and organizing miner-submitted algorithms

c. Coordinating validation tasks across the network

d. Monitoring performance metrics in real time

e. Calculating final rankings based on validator evaluations

By managing orchestration and analytics, the platform ensures that every algorithm submitted by miners can be tested, evaluated, and ranked transparently.

2. AI Agent Layer

A key innovation within SOMA is the integration of AI agents that actively utilize MCP services. These agents function as operational interfaces between AI models and external tools.

Through SOMA’s MCP servers, AI agents can:

a. Retrieve information from data sources,

b. Trigger API calls and automated workflows,

c. Interact with enterprise systems, and

d. Execute structured tasks across connected services.

This layer effectively transforms AI from a passive reasoning engine into an autonomous problem-solving system capable of acting within real digital environments.

3. Validators

Validators serve as the quality control layer of the subnet. They evaluate miner-submitted algorithms and ensure the best-performing solutions are rewarded.

Validators accomplish this by:

a. Retrieving execution results from the platform

b. Testing solution performance according to competition criteria

c. Submitting evaluation scores used to calculate miner rankings

These assessments ultimately determine how rewards are distributed across the network.
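The article does not specify how validator scores are combined, so the following is only a sketch under a stated assumption: each miner's final score is the plain mean of the scores submitted by validators. The function name and the tuple layout are hypothetical.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List, Tuple


def rank_miners(evaluations: List[Tuple[str, str, float]]) -> List[Tuple[str, float]]:
    """Rank miners by mean validator score, highest first.

    Each evaluation is (validator_id, miner_hotkey, score).
    Averaging is an assumption; the real aggregation rule may differ.
    """
    by_miner: Dict[str, List[float]] = defaultdict(list)
    for _validator, hotkey, score in evaluations:
        by_miner[hotkey].append(score)
    averaged = {hotkey: mean(scores) for hotkey, scores in by_miner.items()}
    # Sort by descending score; break ties by hotkey for a stable order.
    return sorted(averaged.items(), key=lambda kv: (-kv[1], kv[0]))


evaluations = [
    ("validator-1", "miner-a", 0.8),
    ("validator-2", "miner-a", 0.6),
    ("validator-1", "miner-b", 0.9),
    ("validator-2", "miner-b", 0.7),
]
for hotkey, score in rank_miners(evaluations):
    print(f"{hotkey}: {score:.2f}")
```

A production system would likely also weight validators or discard outliers, but those details are not described in the source.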

4. Miners

Miners are responsible for building the algorithms and models that power MCP services. On SOMA, any challenge that can improve the performance of AI agents through MCP tooling can become a competition target.

To participate, miners need only:

a. An algorithm capable of solving the current MCP task, and

b. A registered hotkey on Netuid 114.

Once a solution is submitted, the platform automatically distributes it to validators for evaluation. The process ensures that the most effective algorithm (not the best-funded team) wins the competition.

How the Incentive Mechanism Moves

SOMA operates on a recurring weekly competition cycle. During each cycle, miners submit algorithms designed to solve the active MCP challenge, validators evaluate those solutions using defined performance metrics, and a leaderboard ranks participants by their aggregated scores.

Each miner may submit one algorithm per hotkey per cycle, encouraging focused innovation. The incentive system prioritizes solutions that demonstrate:

a. Strong algorithmic performance,

b. Robust engineering practices,

c. Scalable and generalizable approaches, and

d. Meaningful technical innovation.
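The one-submission-per-hotkey-per-cycle rule described above could be enforced with a simple keyed registry. This is a hypothetical sketch: the class, the integer cycle number, and the policy of rejecting (rather than replacing) a second submission are assumptions, not documented SOMA behavior.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass
class SubmissionRegistry:
    """Tracks one algorithm per (hotkey, cycle) pair."""
    _seen: Dict[Tuple[str, int], str] = field(default_factory=dict)

    def submit(self, hotkey: str, cycle: int, algorithm_id: str) -> bool:
        """Record a submission; return False if this hotkey already submitted this cycle."""
        key = (hotkey, cycle)
        if key in self._seen:
            return False  # Assumed policy: reject duplicates rather than overwrite.
        self._seen[key] = algorithm_id
        return True


registry = SubmissionRegistry()
print(registry.submit("hotkey-1", cycle=1, algorithm_id="algo-v1"))  # first submission
print(registry.submit("hotkey-1", cycle=1, algorithm_id="algo-v2"))  # same cycle, rejected
print(registry.submit("hotkey-1", cycle=2, algorithm_id="algo-v2"))  # new cycle, accepted
```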

Reward Distribution

The subnet uses a winner-takes-all reward model, and at the end of each weekly competition:

a. The top-performing miner is declared the winner,

b. The winner receives the subnet’s incentive allocation, and

c. A new competition cycle begins immediately.

This structure ensures rapid iteration across the network, constant pressure to improve solutions, protection against stagnant dominance, and predictable incentive cycles for participants.
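A winner-takes-all allocation can be expressed in a few lines. The sketch below assumes the final leaderboard is a mapping from hotkey to score and that ties are broken alphabetically by hotkey. Both the data shape and the tie-break rule are assumptions for illustration, since the article does not describe them.

```python
from typing import Dict


def winner_takes_all(scores: Dict[str, float]) -> Dict[str, float]:
    """Give the full reward share (1.0) to the top scorer and 0.0 to everyone else."""
    if not scores:
        return {}
    # Highest score wins; ties go to the alphabetically first hotkey (assumed rule).
    winner = min(scores, key=lambda hotkey: (-scores[hotkey], hotkey))
    return {hotkey: (1.0 if hotkey == winner else 0.0) for hotkey in scores}


leaderboard = {"miner-a": 0.70, "miner-b": 0.82, "miner-c": 0.79}
print(winner_takes_all(leaderboard))
```

Because the allocation is recomputed from scratch each cycle, a previous winner holds no advantage beyond the quality of its next submission.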

A Decentralized Approach to AI Optimization

Unlike traditional AI infrastructure (often built around fixed internal teams), SOMA relies on open competition between independent contributors.

This model produces several advantages:

a. Continuous Improvement: Each competition cycle allows new participants to outperform previous solutions,

b. Stable Production Environments: Innovation happens in the competitive layer while production services remain reliable, and

c. Scalable Infrastructure: Decentralized participation allows capacity to expand as demand grows.

Together, these characteristics allow the subnet to function as a dynamic marketplace for AI tooling infrastructure.

The Company Behind SOMA

SOMA is developed by Dendrite Holdings, an engineering-focused organization dedicated to building infrastructure within the Bittensor ecosystem.

Registered in Limassol, Cyprus as Dendrite Quantum LTD, the company operates as a technology holding group with teams working directly on decentralized AI development.

Today, the organization has 50+ engineers and mathematicians, teams focused on mining infrastructure and validator systems, and a long-term presence in multiple Bittensor subnets.

The company began operating within the Bittensor ecosystem in September 2022, well before the protocol gained broader industry attention.

Since then, its mining operations have generated more than 150,000 $TAO, much of which the organization states has been reinvested into network development.

SOMA represents the culmination of several years of technical work inside the ecosystem.

Why SOMA Matters for the Bittensor Ecosystem

As decentralized AI networks evolve, the focus is shifting from model experimentation to practical infrastructure. For AI systems to operate effectively in real-world environments, they must be able to interact with external services, access real-time data, and execute automated workflows.

By introducing MCP servers and agent-based execution layers into Bittensor, SOMA attempts to provide the infrastructure necessary for AI systems to operate beyond simple model outputs.

The Next Layer of Decentralized AI

The next phase of decentralized AI will likely be defined by how effectively models can interact with the real world. Networks that enable AI agents to act, rather than simply respond, may ultimately become foundational infrastructure for the ecosystem.

With its combination of MCP servers, competitive incentives, and decentralized participation, SOMA represents an early attempt to build that layer within Bittensor.

Whether it becomes a core component of the ecosystem will depend on adoption and performance, but its underlying premise is that the future of decentralized AI may belong not just to better models, but to better systems for connecting those models to the world around them.
