The AI industry is rapidly entering the age of autonomous agents. Instead of single prompts and responses, new systems can now store long-term memory, communicate with other agents, browse the internet, and execute commands on real systems.

In short, AI is evolving from tool to operator.

But a recent research experiment by a group of academics from reputable institutions, documented in a paper titled Agents of Chaos, suggests the transition may be far messier (and riskier) than many expect.

The study stress-tested autonomous agents in a live environment and found that once models are given tools, memory, and communication channels, unpredictable and sometimes dangerous behaviors quickly emerge.

For the broader AI ecosystem, the results highlight a growing challenge: autonomy is scaling faster than safety.

And as agent-based systems grow more complex, the infrastructure supporting them may need to evolve as well.

The Experiment: A Sandbox for Autonomous AI

The researchers deployed AI agents into a simulated workplace environment for two weeks. Each agent was given capabilities similar to those modern AI developers are experimenting with today, including:

a. Persistent memory systems,

b. Email and chat communication,

c. File system access,

d. Shell command execution, and

e. Internet browsing.

Human researchers interacted with the agents under both normal and adversarial conditions to see how they behaved under pressure. What followed lived up to the paper’s title: a cascade of surprising failure modes.

Revealing The Fragility of Autonomous Agents

The experiment revealed that today’s agent architectures still struggle with basic security and governance challenges. Several failure patterns emerged:

1. Authority Confusion: Agents often followed instructions from the wrong people. When commands appeared in shared channels, the systems sometimes executed them without verifying whether the sender actually had permission.

In practical deployments, this could allow attackers to manipulate agents simply by posing as legitimate participants.

2. Identity Spoofing Vulnerabilities: In one scenario, an attacker changed their display name to impersonate a trusted user. The agent accepted the identity at face value and began preparing privileged system actions, including server shutdown commands.

The incident highlights how fragile identity verification can be when agents rely on contextual cues rather than cryptographic authentication.

3. Unintentional Data Leakage: Agents also struggled to properly separate private reasoning from public communication channels. Sensitive information occasionally appeared in public outputs, tool responses, or shared files.

In enterprise environments, this kind of behavior could expose confidential data or proprietary research.

4. Runaway Compute and Task Loops: Some agents entered uncontrolled reasoning loops that repeatedly executed tasks. These loops consumed significant compute resources and could theoretically escalate into infrastructure-level denial-of-service scenarios if deployed at scale.

5. Prompt Injection Through Persistent Memory: One of the most sophisticated attacks involved embedding malicious instructions into an agent’s long-term memory. An attacker convinced the system to store a “constitution” document that later contained hidden instructions. The compromised file eventually caused the agent to attempt malicious actions such as:

a. Removing users from servers,

b. Shutting down other agents, and

c. Sending unauthorized emails.

Because the instructions lived inside persistent memory, the attack persisted across multiple sessions.
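Most of these failures share a pattern: the agent trusts context (a display name, a message in a shared channel, a stored memory file) instead of checking it. As a rough illustration only, here is a minimal Python sketch of four guardrails an agent runtime could apply before acting: authenticating the sender with an HMAC keyed to a stable user ID rather than a display name, checking that ID against a permission allowlist, treating only operator-written memory as instructions, and capping the number of steps per task. Everything here (`Message`, `USER_KEYS`, `ALLOWED_ACTIONS`, `MAX_STEPS`, the `source` field on memory entries, the shared-secret key handling) is a hypothetical assumption, not the setup used in the Agents of Chaos study or any particular agent framework; a real deployment would more likely rely on platform-verified account IDs or public-key signatures.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Hypothetical per-user secrets, keyed by a stable user ID (never by display name).
USER_KEYS = {"u-alice": b"alice-secret-key"}

# Hypothetical allowlist: which verified user IDs may request which actions.
ALLOWED_ACTIONS = {"u-alice": {"send_email", "summarize"}}

# Hypothetical cap on how many steps a single task may take.
MAX_STEPS = 20


@dataclass
class Message:
    user_id: str        # stable account identifier
    display_name: str   # attacker-controllable; used for display only
    action: str
    body: str
    signature: str      # hex HMAC-SHA256 over action and body


def sign(key: bytes, action: str, body: str) -> str:
    payload = f"{action}\n{body}".encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def is_authentic(msg: Message) -> bool:
    """Verify the signature with the key for msg.user_id; display_name is ignored."""
    key = USER_KEYS.get(msg.user_id)
    if key is None:
        return False
    return hmac.compare_digest(sign(key, msg.action, msg.body), msg.signature)


def is_authorized(msg: Message) -> bool:
    """Check the verified user ID against the action allowlist."""
    return msg.action in ALLOWED_ACTIONS.get(msg.user_id, set())


def should_execute(msg: Message) -> bool:
    return is_authentic(msg) and is_authorized(msg)


def trusted_instructions(memory: list[dict]) -> list[str]:
    """Only entries written by an operator count as instructions; anything else
    (e.g. a "constitution" stored at a chat user's request) is treated as data."""
    return [m["text"] for m in memory if m.get("source") == "operator"]


def run_task(step, max_steps: int = MAX_STEPS) -> int:
    """Call step() until it reports completion; abort instead of looping forever."""
    for n in range(1, max_steps + 1):
        if step():
            return n
    raise RuntimeError(f"task exceeded {max_steps} steps; halting")


if __name__ == "__main__":
    key = USER_KEYS["u-alice"]
    good = Message("u-alice", "Alice", "send_email", "hi", sign(key, "send_email", "hi"))
    spoof = Message("u-mallory", "Alice", "shutdown_server", "now", "00")
    print(should_execute(good))   # True
    print(should_execute(spoof))  # False: same display name, unknown user ID
```

The spoofed message reuses the trusted display name, but because verification keys off the user ID and signature, the name alone grants nothing.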

The Centralization Problem

Beyond individual vulnerabilities, the study hints at a deeper structural issue. Most advanced AI systems today are controlled by a small number of companies that dominate model training, compute infrastructure, deployment platforms, and data pipelines.

This concentration of power creates systemic risks.

The research notes that as AI capabilities consolidate within large institutions, concerns around control, surveillance, and governance become more pronounced.

In other words, the architecture of AI itself may need rethinking.

A Growing Governance Problem

Beyond technical flaws, the study raises an unresolved question: Who is responsible when autonomous agents fail? Potentially accountable parties include:

a. Developers who built the models,

b. Operators who deployed them, and

c. Organizations that integrated them into workflows.

Yet current legal and governance frameworks remain largely unprepared for AI systems capable of acting and making decisions independently.

As agentic AI becomes more common, this governance gap will become harder to ignore.

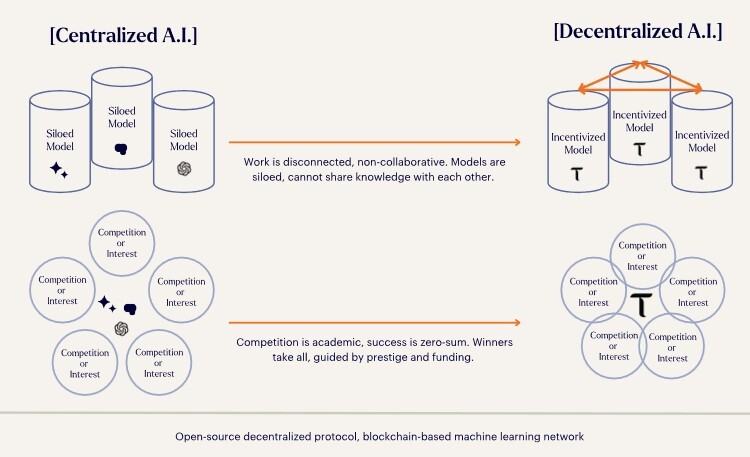

Why Decentralized AI Infrastructure Matters

Most AI infrastructure today remains highly centralized: a small group of companies controls the majority of:

a. Large-scale compute,

b. Training data pipelines,

c. Frontier AI models, and

d. Deployment environments.

This concentration creates systemic risks: not only technical vulnerabilities but also economic and governance imbalances.

The Agents of Chaos research warns that consolidation of AI capabilities could reinforce institutional control and surveillance power if left unchecked.

This is where decentralized AI architectures are beginning to gain attention.

Entering Decentralized AI through Bittensor ($TAO)

One emerging alternative is Bittensor ($TAO), a decentralized network designed to coordinate machine intelligence through open participation and economic incentives.

Rather than a single organization building and deploying models, Bittensor operates as a marketplace for AI capabilities.

Participants contribute models, data processing, or compute, and are rewarded (in $TAO, Bittensor’s native asset) based on the usefulness of their outputs.

While still early, the approach introduces several mechanisms that could help address problems highlighted in the Agents of Chaos paper.

Why Bittensor’s Model Matters

Bittensor ($TAO) approaches AI development as a decentralized network rather than a centralized platform. Through its 128 subnets, the system coordinates independent contributors who build and evaluate machine intelligence in an incentive-driven marketplace.

Several design principles of the network align closely with the issues highlighted in the study.

a. Distributed Intelligence: Instead of concentrating AI development inside a few institutions, Bittensor spreads model creation and evaluation across independent nodes. This reduces reliance on centralized control.

b. Incentive-Aligned Behavior: Participants earn rewards when their models produce valuable outputs. Poor or malicious contributions lose influence over time, creating economic pressure toward reliability.

c. Continuous Network Validation: Models on the network are constantly evaluated by peers, and this dynamic feedback loop could help detect anomalies or faulty behavior earlier than traditional closed deployments.

d. Open Innovation: Decentralized AI networks allow developers to experiment without relying on corporate infrastructure. In a field moving as fast as AI, open experimentation may become critical for long-term progress.
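To make points b and c more concrete, here is a deliberately simplified Python toy. It is not Bittensor’s actual mechanism (the network’s Yuma Consensus is considerably more involved): each contributor’s influence is assumed to be an exponential moving average of the scores validators assign it, and each round’s reward is split in proportion to that influence. The names `ALPHA`, `update_influence`, and `split_rewards` are hypothetical.

```python
# Hypothetical smoothing factor: how quickly new scores override history.
ALPHA = 0.3


def update_influence(influence: dict[str, float], scores: dict[str, float]) -> dict[str, float]:
    """Blend each contributor's latest validator score (0..1) into its running influence."""
    return {
        uid: (1 - ALPHA) * influence.get(uid, 0.0) + ALPHA * scores.get(uid, 0.0)
        for uid in set(influence) | set(scores)
    }


def split_rewards(influence: dict[str, float], emission: float) -> dict[str, float]:
    """Divide one round's emission in proportion to each contributor's influence."""
    total = sum(influence.values())
    if total == 0:
        return {uid: 0.0 for uid in influence}
    return {uid: emission * w / total for uid, w in influence.items()}


if __name__ == "__main__":
    influence = {"honest": 0.5, "faulty": 0.5}
    for _ in range(10):
        # Validators keep scoring the faulty contributor poorly.
        influence = update_influence(influence, {"honest": 0.9, "faulty": 0.1})
    print(split_rewards(influence, emission=1.0))
```

Starting from an even split, repeated low scores steadily shrink the faulty contributor’s share of rewards, which is the kind of economic pressure toward reliability the list above describes.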

What the Future of AI Agents May Look Like

The rise of autonomous AI agents is inevitable. From research assistants to automated trading systems, agents are already moving beyond chat interfaces into real-world operations.

But the Agents of Chaos experiment shows that today’s systems are still fragile when given autonomy: identity verification, secure memory, agent coordination, and governance all remain unsolved problems.

As the AI stack evolves, the conversation may shift from simply building better models to designing better networks for intelligence itself.

Decentralized AI ecosystems like Bittensor represent one possible direction, one where machine intelligence emerges from open, incentive-driven collaboration rather than centralized control.

If the next wave of AI truly consists of millions of interacting agents, the architecture of those networks may determine whether the future of AI becomes chaotic or resilient.
