For most of its existence, Bittensor has been analyzed through the lens of incentives. Discussions have focused on emissions, staking dynamics, validator behavior, and the broader theory of decentralized intelligence. While those elements matter, they miss the factor that ultimately determines whether any network sustains long-term value: revenue.

That means external users paying for products that solve real problems.

Across multiple subnets, Bittensor is beginning to produce something far more important to the ecosystem – independent, revenue-generating businesses operating on top of a shared incentive layer.

What follows is a breakdown of some of the subnets that are already selling, scaling, and competing in real markets.

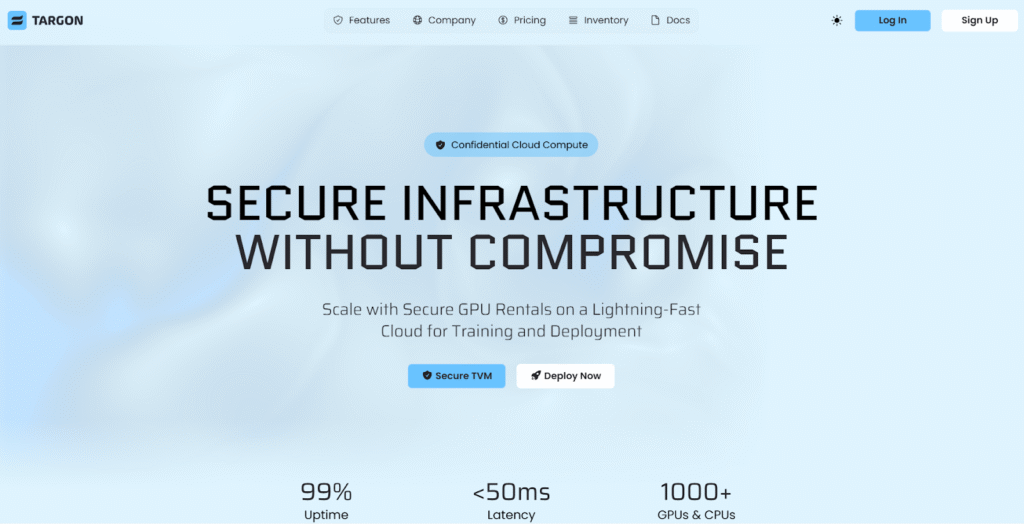

a. Targon (Subnet 4): Confidential Compute as a First-Class Primitive

Targon is positioning itself at one of the most critical points in the AI stack: secure, high-performance compute that can be trusted even in untrusted environments.

While many decentralized compute platforms focus on cost or access, Targon differentiates itself by addressing a deeper requirement: verifiable execution.

Targon operates as a full-stack cloud environment, designed to mirror the usability of traditional providers while introducing stronger guarantees around security and integrity.

Its offering includes:

a. Dedicated GPU and CPU rentals for training and inference,

b. Serverless execution for deploying models without infrastructure overhead,

c. Developer tooling through Python SDKs (Software Development Kits) and a CLI (Command Line Interface), and

d. Rapid onboarding with minimal configuration friction.

Structural Advantage

What makes Targon distinct is the environment in which workloads run.

a. Confidential computing powered by NVIDIA hardware,

b. Hardware-backed isolation using Intel TDX and AMD SEV, and

c. A virtual machine layer that enables attested, verifiable execution.

This allows sensitive workloads, including proprietary models and private datasets, to be processed without exposing underlying data or relying on trusted intermediaries.

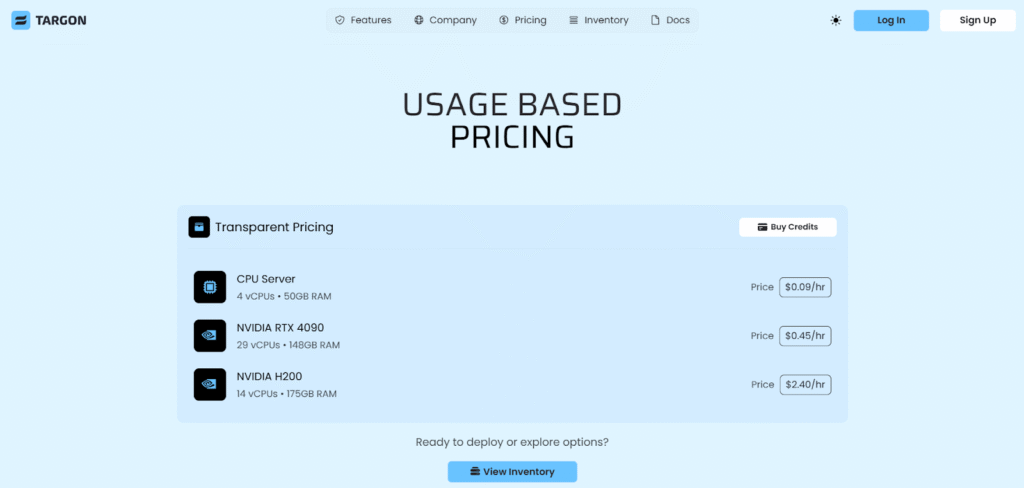

Commercial Model

Targon follows a straightforward usage-based pricing structure:

a. CPU instances starting around $0.09 per hour,

b. RTX 4090 GPU instances at approximately $0.45 per hour, and

c. High-end H200 compute at roughly $2.40 per hour.

The combination of competitive pricing and verifiable infrastructure positions Targon as a credible alternative to traditional cloud providers, particularly for AI workloads where trust is non-negotiable.
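To make the usage-based model concrete, here is a minimal sketch of the arithmetic, assuming the approximate hourly rates quoted above. The names `HOURLY_RATE_USD` and `estimate_cost` are illustrative only and are not part of any Targon SDK; actual billing may differ.

```python
# Approximate hourly rates quoted in this article (USD per hour).
# These are assumptions for illustration, not an official price list.
HOURLY_RATE_USD = {
    "cpu": 0.09,
    "rtx4090": 0.45,
    "h200": 2.40,
}


def estimate_cost(instance: str, hours: float, count: int = 1) -> float:
    """Estimate the usage-based cost in USD, rounded to cents."""
    if hours < 0 or count < 1:
        raise ValueError("hours must be >= 0 and count must be >= 1")
    return round(HOURLY_RATE_USD[instance] * hours * count, 2)


# Example: one H200 instance running for a 30-day month (720 hours).
print(estimate_cost("h200", 24 * 30))
```

Under these assumed rates, a single H200 running around the clock for 30 days comes to roughly $1,728, which is the kind of figure a team would compare against a traditional cloud quote.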

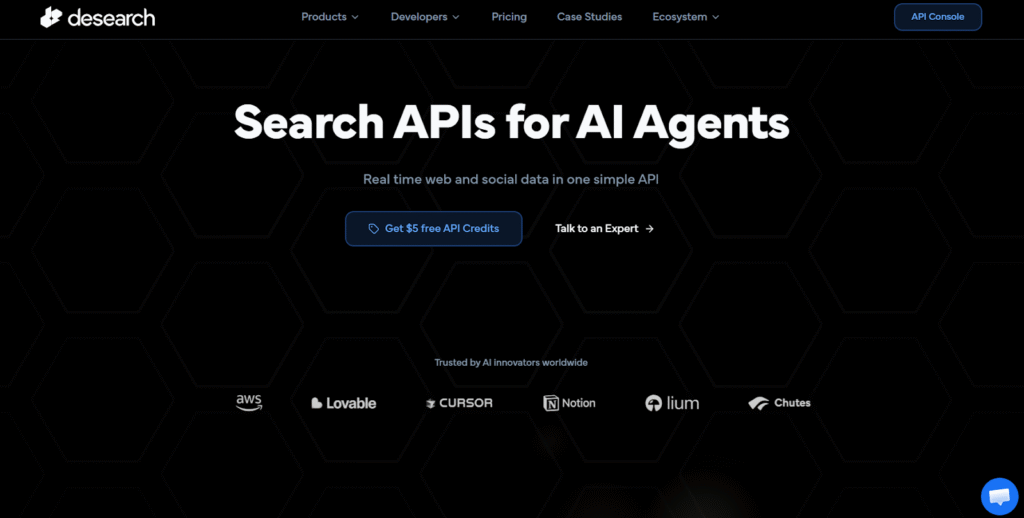

b. Desearch (Subnet 22): Real-Time Data Infrastructure for AI Systems

As AI agents move from experimentation into production, one constraint becomes immediately clear: access to fresh, structured, and reliable data.

Desearch addresses this directly by offering a unified API layer for real-time search across multiple sources.

Desearch consolidates several essential data primitives into a single developer interface:

a. Web search with structured, machine-readable outputs,

b. Social data access, including real-time Twitter streams,

c. Web crawling with full-page extraction and metadata parsing, and

d. AI-optimized search that aggregates across multiple sources.

Why It Gains Adoption

The platform is designed for developers building production-grade systems:

a. High rate limits and low latency suitable for live applications,

b. Native integrations with tools such as LangChain, n8n, and CrewAI, and

c. Real-time observability through logs, usage tracking, and debugging tools.

This makes Desearch particularly valuable for AI agents that depend on timely, contextual information rather than static datasets.

Commercial Model

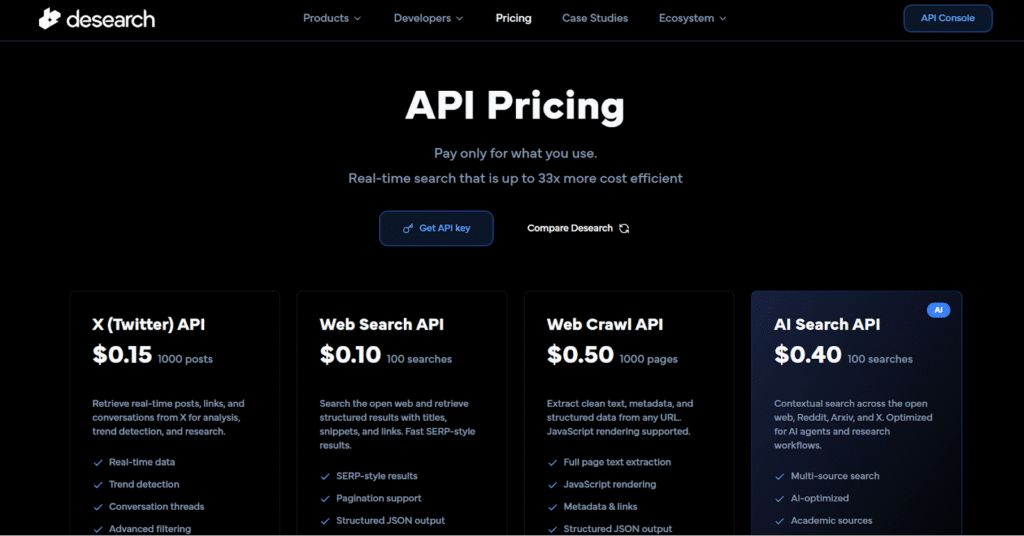

Desearch operates on a usage-based API pricing structure:

a. Social data queries from $0.15 per 1,000 results,

b. Web search queries from $0.10 per 100 requests, and

c. AI-aggregated search from $0.40 per 100 requests.

Its positioning as a cost-efficient alternative to legacy APIs makes it attractive for teams scaling data-intensive workflows.
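As a rough illustration of how these per-unit prices add up, the sketch below estimates a monthly bill from the "from" prices listed above. The `PRICING` table and `monthly_cost` function are hypothetical helpers, not part of the Desearch API, and the usage figures are made up for the example.

```python
from typing import Mapping

# "From" prices quoted in this article: (price in USD, units covered).
# Assumed for illustration; real plans and tiers may differ.
PRICING = {
    "social": (0.15, 1000),    # per 1,000 results
    "web": (0.10, 100),        # per 100 requests
    "ai_search": (0.40, 100),  # per 100 requests
}


def monthly_cost(usage: Mapping[str, int]) -> float:
    """Sum the cost of each query type in USD, rounded to cents."""
    total = 0.0
    for kind, volume in usage.items():
        price, units = PRICING[kind]
        total += price * volume / units
    return round(total, 2)


# Hypothetical month: 100k social results, 10k web and 5k AI searches.
print(monthly_cost({"social": 100_000, "web": 10_000, "ai_search": 5_000}))
```

With these assumed volumes, the social, web, and AI-aggregated components contribute about $15, $10, and $20 respectively.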

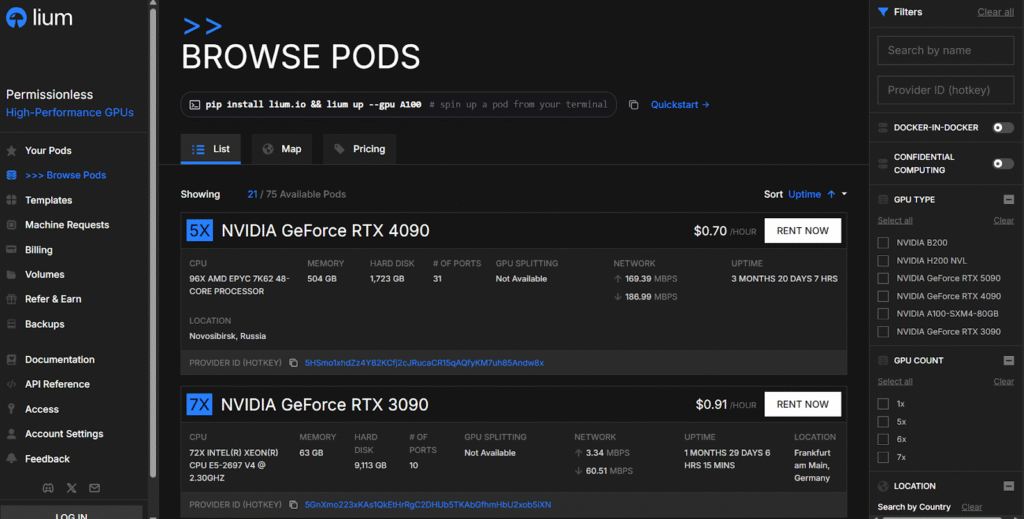

c. Lium (Subnet 51): A Global, Permissionless Compute Market

Lium represents a different approach to cloud infrastructure by transforming idle hardware into a globally distributed compute marketplace.

Rather than building centralized capacity, it aggregates supply from independent contributors and exposes it as on-demand infrastructure.

Through its platform, users can:

a. Access a wide range of GPUs, including A100s, H100s, and alternative accelerators,

b. Deploy workloads instantly through a web interface, and

c. Participate without traditional onboarding constraints, including KYC.

Market Position

Lium’s advantage comes from its economic model:

a. Lower costs driven by global supply aggregation,

b. Higher utilization through monetization of idle resources, and

c. Open participation that expands supply dynamically.

This creates a system where compute is not just cheaper, but structurally more efficient.

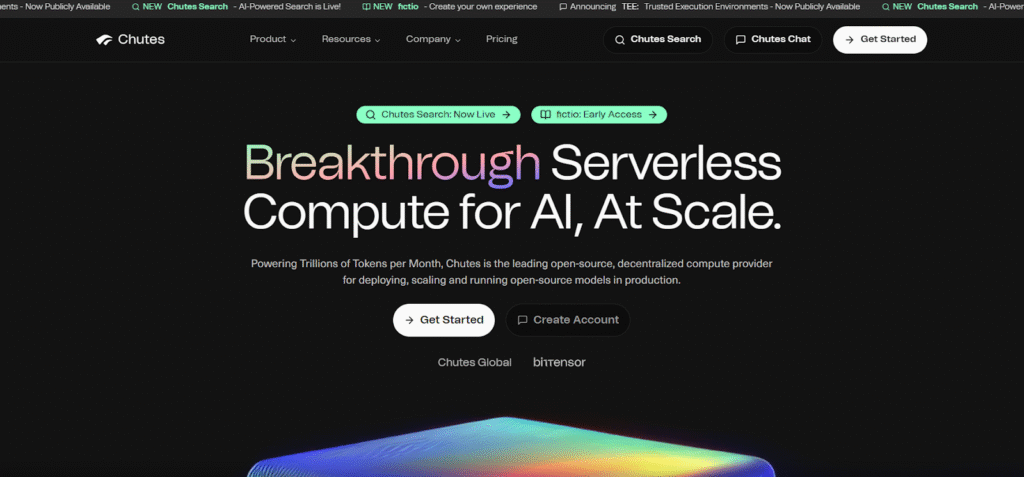

d. Chutes (Subnet 64): Serverless AI Inference With Production Throughput

Chutes is one of the clearest examples of a subnet moving beyond infrastructure into high-volume, revenue-generating product execution.

It provides a serverless platform for AI inference, allowing developers to deploy and scale models without managing infrastructure.

Chutes abstracts that complexity behind a simple interface, offering:

a. Instant deployment of AI models across modalities,

b. API-based access for seamless integration, and

c. Compatibility with model aggregators such as OpenRouter.

Execution Strength

What differentiates Chutes is its operational scale:

a. Designed for high-throughput inference workloads,

b. Continuous integration of state-of-the-art open-source models, and

c. Infrastructure capable of processing trillions of tokens monthly.

This positions it as a direct competitor to centralized AI APIs, with a more flexible and open architecture.

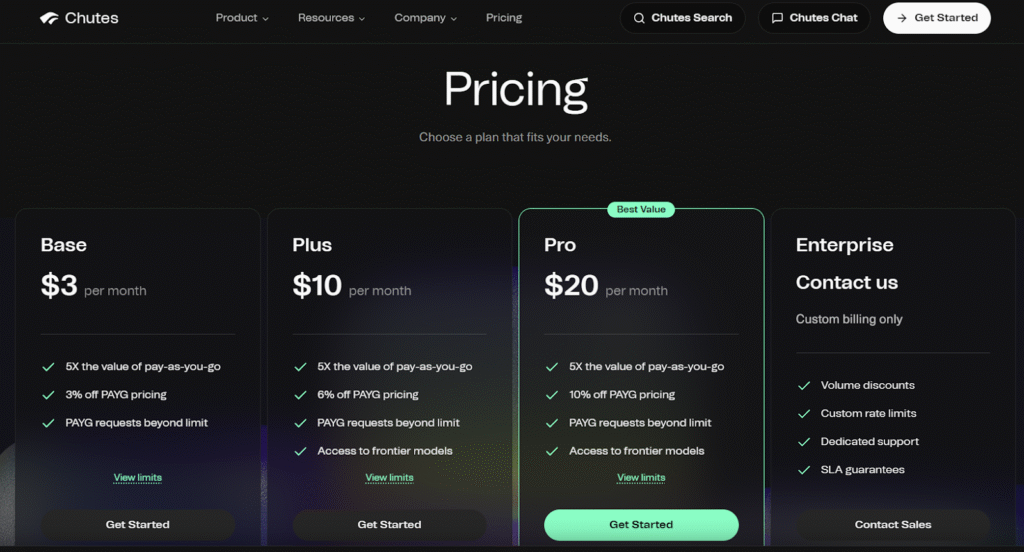

Commercial Model

Chutes combines subscription and usage pricing:

a. Entry tiers starting at $3 per month,

b. Incremental discounts on usage-based pricing, and

c. Enterprise configurations with custom scaling and SLAs.

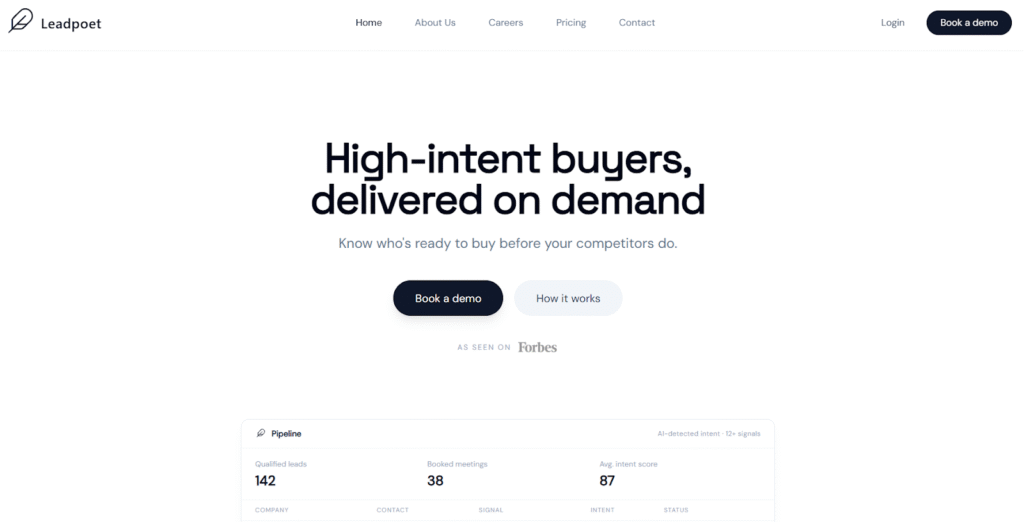

e. Leadpoet (Subnet 71): AI-Driven Sales Intelligence

Leadpoet moves further up the value chain by focusing not on infrastructure, but on business outcomes. Its core function is simple in concept but complex in execution: identifying and delivering high-intent customers who are ready to buy.

Leadpoet deploys autonomous AI agents to:

a. Monitor real-time signals such as hiring, funding, and behavioral data,

b. Identify prospects that match specific customer profiles, and

c. Score and validate leads before delivery.

Business Impact

The platform replaces traditional lead generation with a more precise model:

a. Focus on conversion probability rather than volume,

b. Continuous prospect discovery without manual effort, and

c. Integration into existing sales workflows.

This transforms sales from a reactive process into a predictive, data-driven system.

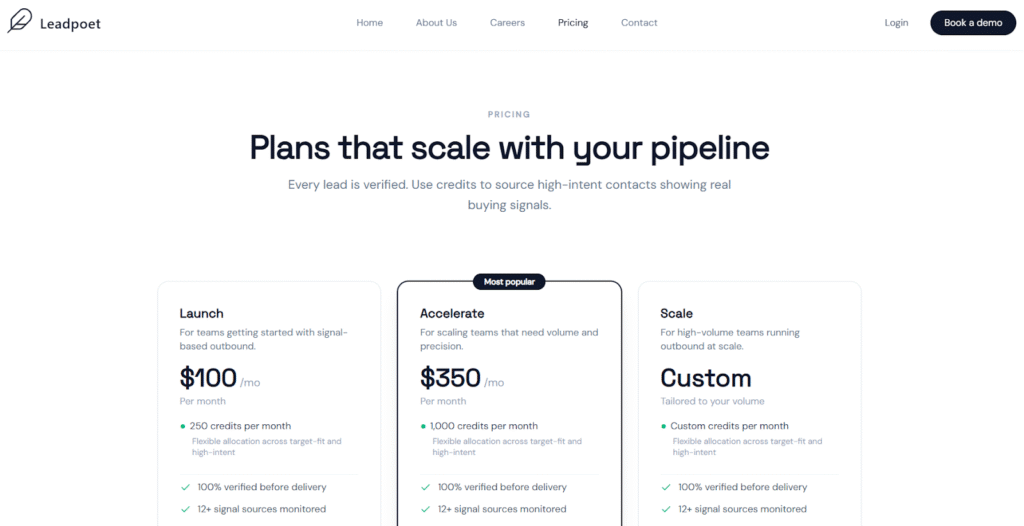

Commercial Model

Leadpoet operates on a subscription and credit basis:

a. Entry-level plans starting at $100 per month,

b. Scalable tiers for higher volume prospecting, and

c. Enterprise configurations with dedicated support and integrations.

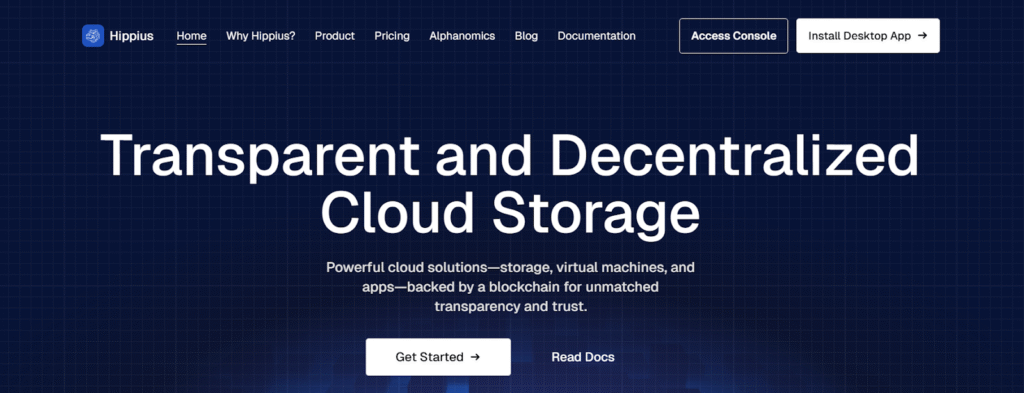

f. Hippius (Subnet 75): Persistent, Decentralized Storage

As compute and AI workloads scale, storage becomes a foundational requirement rather than an afterthought.

Hippius addresses this by providing decentralized, verifiable storage infrastructure within the Bittensor ecosystem.

Hippius delivers:

a. Distributed file storage built on IPFS, and

b. S3-compatible object storage for application integration.

System Role

Its importance lies in what it enables:

a. Persistent storage for models, datasets, and outputs,

b. Reduced reliance on centralized storage providers, and

c. Transparent and censorship-resistant data management.

In effect, Hippius acts as the long-term memory layer for decentralized AI systems.

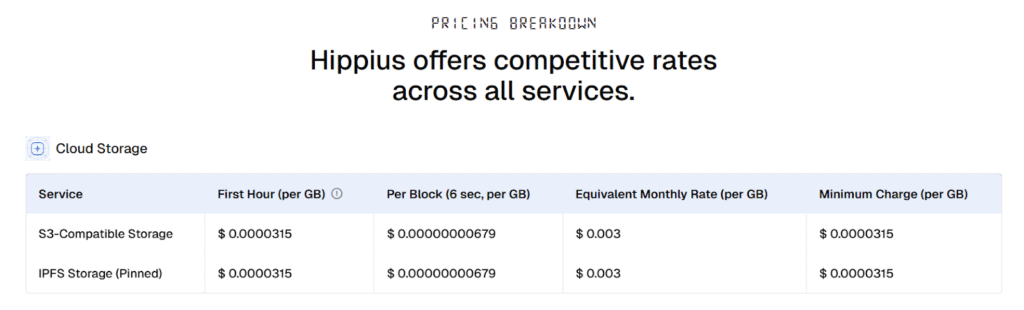

Commercial Model

Hippius uses a credit-based pricing system:

a. Entry plans around $3 per month for ~1 TB of storage,

b. Scalable tiers up to enterprise-level capacity, and

c. Automated credit systems for continuous usage.

From Incentives to Markets

What these Bittensor subnets demonstrate is economic validation. Each operates in a different segment of the stack:

a. Targon defines trusted compute,

b. Desearch delivers real-time data access,

c. Lium unlocks global compute supply,

d. Chutes executes AI inference at scale,

e. Leadpoet drives revenue through intelligence, and

f. Hippius secures persistent storage.

Individually, they are useful. Collectively, they represent a system in which decentralized AI infrastructure is justified not by incentives alone, but by its ability to compete, sell, and generate real economic value.

This is the inflection point.

Once users are willing to pay, the conversation shifts permanently from potential to performance. From that point forward, the only metric that matters is what actually works.
