As artificial intelligence continues to expand into more complex and high-stakes environments, the limitations of traditional infrastructure are becoming increasingly difficult to ignore.

AI systems today need more than raw compute power: they must also handle sensitive data, scale dynamically under unpredictable demand, and operate in environments where trust cannot be assumed. Centralized cloud providers have historically delivered performance and scalability, yet they introduce structural weaknesses around data exposure, opaque control, and limited participation.

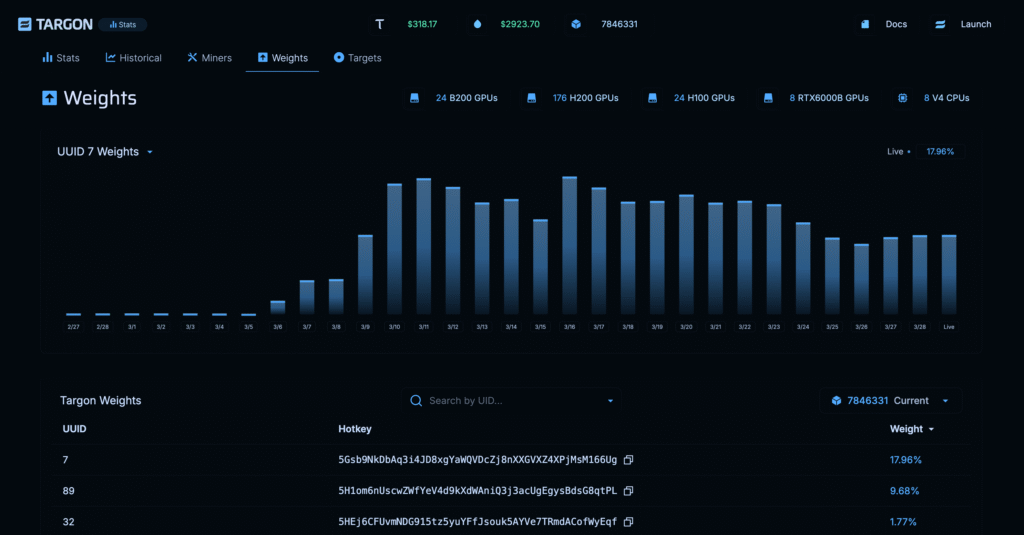

Targon, Bittensor’s Subnet 4, emerges as a response to these constraints by introducing a decentralized infrastructure layer where compute, confidentiality, and incentives are tightly integrated. Instead of treating these elements as separate concerns, Targon aligns them within a single system designed to support the next generation of AI applications.

What Targon Is

Targon is a decentralized cloud computing platform built within the Bittensor network, designed to provide secure and high-performance infrastructure for GPU-accelerated AI workloads while simultaneously functioning as a marketplace for AI-related digital commodities.

It enables developers and participants to:

a. Access high-performance compute resources without relying on centralized providers,

b. Deploy and scale AI models through serverless infrastructure,

c. Run workloads within hardware-protected confidential environments, and

d. Participate in a competitive, incentive-driven ecosystem.

At a higher level, Targon is not simply a compute provider: it also coordinates how AI services are produced, evaluated, and rewarded through decentralized market dynamics. This dual role allows it to function both as infrastructure and as an economic layer for AI.

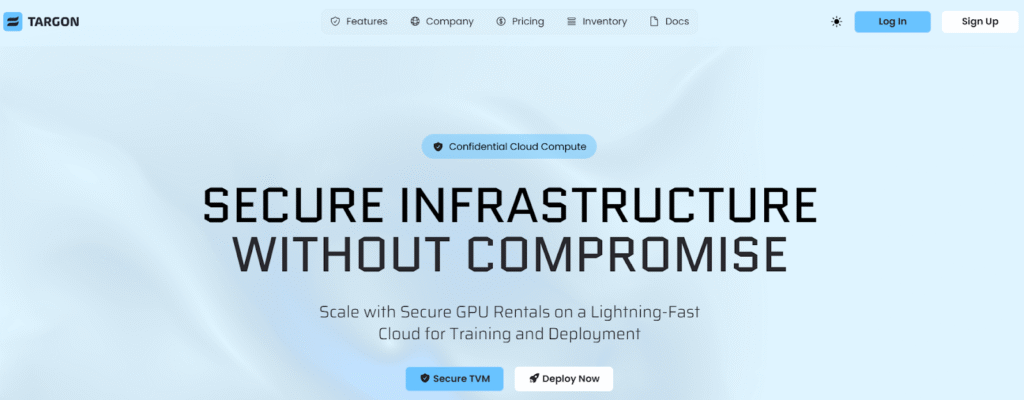

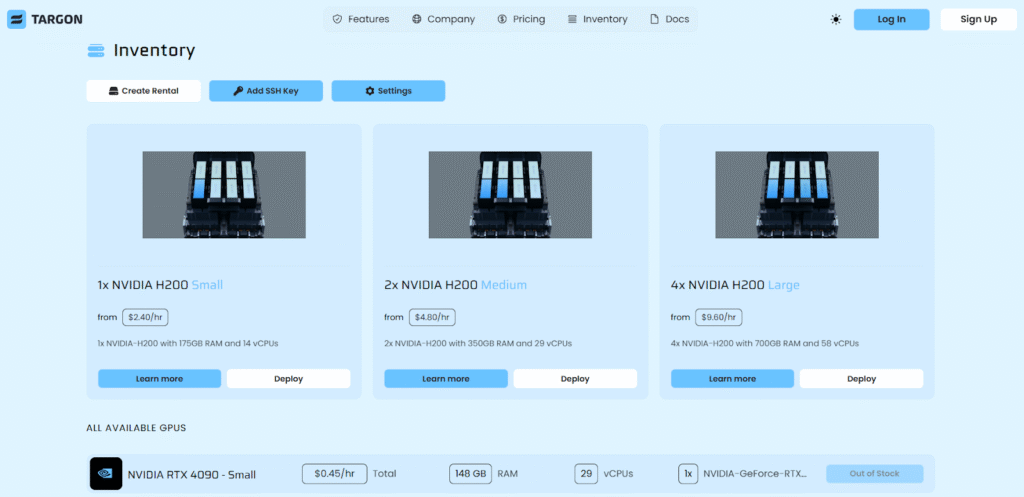

Core Infrastructure Capabilities

Targon’s infrastructure is designed to deliver performance, flexibility, and efficiency without compromising on decentralization. Key capabilities of this system include:

a. GPU-Accelerated Compute: Access to advanced NVIDIA hardware, including H200s and RTX 4090s, enables efficient execution of training and inference workloads across a wide range of AI applications,

b. Serverless Auto-Scaling: Compute resources scale automatically based on demand, allowing applications to handle variable workloads without manual provisioning or over-allocation,

c. Pay-As-You-Use Model: Resource consumption is metered dynamically, ensuring that users only pay for what they actually use rather than maintaining idle infrastructure, and

d. Flexible Developer Access: Integration options such as Python SDKs (Software Development Kits), CLI (Command Line Interface) tools, serverless deployments, and dedicated rentals provide multiple pathways for interacting with the system.

These capabilities collectively allow Targon to support both experimental and production-grade AI workloads in a manner that is efficient, scalable, and accessible.
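To make the serverless and pay-as-you-use points concrete, here is a minimal sketch of the general pattern, not Targon's implementation: a replica count derived from in-flight requests, and a bill computed from the GPU-seconds actually allocated, compared with a fleet sized for peak load. The policy names, the one-GPU-per-replica assumption, the per-minute load samples, and the $2.00 GPU-hour rate are all hypothetical.

```python
import math
from dataclasses import dataclass


# HYPOTHETICAL scaling policy: names and numbers are illustrative, not Targon settings.
@dataclass
class ScalingPolicy:
    target_inflight_per_replica: int = 8  # requests one replica should serve at once
    min_replicas: int = 0                 # 0 allows scale-to-zero when idle
    max_replicas: int = 16


def desired_replicas(inflight_requests: int, policy: ScalingPolicy) -> int:
    """Replicas needed to keep per-replica load at or below the target, clamped to limits."""
    needed = math.ceil(inflight_requests / policy.target_inflight_per_replica)
    return max(policy.min_replicas, min(policy.max_replicas, needed))


def metered_cost(replica_counts: list, seconds_per_sample: float, rate_per_gpu_hour: float) -> float:
    """Pay-as-you-use: bill only the GPU-seconds actually allocated (assumes 1 GPU per replica)."""
    gpu_seconds = sum(replica_counts) * seconds_per_sample
    return gpu_seconds / 3600 * rate_per_gpu_hour


def dedicated_cost(peak_replicas: int, total_seconds: float, rate_per_gpu_hour: float) -> float:
    """Provisioning for peak: every GPU is billed for the whole window, idle or not."""
    return peak_replicas * total_seconds / 3600 * rate_per_gpu_hour


if __name__ == "__main__":
    policy = ScalingPolicy()
    load = [0, 3, 40, 120, 10, 0]  # illustrative in-flight requests, one sample per minute
    replicas = [desired_replicas(n, policy) for n in load]
    print(replicas)  # [0, 1, 5, 15, 2, 0]
    rate = 2.0  # hypothetical price per GPU-hour
    print(round(metered_cost(replicas, 60, rate), 2))  # 0.77
    print(round(dedicated_cost(max(replicas), 60 * len(load), rate), 2))  # 3.0
```

Scaling to zero between bursts is what keeps the metered bill well below the cost of a fleet held at peak size for the whole window.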

Security by Design: Confidential AI at the Core

A defining characteristic of Targon is its emphasis on confidential computing, where data protection is enforced through hardware rather than policy.

The security architecture consists of:

a. Trusted Execution Environments (TEEs): Intel TDX and AMD SEV isolate computation from the host system, ensuring that data remains protected even from infrastructure operators,

b. GPU-Level Confidential Computing: NVIDIA Confidential Computing extends protection to GPU memory, enabling secure execution of AI workloads during both training and inference, and

c. Hardware-Backed Protection via TVM: The Targon Virtual Machine (TVM) provides a secure execution layer where encrypted data can be processed without exposure.

This approach ensures that:

a. Sensitive data remains inaccessible outside secure environments,

b. AI workloads can operate on private information without leakage, and

c. The need to trust infrastructure operators is minimized, because protection is enforced at the hardware level.

By embedding confidentiality directly into the infrastructure, Targon enables AI systems to be deployed in environments where privacy is a strict requirement rather than an optional feature.
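Hardware-enforced confidentiality typically relies on remote attestation: before a data owner releases a decryption key, the protected environment proves which code it is running. The sketch below shows that gatekeeping logic in general terms. It is not Targon's or TVM's actual protocol, and the names (`produce_report`, `release_key`, `HARDWARE_KEY`) are hypothetical. A shared HMAC key stands in for the hardware signature so the example runs with only the standard library; real TDX, SEV-SNP, and NVIDIA Confidential Computing reports are signed by keys held inside the chip and verified against vendor certificate chains.

```python
import hashlib
import hmac
import secrets
from typing import Optional

# HYPOTHETICAL stand-in for a hardware-rooted signing key. Real attestation uses
# asymmetric keys inside the CPU/GPU; a shared HMAC key is used only so this runs.
HARDWARE_KEY = secrets.token_bytes(32)


def measure(workload_image: bytes) -> str:
    """Hash of the code loaded into the protected environment (its 'measurement')."""
    return hashlib.sha256(workload_image).hexdigest()


def produce_report(workload_image: bytes, nonce: bytes) -> dict:
    """Simulates the hardware: binds the measurement to the verifier's nonce and signs both."""
    measurement = measure(workload_image)
    signature = hmac.new(HARDWARE_KEY, measurement.encode() + nonce, hashlib.sha256).hexdigest()
    return {"measurement": measurement, "nonce": nonce, "signature": signature}


def release_key(report: dict, expected_measurement: str, nonce: bytes, data_key: bytes) -> Optional[bytes]:
    """Data-owner side: return the decryption key only if every check passes."""
    payload = report["measurement"].encode() + report["nonce"]
    expected_sig = hmac.new(HARDWARE_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected_sig, report["signature"]):
        return None  # report was not signed by the (simulated) hardware
    if report["nonce"] != nonce:
        return None  # stale or replayed report
    if report["measurement"] != expected_measurement:
        return None  # unapproved code is running in the environment
    return data_key


if __name__ == "__main__":
    approved_image = b"model-server v1"
    data_key = secrets.token_bytes(32)
    nonce = secrets.token_bytes(16)

    honest = produce_report(approved_image, nonce)
    print(release_key(honest, measure(approved_image), nonce, data_key) == data_key)  # True

    modified = produce_report(b"model-server v1 + debug hook", nonce)
    print(release_key(modified, measure(approved_image), nonce, data_key))  # None
```

The key point is the ordering: sensitive data stays encrypted until the environment's identity has been checked, so an operator who swaps in different code never receives the key.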

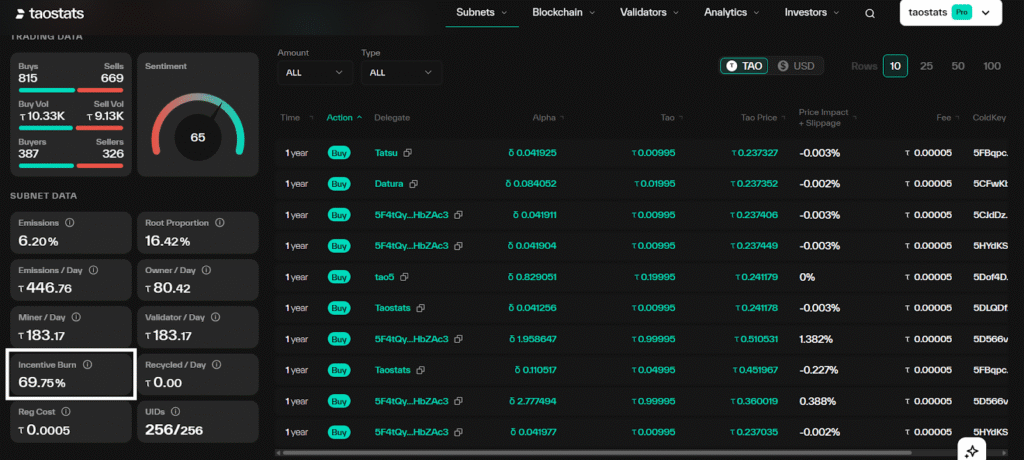

Targon’s $ALPHA and Its Burn Dynamics

Targon’s economic model is built within Bittensor’s Dynamic $TAO framework, incorporating liquidity, emissions, and incentive alignment into a unified system.

The liquidity structure of this ecosystem includes:

a. $TAO Reserve: The staked $TAO held within the subnet, forming the base layer of economic participation, and

b. Subnet $ALPHA Reserve: The subnet's pool of $ALPHA, a subnet-specific token used to facilitate internal transactions and incentives.

Participants can stake $TAO to acquire $ALPHA, enabling fluid interaction between the two assets and supporting continuous economic activity.
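Bittensor's Dynamic $TAO design prices each subnet's $ALPHA through a pool holding the two reserves described above. As a rough illustration only, the sketch below models that pool as a simple constant-product market maker with no fees; the `SubnetPool` class and the reserve sizes are made up, and the live chain's exact pool mechanics may differ.

```python
from dataclasses import dataclass


@dataclass
class SubnetPool:
    """Toy constant-product pool: tao_reserve * alpha_reserve stays constant across swaps.

    ASSUMPTION: fees, slippage limits, and emission injections are ignored.
    """
    tao_reserve: float
    alpha_reserve: float

    def price(self) -> float:
        """Spot price of one $ALPHA, denominated in $TAO."""
        return self.tao_reserve / self.alpha_reserve

    def stake(self, tao_in: float) -> float:
        """Deposit $TAO into the pool; return the $ALPHA received."""
        k = self.tao_reserve * self.alpha_reserve
        new_tao = self.tao_reserve + tao_in
        new_alpha = k / new_tao
        alpha_out = self.alpha_reserve - new_alpha
        self.tao_reserve, self.alpha_reserve = new_tao, new_alpha
        return alpha_out

    def unstake(self, alpha_in: float) -> float:
        """Return $ALPHA to the pool; return the $TAO received."""
        k = self.tao_reserve * self.alpha_reserve
        new_alpha = self.alpha_reserve + alpha_in
        new_tao = k / new_alpha
        tao_out = self.tao_reserve - new_tao
        self.tao_reserve, self.alpha_reserve = new_tao, new_alpha
        return tao_out


if __name__ == "__main__":
    pool = SubnetPool(tao_reserve=1_000.0, alpha_reserve=10_000.0)  # illustrative reserves
    print(pool.price())            # 0.1 TAO per ALPHA before the trade
    received = pool.stake(100.0)
    print(round(received, 2))      # 909.09 ALPHA (under 1,000 because of price impact)
    print(round(pool.price(), 4))  # 0.121 TAO per ALPHA after the trade
```

Because each stake moves the ratio of the reserves, buying $ALPHA pushes its $TAO price up and selling pushes it down, which is how the two assets stay continuously convertible.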

However, a significant portion of incentives is burned, and the burn rate is dynamic rather than fixed. Targon defines a target number of nodes and a price it will pay for those nodes; any remaining emissions are burned. The burn percentage therefore fluctuates: it can go as low as ~30% and can rise considerably depending on Targon and $TAO price movements. The live burn rate can be monitored at targon.money. This burn mechanism reduces circulating supply pressure and reinforces long-term economic sustainability while aligning incentives across participants.
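The burn rule above can be expressed as a small calculation. This is a hypothetical sketch, not Targon's published formula: it assumes the per-node price is fixed in USD, and the emission, node, and price figures are invented. Under those assumptions, a higher $ALPHA or $TAO price means fewer $ALPHA are needed to pay for the target nodes, so a larger share of emissions is burned.

```python
def burn_fraction(
    emission_alpha: float,
    target_nodes: int,
    usd_price_per_node: float,
    alpha_price_tao: float,
    tao_price_usd: float,
) -> float:
    """Share of a period's miner emissions burned after paying for the target nodes.

    ASSUMPTION: node payments are fixed in USD, so the $ALPHA needed to cover them
    depends on both the $ALPHA/$TAO price and the $TAO/USD price.
    """
    alpha_price_usd = alpha_price_tao * tao_price_usd
    alpha_needed = target_nodes * usd_price_per_node / alpha_price_usd
    paid = min(alpha_needed, emission_alpha)  # cannot pay out more than was emitted
    return (emission_alpha - paid) / emission_alpha


if __name__ == "__main__":
    # Illustrative numbers only: 1,000 ALPHA emitted, 50 nodes at $20 each ($1,000 total).
    base = dict(emission_alpha=1_000.0, target_nodes=50, usd_price_per_node=20.0, alpha_price_tao=0.005)
    print(round(burn_fraction(**base, tao_price_usd=400.0), 2))  # 0.5
    print(round(burn_fraction(**base, tao_price_usd=800.0), 2))  # 0.75
```

Doubling the $TAO price in this toy model halves the $ALPHA needed for node payments and lifts the burn share from 50% to 75%, matching the article's point that the rate moves with market prices.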

This structure ensures that the subnet remains economically balanced while still incentivizing high-quality contributions.

Infrastructure, Intelligence, and Incentives Aligned

Targon represents a fundamental shift in how AI infrastructure is designed and operated, moving away from centralized, trust-based systems toward a model where performance, privacy, and participation are aligned within a decentralized framework.

By combining high-performance compute, hardware-enforced confidentiality, and incentive-driven coordination, Targon establishes a system where AI services are not only produced efficiently but are continuously refined through competition and validation.

It is not simply a platform for running AI, but a marketplace where intelligence is created, evaluated, and improved in real time.

As AI continues to expand into domains that demand both scale and confidentiality, Targon provides a blueprint for infrastructure that does not compromise between power and privacy, but instead integrates them into a single, coherent system.
