There is a subtle shift happening in AI infrastructure. As models become more powerful, the conversation is no longer just about access to compute.

It is about who controls that compute, and more importantly, how secure it is while running.

This is where things start to get interesting.

Because while most platforms are still optimizing for speed and scale, a new category is emerging. One that treats privacy, isolation, and verifiability as first-class requirements.

Targon is positioning itself right at the center of that shift.

A Strategic Entry Into NVIDIA’s Startup Ecosystem

Targon, Bittensor Subnet 4, has been accepted into the NVIDIA Inception program. On the surface, it is a milestone many startups reach, but in this context, it signals something more meaningful.

It places the decentralized compute network into one of the most influential AI ecosystems in the world, and it does so without compromising ownership or alignment.

The team has made it clear that this is not just symbolic. The focus is to enhance what they call the Confidential NVIDIA GPU (graphics processing unit) experience within their platform.

What Targon Is Actually Building

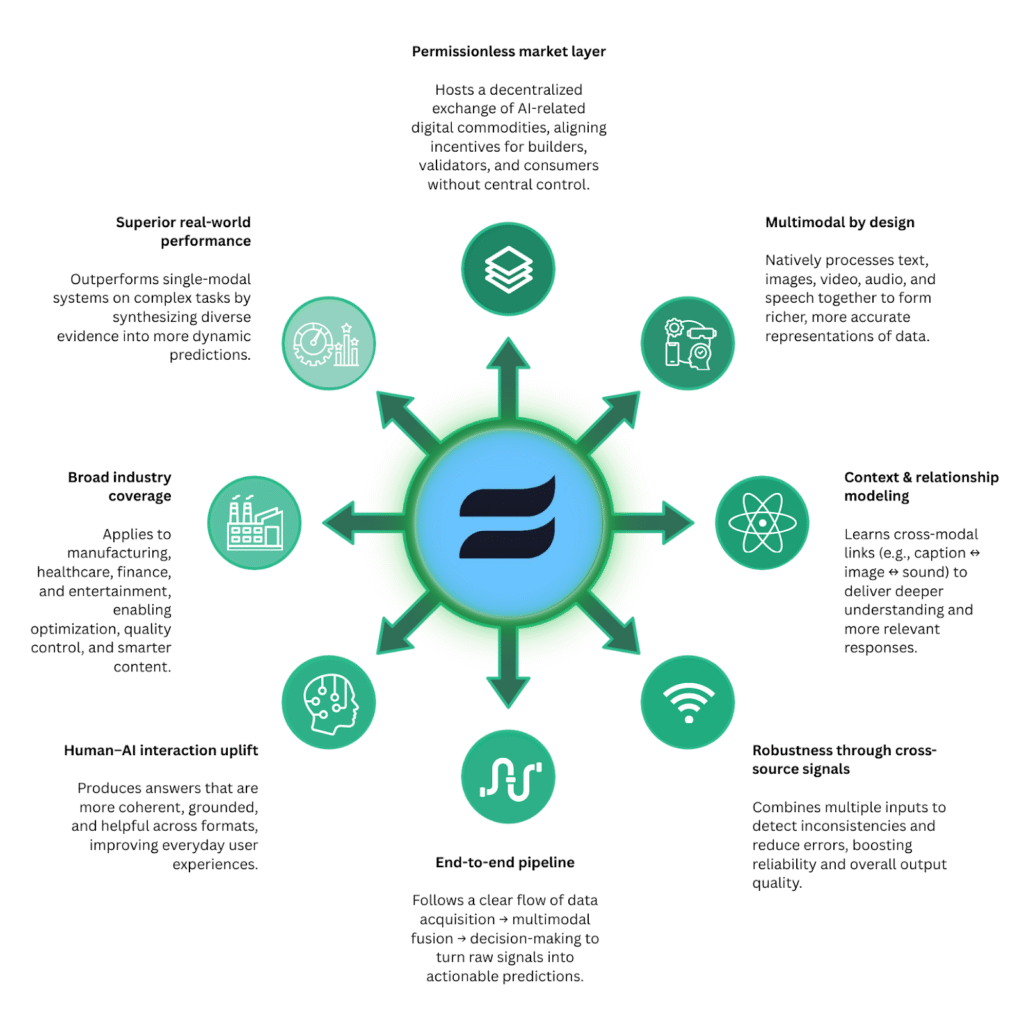

Targon is not just another GPU marketplace. It is a decentralized infrastructure layer for AI inference and compute, designed to balance three things that rarely coexist well:

a. High performance,

b. Scalability, and

c. Data confidentiality.

Instead of relying on centralized providers, Targon distributes workloads across a network of miners who supply GPU resources. This creates a marketplace where compute is both accessible and permissionless.

But the real differentiation is not distribution. It is how computation is executed.

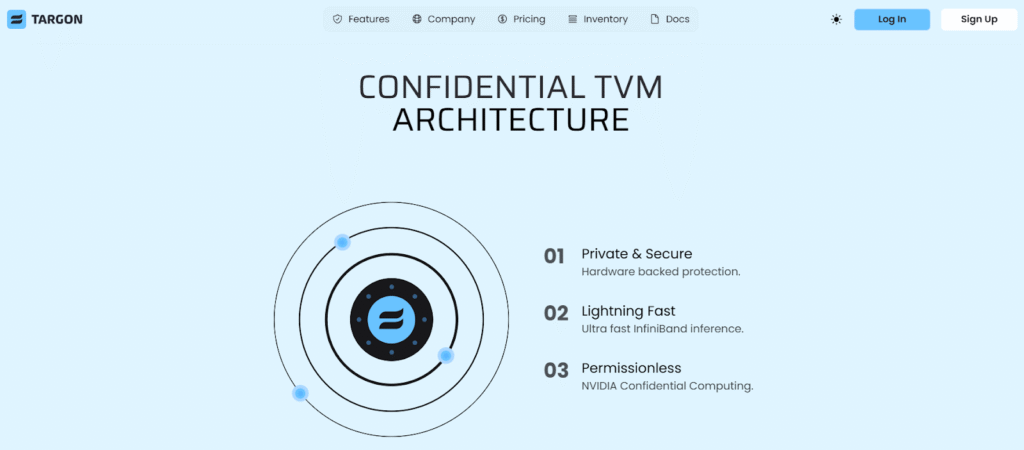

Confidential Compute as the Foundation

Most AI infrastructure today assumes a level of trust in the provider. Targon removes that assumption.

Through its architecture, developers can run workloads in environments where data remains protected, even during execution. This is made possible through a combination of:

a. Hardware-isolated environments,

b. Trusted execution mechanisms, and

c. Confidential GPU processing.

At the center of this system is the Targon Virtual Machine (TVM).

The TVM allows AI models to run inside secure enclaves, ensuring that sensitive inputs and outputs are never exposed to the underlying host system. This is particularly relevant for:

a. Enterprise AI workloads,

b. Privacy-sensitive data processing, and

c. Proprietary model deployment.
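The article does not describe how the TVM proves that a workload is really running inside an enclave. A common pattern in confidential computing is remote attestation, where the client checks a report from the enclave before sending any sensitive data. The sketch below illustrates that idea only. The `AttestationReport` type, its fields, and the helper functions are hypothetical and are not part of any Targon or NVIDIA API. Real attestation also verifies a hardware vendor signature over the report, which is omitted here.

```python
import hashlib
import hmac
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationReport:
    """Hypothetical report returned by an enclave (illustrative, not a real API)."""
    measurement: str  # hex digest of the code the enclave claims to have loaded
    nonce: str        # echo of the client's challenge, used to detect replays


def expected_measurement(workload_image: bytes) -> str:
    """Digest the client computes locally for the workload it intends to run."""
    return hashlib.sha256(workload_image).hexdigest()


def is_trusted(report: AttestationReport, workload_image: bytes, nonce: str) -> bool:
    """Accept the enclave only if it runs the expected code and answered this challenge."""
    same_code = hmac.compare_digest(
        report.measurement, expected_measurement(workload_image)
    )
    fresh = hmac.compare_digest(report.nonce, nonce)
    return same_code and fresh
```

In this sketch, a client would send its sensitive input only after `is_trusted` returns `True`, and would refuse to proceed if the measurement or the nonce does not match.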

In a world moving toward AI everywhere, this level of control is becoming less optional.

Why NVIDIA Inception Matters Here

Beyond being a “badge,” the NVIDIA Inception program provides tangible advantages that can accelerate Targon’s roadmap:

a. Access to advanced developer tooling and technical training,

b. Preferential pricing on NVIDIA hardware and software,

c. Exposure to a global network of partners and investors, and

d. Go-to-market support at scale.

Importantly, it does all of this without taking equity.

For a decentralized project, that matters, because it allows Targon to scale its infrastructure while staying aligned with its network-driven model.

Where This Fits Within Bittensor

Targon’s role inside the Bittensor ecosystem is becoming increasingly clear. While many subnets focus on intelligence and model outputs, Targon focuses on the compute layer that makes those outputs possible.

It provides:

a. Infrastructure for running AI workloads,

b. A marketplace for GPU access, and

c. A privacy-preserving execution environment.

This positions it as a foundational piece in a broader system where other subnets generate intelligence and Targon enables secure execution.

That interplay is where long-term value begins to compound.

A Signal, Not Just an Announcement

This development is easy to overlook as just another partnership announcement, but it really is not.

It reflects a growing recognition that decentralized infrastructure is no longer just experimental. It is becoming relevant to the broader AI ecosystem.

More specifically, it highlights a shift toward:

a. Verifiable compute,

b. Confidential execution, and

c. Decentralized access to high-performance hardware.

Targon is aligning itself with that future early.

Securing a Faster and More Secure Phase of AI Infrastructure

For a long time, the race in AI was about capability. Now it is expanding into something more nuanced: trust, privacy, and control.

Targon’s entry into the NVIDIA Inception program does not just accelerate its growth. It also strengthens its position in a category that is only going to become more important.

Because as AI systems move closer to real-world deployment, the question is no longer just what they can do. It is whether they can do it securely, privately, and without compromise.

That is exactly the layer Targon is building.
