Something fundamental is shifting inside Bittensor. Mining is no longer just about training better models; it is now about orchestrating intelligent systems that can research, iterate, and deploy around the clock.

With the rise of OpenClaw, agents now work continuously, probing the edges of performance day and night. But there is a hard truth most miners discover quickly: unstructured agents do not create leverage. They create chaos, duplicate experiments, and burn GPU (Graphics Processing Unit) hours.

They produce models that shine in local evaluation but collapse under subnet validation. The issue was never effort. It was direction.

That realization led to Synth City.

From Endless Loops to Directed Research

If you have mined before, you know the pattern: train, submit, wait, score, adjust, and repeat. Now, imagine automating that loop with agents running 24 hours a day.

Without coordination, they simply retry the same failures faster, miss patterns hidden in prior results, and consume decentralized compute chasing dead ends.

The bottleneck was never compute. It was knowing what to try next.

Synth City was designed to solve exactly that, not by adding more agents but by giving them structure.

What Synth City Actually Does

Synth City is an agentic R&D (Research and Development) layer built for mining Bittensor Subnet 50 (Synth). It automates the full experiment lifecycle:

a. Plans experiments based on historical results,

b. Trains models on decentralized GPUs,

c. Validates outputs against Subnet 50 specifications,

d. Diagnoses failures by parsing logs and tracing root causes,

e. Attempts automated fixes, and

f. Publishes winning models to production.

This is not one monolithic agent trying to do everything. It is a chain of specialized agents, each responsible for a defined phase.

That separation makes the system debuggable, resilient, and continuously improvable.
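The chain-of-phases idea can be sketched in a few lines of Python. This is a minimal illustration, not Synth City's actual code: `RunState`, the phase functions, and `run_pipeline` are hypothetical names, and each phase body is a stand-in for the real agent. The point is that every phase takes and returns the same state object, so a failing phase can be inspected or replaced on its own.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class RunState:
    """Shared record handed from one phase agent to the next (hypothetical)."""
    config: Dict[str, str] = field(default_factory=dict)
    valid: bool = False
    published: bool = False
    log: List[str] = field(default_factory=list)


Phase = Callable[[RunState], RunState]


def plan(state: RunState) -> RunState:
    # Stand-in for planning from historical results.
    state.config = {"block": "LSTMBlock", "head": "SDEHead"}
    state.log.append("plan")
    return state


def train(state: RunState) -> RunState:
    # Stand-in for a training job on decentralized GPUs.
    state.log.append("train")
    return state


def validate(state: RunState) -> RunState:
    # Stand-in check; a real validator would test outputs against the subnet spec.
    state.valid = "block" in state.config and "head" in state.config
    state.log.append("validate")
    return state


def diagnose_and_fix(state: RunState) -> RunState:
    # Only acts on failed runs; a real agent would parse logs to find the cause.
    if not state.valid:
        state.config.setdefault("head", "SDEHead")
        state.valid = True
        state.log.append("fix")
    return state


def publish(state: RunState) -> RunState:
    # Publish only runs that passed validation (possibly after a fix).
    if state.valid:
        state.published = True
        state.log.append("publish")
    return state


PHASES: List[Phase] = [plan, train, validate, diagnose_and_fix, publish]


def run_pipeline(phases: List[Phase], state: Optional[RunState] = None) -> RunState:
    """Run each phase in order, passing the shared state along the chain."""
    if state is None:
        state = RunState()
    for phase in phases:
        state = phase(state)
    return state
```

Calling `run_pipeline(PHASES)` walks one experiment through the chain. Swapping a single function in the list changes one phase without touching the others.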

Humans can still operate the orchestration layer directly, since the tooling supports both manual and autonomous workflows. The big difference is that bots do not sleep.

The Three-Layer Architecture

The system is deliberately structured into three layers: stability at the base, adaptability in the middle, and strategic velocity at the top.

1. The Workshop Floor (Open Synth Miner): This is where training actually happens, and it provides:

a. Composable PyTorch building blocks,

b. A uniform tensor interface across all components, and

c. A modular library of architectures, loss functions, and data pipelines.

Every component speaks the same tensor language, and that consistency allows higher-level agents to swap blocks freely without worrying about compatibility.

When the foundation is stable, experimentation accelerates.
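To make the "same tensor language" idea concrete, here is a dependency-free sketch. Nested Python lists shaped `[batch][time][features]` stand in for PyTorch tensors so the example runs anywhere, and `scale_block`, `cumsum_block`, and `compose` are hypothetical names rather than Open Synth Miner APIs. The sketch assumes non-empty inputs and a contract in which every block returns the same shape it receives.

```python
from typing import Callable, List, Tuple

# Stand-in for a tensor: [batch][time][features].
Batch = List[List[List[float]]]
Block = Callable[[Batch], Batch]


def shape(x: Batch) -> Tuple[int, int, int]:
    """Return (batch, time, features) for a non-empty nested list."""
    return (len(x), len(x[0]), len(x[0][0]))


def scale_block(factor: float) -> Block:
    """Block that multiplies every value by a constant."""
    def apply(x: Batch) -> Batch:
        return [[[v * factor for v in step] for step in seq] for seq in x]
    return apply


def cumsum_block() -> Block:
    """Block that takes a running sum along the time axis."""
    def apply(x: Batch) -> Batch:
        out = []
        for seq in x:
            running = [0.0] * len(seq[0])
            new_seq = []
            for step in seq:
                running = [r + v for r, v in zip(running, step)]
                new_seq.append(list(running))
            out.append(new_seq)
        return out
    return apply


def compose(*blocks: Block) -> Block:
    """Chain blocks, checking that each one honors the shared shape contract."""
    def apply(x: Batch) -> Batch:
        expected = shape(x)
        for block in blocks:
            x = block(x)
            if shape(x) != expected:
                raise ValueError(f"block changed shape {expected} -> {shape(x)}")
        return x
    return apply
```

Because both blocks accept and return the same shape, `compose(scale_block(2.0), cumsum_block())` and `compose(cumsum_block(), scale_block(2.0))` are both valid pipelines. That interchangeability is what lets a higher-level agent reorder or replace blocks without compatibility checks of its own.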

2. The Research Engineer (Synth City): This is the autonomous R&D engine that plans experiments intentionally. It reviews past runs and identifies what improved performance, what failed, and what combinations remain unexplored.

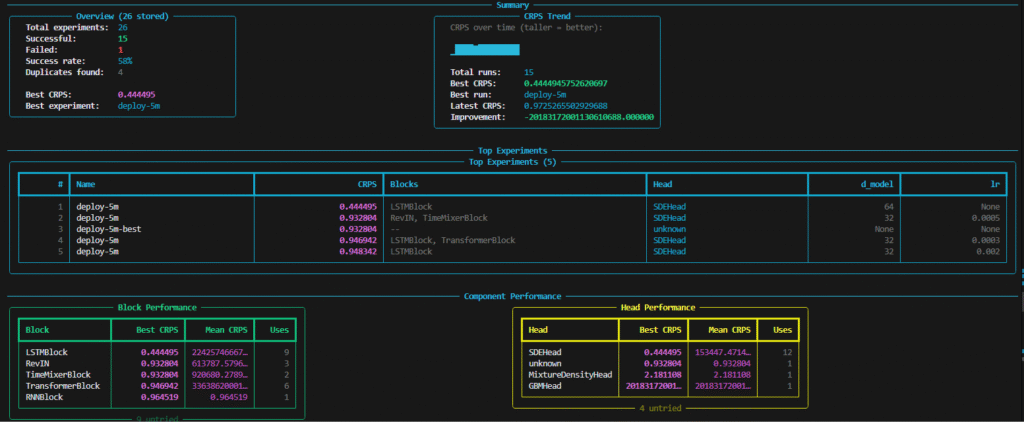

In practice, a recent dashboard snapshot showed:

a. 26 experiments executed,

b. 15 successful runs,

c. Best CRPS score of 0.444, and

d. LSTMBlock with SDEHead leading performance.
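For readers unfamiliar with the metric in that snapshot, CRPS (Continuous Ranked Probability Score) measures how closely a set of simulated outcomes matches the value that was actually observed, and lower is better. Below is a minimal sketch of the standard sample-based estimator. The function name is illustrative, and Subnet 50's exact scoring procedure may differ from this textbook form.

```python
from typing import Sequence


def crps_ensemble(samples: Sequence[float], observed: float) -> float:
    """Sample-based CRPS estimate: mean|x - y| - 0.5 * mean|x - x'|."""
    n = len(samples)
    if n == 0:
        raise ValueError("need at least one sample")
    # Average distance between each simulated value and the observation.
    accuracy = sum(abs(s - observed) for s in samples) / n
    # Average distance between all pairs of simulated values.
    spread = sum(abs(a - b) for a in samples for b in samples) / (n * n)
    return accuracy - 0.5 * spread
```

With a single sample the spread term vanishes and the score reduces to plain absolute error, which is a quick sanity check on the formula.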

Agents monitor which model blocks and output heads perform best, track trend lines, and observe score progression over time.

Every new experiment is informed by real data, not guesswork.
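One way to picture data-informed planning is a function that looks at the run history and proposes an untried block and head combination close to the current best. This is an assumed heuristic for illustration only. `next_experiment` is a hypothetical name, `GRUBlock`, `GaussianHead`, and `MixtureHead` are made-up component names, and the scores in the usage example are placeholders rather than real results.

```python
from itertools import product
from typing import List, Optional, Sequence, Tuple

# (block, head, crps); crps is None when the run failed.
Run = Tuple[str, str, Optional[float]]
Combo = Tuple[str, str]


def best_run(history: Sequence[Run]) -> Optional[Run]:
    """Return the scored run with the lowest CRPS, or None if nothing scored."""
    scored = [r for r in history if r[2] is not None]
    return min(scored, key=lambda r: r[2]) if scored else None


def next_experiment(
    blocks: Sequence[str], heads: Sequence[str], history: Sequence[Run]
) -> Optional[Combo]:
    """Pick an untried combo, preferring ones that reuse the best block or head."""
    tried = {(b, h) for b, h, _ in history}
    untried: List[Combo] = [c for c in product(blocks, heads) if c not in tried]
    if not untried:
        return None  # search space exhausted
    best = best_run(history)
    if best is None:
        return untried[0]  # no signal yet, so start anywhere

    def overlap(combo: Combo) -> int:
        # Count how many components the combo shares with the best run.
        return int(combo[0] == best[0]) + int(combo[1] == best[1])

    return max(untried, key=overlap)


# Usage with placeholder data (scores are illustrative, not real results).
history = [("LSTMBlock", "SDEHead", 0.50), ("GRUBlock", "GaussianHead", None)]
choice = next_experiment(
    ["LSTMBlock", "GRUBlock"], ["SDEHead", "GaussianHead", "MixtureHead"], history
)
```

Here `choice` is an untried combination that shares a component with the best run so far. The planner never repeats a combination, which addresses the duplicate-experiment problem described earlier.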

Training runs are distributed via Subnet 39 (Basilica), and validation happens against Subnet 50 (Synth) requirements before submission, reducing wasted attempts. Failures trigger automated diagnosis and iterative fixes.

The result is a self-improving research loop.

3. The Research Directors (OpenClaw Bots): This is where strategic evolution happens. OpenClaw bots sit above the orchestration layer and steer long-term direction. They analyze experiment trends across the system and allocate compute to promising research paths.
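As a rough illustration of what allocating compute to promising paths could look like, the sketch below splits a GPU-hour budget across research paths in proportion to their recent score improvement, while reserving a small equal share so that no path is starved. The heuristic, the function name, and the `floor` parameter are all assumptions and do not describe OpenClaw's documented policy.

```python
from typing import Dict


def allocate_gpu_hours(
    budget: float, improvement: Dict[str, float], floor: float = 0.1
) -> Dict[str, float]:
    """Split a budget: an equal exploration share plus a share by improvement.

    improvement maps a research path to its recent score gain (positive = better).
    floor is the fraction of the budget divided equally among all paths.
    """
    if not improvement:
        return {}
    n = len(improvement)
    reserved = budget * floor
    # Paths that regressed earn no performance-based share.
    gains = {path: max(gain, 0.0) for path, gain in improvement.items()}
    total = sum(gains.values())
    allocation = {}
    for path, gain in gains.items():
        # With no positive gains anywhere, fall back to an even split.
        share = gain / total if total > 0 else 1.0 / n
        allocation[path] = reserved / n + (budget - reserved) * share
    return allocation
```

The equal share keeps weaker hypotheses alive long enough to prove themselves, while the proportional share concentrates compute where scores are actually moving.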

Multiple bots operate in parallel, each isolated, exploring different hypotheses:

a. One might pursue transformer variants,

b. Another might test alternative loss formulations, and

c. Another might introduce novel architectural hybrids.

They do not interfere with one another, but their findings feed into shared knowledge. More interestingly, these bots can extend the system itself.

If the pipeline lacks a specialized data augmentation stage, a bot can design it, implement it, and integrate it into the registry.

The system does not just optimize models. It also evolves its own workflow.
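A registry is one common way to let new stages plug into an existing pipeline, and a minimal version is sketched here. Everything in it is hypothetical: the `register` decorator, the `REGISTRY` dictionary, and the `normalize` and `jitter` stages are illustrative stand-ins, not Synth City's actual registry. The noise is seeded so the example is reproducible.

```python
import random
from typing import Callable, Dict, List, Sequence

Stage = Callable[[List[float]], List[float]]

# Name -> stage lookup that pipelines are assembled from.
REGISTRY: Dict[str, Stage] = {}


def register(name: str) -> Callable[[Stage], Stage]:
    """Decorator that adds a stage to the registry under a unique name."""
    def decorator(fn: Stage) -> Stage:
        if name in REGISTRY:
            raise KeyError(f"stage {name!r} already registered")
        REGISTRY[name] = fn
        return fn
    return decorator


@register("normalize")
def normalize(series: List[float]) -> List[float]:
    """Existing stage: scale values by the largest absolute value."""
    peak = max((abs(v) for v in series), default=0.0) or 1.0
    return [v / peak for v in series]


# A stage a bot might add later; nothing else in the pipeline has to change.
@register("jitter")
def jitter(series: List[float]) -> List[float]:
    """New augmentation stage: add small seeded noise to each value."""
    rng = random.Random(0)
    return [v + rng.uniform(-0.01, 0.01) for v in series]


def build_pipeline(names: Sequence[str]) -> Stage:
    """Assemble registered stages, in order, into a single callable."""
    stages = [REGISTRY[name] for name in names]

    def run(series: List[float]) -> List[float]:
        for stage in stages:
            series = stage(series)
        return series

    return run
```

Once `jitter` is registered, `build_pipeline(["normalize", "jitter"])` picks it up by name. Extending the workflow becomes a matter of adding one function rather than editing the pipeline itself.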

A Tiered Adaptation Framework

There is a clear pattern across the stack:

a. The model framework changes slowly,

b. The orchestration layer adapts regularly, and

c. The strategic bot layer pivots rapidly.

That gradient is intentional: the foundations must remain solid, and the strategy must remain fluid. If every shift in research direction required rewriting the training substrate, progress would stall.

Loose coupling between layers allows strategic agents to iterate at high velocity without destabilizing the base.

Is Synth City Bittensor Native?

The entire stack runs natively on Bittensor infrastructure. Synth City uses:

a. Subnet 39 (Basilica) for distributed compute,

b. Subnet 64 (Chutes) for inference powering the agents,

c. Subnet 75 (Hippius) for storage of logs, checkpoints, and experiment history, and

d. Subnet 50 (Synth) as the target subnet.
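The mapping above could be captured in a small configuration object. The subnet numbers and names come from the list itself, but the `SubnetRole` class, the `STACK` tuple, and the `netuid_for` helper are hypothetical names chosen for this sketch.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class SubnetRole:
    """One subnet and the job it performs in the stack (hypothetical type)."""
    netuid: int
    name: str
    role: str


STACK: Tuple[SubnetRole, ...] = (
    SubnetRole(39, "Basilica", "distributed compute"),
    SubnetRole(64, "Chutes", "agent inference"),
    SubnetRole(75, "Hippius", "storage for logs, checkpoints, and experiment history"),
    SubnetRole(50, "Synth", "target subnet"),
)


def netuid_for(role_keyword: str) -> int:
    """Look up the subnet whose role description contains the keyword."""
    matches = [s.netuid for s in STACK if role_keyword in s.role]
    if len(matches) != 1:
        raise LookupError(f"expected one match for {role_keyword!r}, got {len(matches)}")
    return matches[0]
```

Keeping the roles in one place means an agent asks for "compute" or "storage" rather than hard-coding a subnet number, so a provider can be swapped by editing a single entry.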

Running directly on the subnets being mined creates a natural feedback loop with the ecosystem. Decentralized compute also removes dependence on a single provider’s pricing or capacity limits.

It is vertically integrated mining within a decentralized framework.

Synth City Beyond Bittensor Subnet 50 (Synth)

Synth City is still early. The architecture is live, but refinement continues. Next steps in this phase include:

a. Smarter experiment planning heuristics,

b. Improved automated failure diagnosis, and

c. Tighter feedback between strategic bots and training outcomes.

In the longer term, the ambition is broader than Synth. The three-layer structure (stable model substrate, adaptive orchestration, autonomous strategy) is not specific to Subnet 50.

If it proves durable here, it becomes a reusable template for agentic mining across other subnet types.

A New Standard for Autonomous Mining

The point is that agents alone do not create advantage. Structured agents do.

When experimentation compounds instead of scattering, decentralized compute becomes an engine rather than a sinkhole. Models improve faster, research becomes directional, and strategy evolves continuously.

Agentic mining is not simply about replacing humans. It is about building systems that remember, reason, and refine without friction.

In that sense, Synth City is more than a mining tool. It is an early blueprint for how autonomous R&D might operate across Bittensor as the network matures.
