Bittensor has a noise problem. Over a hundred subnets compete for emissions, liquidity, and attention. But strip away the branding and the Twitter hype, and a harder question emerges: which of these projects are actually building something worth paying for?

Jacob Steeves (“Const”), Bittensor’s co-founder and one of the ecosystem’s sharpest critics-from-within, proposed a simple framework to answer that question. Six filters. If a subnet can’t clear all six, it probably shouldn’t command serious capital.

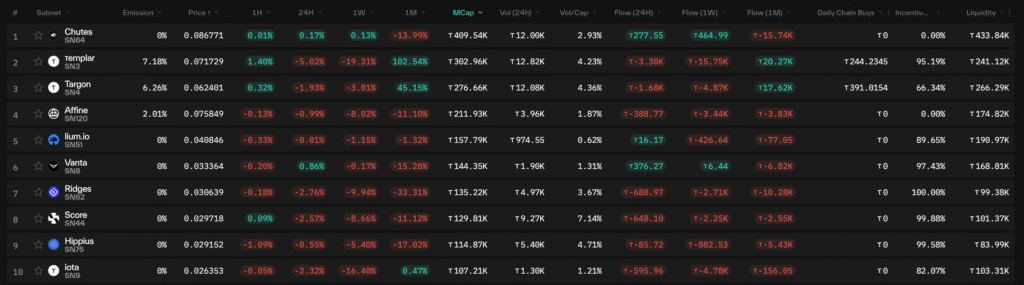

What’s striking is that the current top 10 subnets by market cap pass every single one. That’s not a coincidence. It’s the market doing exactly what Const’s framework predicts.

The Six Filters

The framework works like a progressive sieve. Each filter is binary (yes or no), and each one eliminates a different species of pretender.

1. Does it produce a digital commodity? Not a token. Not a governance vote. A commodity, something a buyer would pay for independent of the Bittensor ecosystem. Inference calls. Trained model weights. Storage. Annotated data. AI agents. If the subnet disappeared from Bittensor tomorrow, would anyone outside the network notice?

2. Are the miners actually productive? Mining in Bittensor isn’t proof-of-work in the Bitcoin sense; it’s proof-of-useful-work. The question is whether miners are generating real utility, such as running GPU workloads, training models, storing files, and creating state-of-the-art (SOTA) agents, or whether they’re just gaming a reward function.

3. Is it intelligent? This is Bittensor, not a generic compute network. The subnet should involve genuine AI reasoning, adaptation, or learning. The strongest subnets embed intelligence at their core.

4. Is it hard? Easy tasks get commoditized, memorized, and gamed. The best subnets push toward the state of the art where miners have to actually think. Permissionless LLM training across heterogeneous hardware. Real-time video analysis. Adversarial financial markets. These are hard problems, and that difficulty is a moat.

5. Is it not a ponzi? The most uncomfortable filter, and the most necessary. Are rewards tied to verifiable performance, or do they flow to whoever stakes the most, markets the loudest, or arrives earliest? A subnet where the primary “product” is the emission itself is a ponzi by any honest definition. Const’s filter demands that value creation precede value capture.

6. Is it AI-native? Could this subnet exist and thrive without AI at its foundation? If you could swap out the intelligence layer for a simple script or a manual process, and nothing meaningful would change, the subnet isn’t AI-native. It’s AI-adjacent at best, and probably doesn’t belong on Bittensor.
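Because each filter is a yes/no question applied in order, the sieve can be written down as a simple checklist. The sketch below is a minimal illustration, assuming a human reviewer supplies the answers: the class `SubnetAssessment`, the helpers `first_failure` and `score`, and the field names are hypothetical, not part of any Bittensor API, and nothing here measures a subnet automatically.

```python
from dataclasses import dataclass
from typing import Optional

# The six filters, in the order the framework applies them (hypothetical field names).
FILTER_ORDER = (
    "digital_commodity",
    "productive_miners",
    "intelligent",
    "hard",
    "not_a_ponzi",
    "ai_native",
)


@dataclass(frozen=True)
class SubnetAssessment:
    """One reviewer's yes/no answers to the six filters (judgment calls, not on-chain data)."""
    name: str
    digital_commodity: bool
    productive_miners: bool
    intelligent: bool
    hard: bool
    not_a_ponzi: bool
    ai_native: bool


def first_failure(subnet: SubnetAssessment) -> Optional[str]:
    """Run the sieve in order; return the first filter failed, or None if all six pass."""
    for filter_name in FILTER_ORDER:
        if not getattr(subnet, filter_name):
            return filter_name
    return None


def score(subnet: SubnetAssessment) -> str:
    """Count passed filters, formatted like the article's verdicts (e.g. '6/6')."""
    passed = sum(getattr(subnet, filter_name) for filter_name in FILTER_ORDER)
    return f"{passed}/{len(FILTER_ORDER)}"


# Chutes, using the answers given in the scorecard for SN64.
chutes = SubnetAssessment("Chutes (SN64)", True, True, True, True, True, True)
# A hypothetical subnet whose miners game the reward function.
gamed = SubnetAssessment("hypothetical gamed subnet", True, False, True, True, False, True)

print(chutes.name, score(chutes), first_failure(chutes))  # Chutes (SN64) 6/6 None
print(gamed.name, score(gamed), first_failure(gamed))     # hypothetical gamed subnet 4/6 productive_miners
```

Keeping both a first-failure check and a tally reflects the two ways the framework gets used: as a sieve, a subnet is out at its first "no"; as a scorecard, the count shows how close it came.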

The Top 10, Filter by Filter

Now the real test. Below is every current top-10 subnet by market cap, run through all six filters.

1. Chutes (SN64): Serverless AI Compute

Chutes sits at #1 for a reason this framework makes obvious: it produces something people will pay cash for.

- Digital commodity? Yes, on-demand inference API calls.

- Miners productive? Yes, running real GPU workloads for paying users.

- Intelligent? Yes, model execution and orchestration.

- Hard? Yes, dynamic scaling across heterogeneous hardware.

- Not a ponzi? Yes, paid per verifiable inference.

- AI-native? Yes.

Verdict: 6/6. As close to a pure digital commodity as Bittensor gets.

2. Templar (SN3): Decentralized LLM Pre-Training

Templar proved decentralized training at scale with Covenant-72B, the largest permissionless model trained on heterogeneous hardware using novel compression techniques.

- Digital commodity? Yes, trained model weights and checkpoints.

- Miners productive? Yes, contributing real compute to production-grade LLM training.

- Intelligent? Yes, full-scale LLM training.

- Hard? Extremely. Permissionless coordination, heterogeneous compute, novel compression.

- Not a ponzi? Yes, rewards based on measurable training contribution.

- AI-native? Yes.

Verdict: 6/6. The flagship proof that decentralized training works.

3. Targon (SN4): Confidential GPU Compute

Targon answers the enterprise question: “Yes, but can you do it without seeing my data?” That gets harder, not easier, when you add decentralization.

- Digital commodity? Yes; private, verifiable AI inference.

- Miners productive? Yes, running enterprise-grade workloads.

- Intelligent? Yes, multimodal processing under privacy constraints.

- Hard? Yes, combining privacy with performance is a genuine engineering challenge.

- Not a ponzi? Yes.

- AI-native? Yes.

Verdict: 6/6.

4. Affine (SN120): Reinforcement Learning & Coordination

Perhaps the most conceptually ambitious subnet in the top 10. Affine applies reinforcement learning to coordinate and improve models across the ecosystem — a meta-intelligence layer that makes other AI systems better.

- Digital commodity? Yes, improved models via RL optimization.

- Miners productive? Yes, competitive model submissions evaluated on performance.

- Intelligent? Very. It coordinates and improves other models, a higher-order form of intelligence.

- Hard? Yes, Pareto-frontier optimization across multiple environments.

- Not a ponzi? Yes, strictly performance-based.

- AI-native? Yes.

Verdict: 6/6.

5. Lium (SN51): Decentralized GPU Marketplace

Lium rounds out the compute layer as a GPU rental marketplace. The commodity this subnet produces is real and the trustless rental mechanics are non-trivial.

- Digital commodity? Yes, rentable compute hours.

- Miners productive? Yes, providing real GPUs to real workloads.

- Intelligent? Yes, it enables the execution of AI workflows.

- Hard? Yes, trustless rental plus live workload management.

- Not a ponzi? Yes.

- AI-native? Yes, core use case is AI compute.

Verdict: 6/6.

6. Vanta (SN8): AI Trading Signals

Vanta brings AI to financial markets, generating trading signals and running prop strategies. The “not a ponzi” filter is especially relevant here: the model is profit-driven, with buybacks tied to actual trading performance.

- Digital commodity? Yes, tradable alpha signals.

- Miners productive? Yes, evaluated on live trading performance.

- Intelligent? Yes, strategy generation and adaptation.

- Hard? Yes, real markets are the ultimate adversarial environment.

- Not a ponzi? Yes; profit-driven buybacks, not emission farming.

- AI-native? Yes.

Verdict: 6/6.

7. Ridges (SN62): Autonomous Coding Agents

Coding remains one of the most difficult domains for AI — high-dimensional, context-dependent, and adversarial in the sense that broken code fails loudly. If Ridges’ miners can produce code that ships, the commodity sells itself.

- Digital commodity? Yes; working code, bug fixes, tests.

- Miners productive? Yes, agents solving real software engineering tasks.

- Intelligent? Yes, software engineering intelligence.

- Hard? Extremely. Coding is notoriously difficult for AI.

- Not a ponzi? Yes.

- AI-native? Yes.

Verdict: 6/6. One of the strongest passes through the “hard” filter on this list.

8. Score (SN44): Computer Vision

A niche that looks small until you realize the data market is enormous. Real-time video annotation, object detection in dynamic environments, event understanding — with paying customers on the other end.

- Digital commodity? Yes, annotated video data and insights.

- Miners productive? Yes, real-time video analysis.

- Intelligent? Yes, object detection and event understanding.

- Hard? Yes; dynamic, unpredictable real-life environments.

- Not a ponzi? Yes, revenue from paying customers.

- AI-native? Yes.

Verdict: 6/6.

9. Hippius (SN75): Decentralized Cloud Storage

The odd one out on the “intelligent” filter, since storage isn’t inherently an AI task. But every training run needs data, every model needs persistence, and Hippius positions itself explicitly as Bittensor’s memory layer.

- Digital commodity? Yes; persistent, S3-compatible cloud storage.

- Miners productive? Yes, storing and serving real data.

- Intelligent? Yes, arguably: complex storage infrastructure built to serve AI workloads.

- Hard? Yes, decentralized durability and bandwidth at scale.

- Not a ponzi? Yes.

- AI-native? Yes, purpose-built as the memory layer for Bittensor AI.

Verdict: 6/6.

10. IOTA (SN9): Cooperative LLM Pre-Training

IOTA attacks the same monster as Templar — decentralized LLM pre-training — but takes a cooperative swarm approach. Same commodity, different architecture, same brutal difficulty.

- Digital commodity? Yes, trained model parameters.

- Miners productive? Yes, orchestrated swarm training.

- Intelligent? Yes, full LLM pre-training.

- Hard? Very. Heterogeneous, adversarial network coordination.

- Not a ponzi? Yes.

- AI-native? Yes.

Verdict: 6/6.

What the Scorecard Tells Us

A clean sweep across the top 10 is the headline, but the more interesting story is the pattern of what succeeded. Const’s filters make the point explicit: if you can’t point to a commodity, productive miners, real intelligence, genuine difficulty, clean incentives, and AI-native design, the market will eventually find you out.

The subnet leaderboard is dominated by infrastructure and tooling (compute, training, storage, inference), with a growing application layer (trading, coding, computer vision) building on top. This makes sense for where Bittensor is in its lifecycle. The network is still building out its foundational capabilities, and the market is rewarding the subnets that provide the picks and shovels.

If you’re building a subnet, mining, or allocating capital in the Bittensor ecosystem, these six questions are the fastest way to separate the real from the grift. And the current leaderboard is proof that the market agrees.
