The written internet is only half the story. Every day, founders explain strategy on podcasts, researchers debate methodology in recorded panels, operators break down real execution details in long-form interviews.

These conversations contain nuance, uncertainty, context, and insight that rarely make it into blog posts or press releases. Yet, for AI systems, most of this signal might as well not exist.

ReadyAI (Bittensor Subnet 33) is changing that.

The Market Signal Was Already There

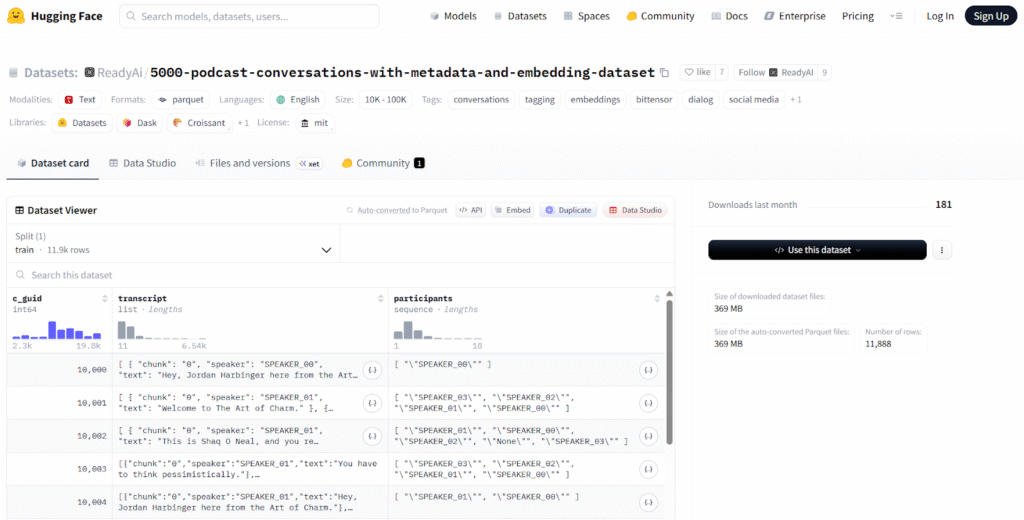

When ReadyAI’s podcast conversations dataset surpassed 300,000 downloads on HuggingFace, it confirmed something important: That the demand for structured conversation data is real and growing.

Developers, researchers, and AI builders are actively searching for high-signal spoken content that has been cleaned, structured, and made machine readable.

The issue was never “supply,” as the internet is flooded with spoken expertise, the real issue was structure.

Millions of hours of public conversations across podcasts, interviews, debates, and panels remain largely unindexed, untagged, and inaccessible to AI agents in a meaningful way.

Subnet 33 calls it the web’s dark matter. This week, that dark matter becomes visible!

Introducing Agentic Transcription

ReadyAI is launching its agentic transcription system, an autonomous pipeline designed to discover, retrieve, and process public conversations across the web at scale.

This is not a manual upload system, thus, it does not wait for someone to submit a file. It operates as an autonomous agent.

The pipeline:

a. Discovers relevant public conversations,

b. Retrieves audio content autonomously,

c. Transcribes and structures the material, and

d. Routes it directly into the subnet for enrichment.

The super-cycle is aimed at continuously expanding the corpus of structured spoken knowledge.

Category From Day One

Every conversation that enters the system is tagged to a category immediately, and this is kind of foundational. Category-specific tagging enables:

a. Targeted task routing for miners,

b. Domain-specific enrichment workflows,

c. Performance benchmarking by subject vertical, and

d. Customer-defined data extraction pipelines.

More importantly, it unlocks something strategic: customer requested categories.

If a research team needs every meaningful AI-focused podcast from the past 90 days transcribed, enriched, and delivered as structured JSON (JavaScript Object Notation), the system is architected to handle that request natively.

This moves Subnet 33 from passive indexing to programmable knowledge infrastructure.

Why Spoken Data Matters

Spoken conversation often contains:

a. Unfiltered strategic insight,

b. Nuanced technical explanations,

c. Early signal on emerging trends,

d. Disagreement and intellectual tension, and

e. Context that rarely appears in formal writing.

For AI systems tasked with forecasting, research synthesis, or reasoning over evolving domains, this layer of information is invaluable. Yet without structured transcription, tagging, and enrichment, it remains unusable at scale.

Subnet 33 turns it into a first-class data asset.

Infrastructure for the Next Generation of Agents

The implications extend beyond transcription. By continuously feeding structured conversational data into the subnet, ReadyAI enables:

a. Domain-specific knowledge training,

b. Agent retrieval pipelines built on spoken expertise,

c. Real-time monitoring of evolving industries, and

d. Search across conversations, not just documents.

This is foundational infrastructure for AI agents that need to reason about what experts say, not just what they publish.

Making the Invisible Visible

The internet’s written layer has been indexed, ranked, and monetized for decades. Its “spoken layer” has remained fragmented, ephemeral, and largely inaccessible to machines.

ReadyAI is closing that gap.

With agentic transcription and category-native routing, the subnet is not merely collecting conversations. It is organizing them into structured, programmable knowledge; and now, it’s not just what people write, it’s what they say.And in doing so, Subnet 33 is transforming the highest signal layer of the internet into something AI systems can finally understand, query, and build upon.

Enjoyed this article? Join our newsletter

Get the latest TAO & Bittensor news straight to your inbox.

We respect your privacy. Unsubscribe anytime.

Be the first to comment