AI (Artificial Intelligence) no longer suffers from a lack of compute; it suffers from a lack of trustworthy compute.

Over the past decade, infrastructure has scaled aggressively to meet the demands of large language models (LLMs), high-performance inference, and data-intensive training pipelines. Yet as workloads become more valuable (through embedding proprietary datasets, sensitive intellectual property, and competitive model architectures), the question has shifted from “can we run this?” to something far more fundamental: “where can we run this safely?”

Centralized cloud providers attempt to answer this with scale, convenience, and managed security. However, they remain structurally limited by their own design:

a. They concentrate trust in a single operator,

b. They abstract away hardware guarantees, limiting verifiability, and

c. They price premium security at a premium cost.

In a world where access to model weights or training data can define competitive advantage, these trade-offs are no longer acceptable.

This is the context in which the work by Manifold Labs (the founder of Targon) and Intel, a leading technology firm, becomes important: it redefines how trust is established in compute systems.

The Core Problem: Running Code on Machines You Don’t Control

Decentralized compute networks promise the ability to tap into a global pool of underutilized hardware, transforming idle machines into productive infrastructure. However, this model introduces a problem that cannot be ignored: If you don’t control the hardware, you cannot trust the execution.

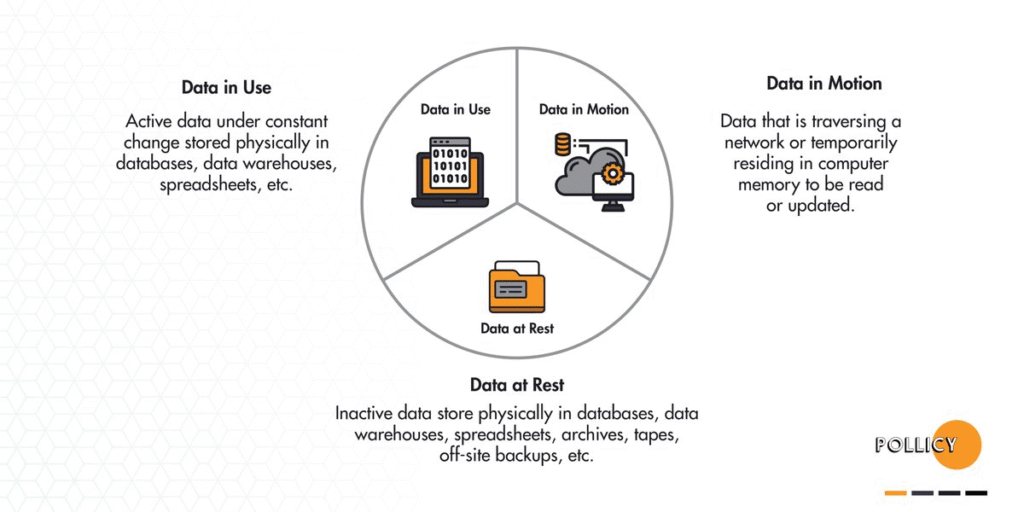

Traditional security models break down under this assumption:

a. Encryption protects data at rest, but not while it is being processed,

b. Secure transport protects data in motion, but not inside memory, and

c. Virtualization isolates workloads, but still trusts the host system.

At the moment of execution, sensitive data must be decrypted, and in conventional systems that is precisely when it becomes exposed.

This might seem like a minor gap, but it is not. It is the central unsolved problem of decentralized infrastructure.

From Trust-Based Systems to Proof-Based Systems

The key innovation introduced in “Decentralized Compute on Untrusted Hardware Using Intel TDX and Encrypted CVMs” is a shift away from trusting operators toward verifying execution cryptographically and at the hardware level.

This is enabled through a stack of confidential computing technologies:

a. Intel® Trust Domain Extensions (TDX): Establishes isolated, encrypted execution environments at the CPU level,

b. Intel® Trust Authority (ITA): Provides independent, verifiable attestation of execution environments, and

c. NVIDIA Confidential Computing: Extends the same guarantees to GPU workloads, which are critical for AI.

Together, these components enable something that has historically been out of reach: The ability to run sensitive workloads on untrusted hardware with enforceable guarantees.

Confidential Virtual Machines: The Primitive That Changes Everything

At the center of this architecture is the Confidential Virtual Machine (CVM), a fully encrypted, hardware-isolated execution environment designed specifically for adversarial conditions.

Unlike traditional virtual machines, CVMs do not assume a trusted host. Instead, they enforce trust through cryptography and hardware isolation.

The key properties of CVMs are:

a. End-to-End Encryption: Data remains protected at rest, in transit, and during execution,

b. Hardware-Enforced Isolation: Memory is encrypted and inaccessible to the host OS or hypervisor,

c. Attestation-Gated Access: Decryption keys are released only after verified execution integrity,

d. GPU-Level Confidentiality: AI workloads remain protected across both CPU and GPU, and

e. Non-Migratable Identity: Each VM is cryptographically bound to a specific hardware context.

The result is a system where even the machine owner cannot see or interfere with the workload, fundamentally redefining what it means to “run code” on someone else’s hardware.

Targon (Bittensor Subnet 4): Turning Theory Into a Live System

While confidential computing has existed in isolated forms, the contribution of Manifold Labs is in operationalizing it within a decentralized network.

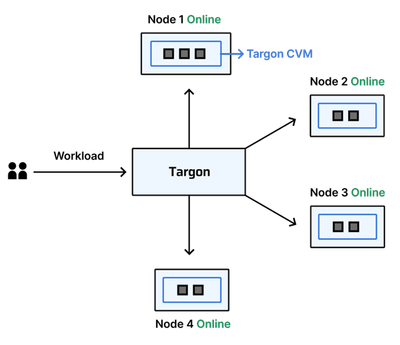

Targon, built on Bittensor Subnet 4, transforms these primitives into a functioning marketplace for secure compute.

The architecture is composed of five interlocking layers, each reinforcing both security and scalability.

1. Hardware Root of Trust

Everything begins with hardware that enforces confidentiality by design: Intel TDX-enabled CPUs and NVIDIA GPUs with Confidential Computing support.

This layer guarantees:

a. Encrypted memory at the silicon level,

b. Isolation from host interference, and

c. Protection against inspection or tampering.

Without this foundation, the rest of the system would collapse into trust assumptions.

2. Deterministic and Encrypted VM Provisioning

Each compute provider receives a freshly instantiated, uniquely encrypted CVM, created through a controlled pipeline:

a. A hardened base image (Ubuntu 24.04) is cloned,

b. A per-instance encryption key is generated,

c. The key is stored in Intel’s Key Broker Service (KBS), and

d. The VM is configured for attestation before launch.

Critically, the provider cannot access the disk contents, and the VM cannot boot without successful verification, ensuring that control over hardware does not translate into control over workloads.
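The attestation-gated key release described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the actual Targon pipeline or Intel's Key Broker Service API: `ToyKeyBroker`, `EXPECTED_MEASUREMENT`, and the VM identifier `cvm-001` are hypothetical names, and a real deployment would verify a hardware-signed quote rather than compare a bare hash.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass, field

# Hypothetical "golden" measurement of the hardened base image (assumption).
EXPECTED_MEASUREMENT = hashlib.sha256(b"hardened-base-image").hexdigest()


@dataclass
class ToyKeyBroker:
    """In-memory stand-in for a key broker; not Intel's actual service."""

    _keys: dict = field(default_factory=dict)

    def provision(self, vm_id: str) -> None:
        # Generate a fresh 256-bit disk encryption key for this VM instance.
        self._keys[vm_id] = secrets.token_bytes(32)

    def release_key(self, vm_id: str, reported_measurement: str) -> bytes:
        # Hand out the key only when the reported measurement matches the
        # expected one; otherwise the encrypted disk stays unreadable.
        if not hmac.compare_digest(reported_measurement, EXPECTED_MEASUREMENT):
            raise PermissionError("attestation failed: key withheld")
        return self._keys[vm_id]


broker = ToyKeyBroker()
broker.provision("cvm-001")
key = broker.release_key("cvm-001", EXPECTED_MEASUREMENT)
```

The point of the design is that the provider never holds the key: it lives with the broker, and the only path to it runs through a successful verification.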

3. Continuous Attestation: Trust That Doesn’t Expire

Unlike traditional systems where trust is assumed at launch, Targon enforces continuous verification. The attestation model combines:

a. Boot-Time Verification: Confirms that the VM matches its expected configuration,

b. Periodic Re-Attestation (~72 minutes): Ensures the system remains uncompromised over time,

c. Challenge–Response Validation: Prevents replay attacks using cryptographic nonces, and

d. Unified CPU + GPU Attestation: Verifies both compute layers in a single proof.

As a result, nodes that fail verification are immediately removed, decryption keys are never released to untrusted states, and the network maintains real-time awareness of integrity.

This transforms trust from a static assumption into a continuously enforced property.
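To make the challenge–response step concrete, here is a minimal sketch of nonce-based replay protection. It rests on loud assumptions: `HW_KEY`, `make_quote`, and `Verifier` are hypothetical, and an HMAC over a shared secret stands in for the hardware-signed quote. Real TDX quotes use asymmetric signatures verified against a certificate chain, which this example does not attempt to model.

```python
import hashlib
import hmac
import secrets

# Stand-in for a hardware-held signing key (assumption for illustration only).
HW_KEY = secrets.token_bytes(32)
EXPECTED_MEASUREMENT = b"expected-vm-measurement"


def make_quote(nonce: bytes, measurement: bytes) -> bytes:
    """Node side: bind the measurement to the verifier's fresh nonce."""
    return hmac.new(HW_KEY, nonce + measurement, hashlib.sha256).digest()


class Verifier:
    def __init__(self):
        self._outstanding = set()  # nonces issued but not yet answered

    def challenge(self) -> bytes:
        nonce = secrets.token_bytes(16)
        self._outstanding.add(nonce)
        return nonce

    def verify(self, nonce: bytes, quote: bytes) -> bool:
        # Each nonce is accepted once, so a replayed quote is rejected.
        if nonce not in self._outstanding:
            return False
        self._outstanding.discard(nonce)
        expected = make_quote(nonce, EXPECTED_MEASUREMENT)
        return hmac.compare_digest(quote, expected)


verifier = Verifier()
nonce = verifier.challenge()
quote = make_quote(nonce, EXPECTED_MEASUREMENT)
first = verifier.verify(nonce, quote)   # fresh response to a fresh challenge
replay = verifier.verify(nonce, quote)  # identical quote submitted again
```

Because the quote covers the nonce, a response captured during one attestation round is useless in the next one, which is what makes periodic re-attestation meaningful.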

4. Incentives Aligned With Security (Bittensor Subnet 4)

The system does not rely on trust; it economically enforces it. Within Bittensor’s framework:

a. Validators independently verify node integrity,

b. Contributions are measured relative to total compute, and

c. Rewards are distributed via stake-weighted consensus.

This creates a system where honest behavior is rewarded, malicious behavior is unprofitable, and verification is decentralized and redundant.

Security is no longer a cost center; it becomes a source of economic alignment.
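As a rough illustration of stake-weighted scoring, the sketch below combines per-node scores from several validators into normalized reward shares. It is a simplified weighted average, not Bittensor's actual consensus mechanism; the function name, validator names, stakes, and scores are all hypothetical.

```python
def stake_weighted_rewards(stakes, scores):
    """Combine validator scores into normalized per-node reward shares.

    stakes: {validator: stake}
    scores: {validator: {node: score}}
    """
    total_stake = sum(stakes.values())
    combined = {}
    for validator, node_scores in scores.items():
        weight = stakes[validator] / total_stake
        for node, score in node_scores.items():
            combined[node] = combined.get(node, 0.0) + weight * score
    total = sum(combined.values())
    if total == 0:
        return {node: 0.0 for node in combined}
    # Normalize so that each node's reward is relative to the network total.
    return {node: value / total for node, value in combined.items()}


stakes = {"val_a": 60.0, "val_b": 40.0}
scores = {
    "val_a": {"node_1": 1.0, "node_2": 0.0},  # val_a saw node_2 fail attestation
    "val_b": {"node_1": 1.0, "node_2": 0.5},
}
rewards = stake_weighted_rewards(stakes, scores)
```

In this toy setup, a node that the higher-stake validator scores at zero ends up with a much smaller share, which is the sense in which failing verification becomes unprofitable.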

5. Decentralized Orchestration at Scale

On top of this secure foundation, Targon deploys a Kubernetes-based orchestration layer, enabling:

a. Distributed scheduling of workloads,

b. Automatic failover and recovery, and

c. Efficient utilization of heterogeneous hardware.

Key guarantees of this layer include:

a. Workloads run only on attested nodes,

b. Compromised nodes are immediately excluded, and

c. Jobs are seamlessly rescheduled when failures occur.

This allows the system to function as a cohesive compute cloud, despite being composed of untrusted machines.
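The scheduling guarantees above can be illustrated with a small in-memory model. This is a sketch under assumptions, not Targon's orchestration code or the Kubernetes API: the `Node` fields, the greedy placement rule, and the job names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    attested: bool
    free_gpus: int


def schedule(jobs, nodes):
    """Greedily place each job on an attested node with enough free GPUs."""
    # Only attested nodes are ever considered as placement targets.
    free = {n.name: n.free_gpus for n in nodes if n.attested}
    placement = {}
    for job, need in jobs.items():
        fits = [name for name, gpus in free.items() if gpus >= need]
        if not fits:
            placement[job] = None  # stays pending until capacity appears
            continue
        chosen = max(fits, key=lambda name: free[name])
        free[chosen] -= need
        placement[job] = chosen
    return placement


nodes = [Node("a", True, 4), Node("b", True, 6), Node("c", False, 8)]
jobs = {"train": 4, "infer": 2}
before = schedule(jobs, nodes)

# Node "a" fails re-attestation: it is excluded and its job is rescheduled.
nodes[0].attested = False
after = schedule(jobs, nodes)
```

Node `c` has the most free GPUs but is never chosen because it is not attested, and the job that was on `a` moves to `b` once `a` drops out.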

Security by Design: Operating Under Worst-Case Assumptions

The architecture assumes an adversary with maximum capabilities, including:

a. Full control over hardware and software stack,

b. Ability to intercept, replay, or manipulate data, and

c. Physical access to machines.

Yet, even under these conditions, the system guarantees:

a. Confidentiality of data, models, and execution,

b. Integrity of runtime behavior,

c. Authenticity of hardware environments, and

d. Non-reusability of encrypted workloads.

This is achieved not by limiting attackers, but by eliminating their ability to observe or interfere.

Why This Matters: The Emergence of a New Compute Layer

What Manifold Labs and Intel are working on is not just a technical architecture; it is a new market primitive.

By combining hardware-rooted security, cryptographic verification, decentralized coordination, and incentive alignment, the system enables a global, permissionless market for secure compute.

This has immediate implications:

a. Startups gain access to enterprise-grade infrastructure,

b. Researchers can run sensitive workloads without vendor lock-in,

c. Idle hardware becomes economically productive, and

d. Security becomes verifiable rather than assumed.

Compute Is Becoming a Commodity — Trust Is Becoming the Product

The most important shift described in this paper is not about virtualization, encryption, or orchestration in isolation. It is about what happens when these pieces come together under a single principle: trust should not be granted; it should be proven.

As AI continues to scale, the value of compute will increasingly depend not on raw performance, but on the guarantees surrounding its use. Systems like Targon, built on Bittensor and designed for confidential computing, suggest a future where:

a. Infrastructure is open,

b. Execution is verifiable, and

c. Trust is enforced by design.

And in that future, the question will no longer be “who owns the machine?” It will be: “Can the machine prove it deserves to run my code?”
