For all the progress in AI coding, something still does not add up. Models are getting larger, benchmarks keep improving, new agent frameworks are released almost weekly, and yet, when tasks become truly complex, when they require sustained reasoning across files, systems, and constraints, performance drops sharply.

This happens not because models cannot code, but because they were never fully trained to “think.”

Where the Breakdown Actually Happens

Recent research is starting to converge on a clear insight: coding performance has never been just about generating syntax. It is about reasoning before generation.

PlanSearch, featured at ICLR 2025, showed that when models reason in natural language before writing code, both correctness and diversity improve significantly.

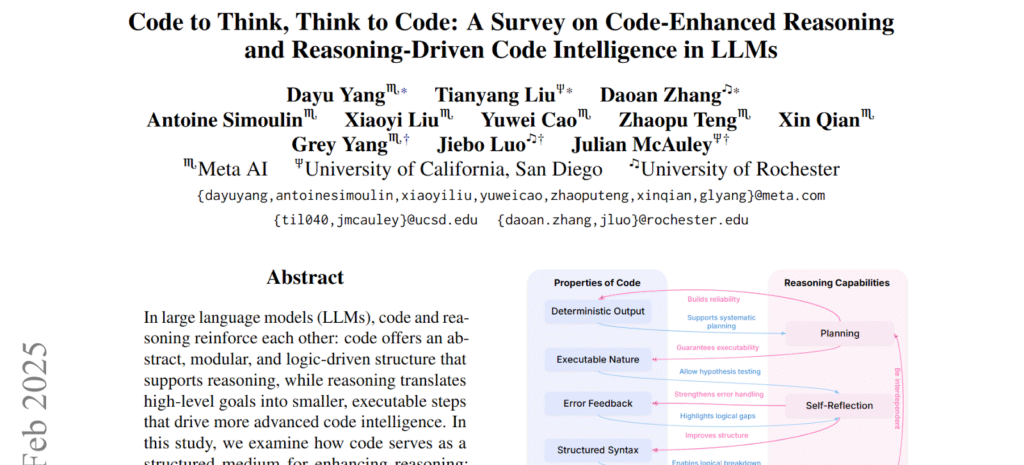
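To make the plan-first idea concrete, here is a minimal sketch of a loop that asks for a natural-language plan before asking for code. It is a simplified illustration, not the PlanSearch algorithm itself. The names `plan_then_code`, `Generate`, and `fake_generate` are hypothetical, and `fake_generate` is only a stand-in for a real model call so the example runs offline.

```python
from typing import Callable, Dict, List

# Hypothetical type: any function that maps a prompt string to model text.
Generate = Callable[[str], str]


def plan_then_code(problem: str, generate: Generate, n_plans: int = 3) -> List[Dict[str, str]]:
    """Request several plain-English plans, then one program per plan."""
    candidates = []
    for i in range(n_plans):
        # Step 1: reason in natural language, with code explicitly disallowed.
        plan_prompt = (
            f"Problem:\n{problem}\n\n"
            f"Describe approach #{i + 1} in plain English. Do not write code."
        )
        plan = generate(plan_prompt)
        # Step 2: generate code conditioned on the plan, not just the problem.
        code_prompt = (
            f"Problem:\n{problem}\n\nPlan:\n{plan}\n\n"
            "Write Python code that follows this plan."
        )
        candidates.append({"plan": plan, "code": generate(code_prompt)})
    return candidates


def fake_generate(prompt: str) -> str:
    """Stand-in for a model call (assumption: replace with a real client)."""
    if "Do not write code" in prompt:
        return "Sort the list, then scan once."
    return "def solve(xs):\n    return sorted(xs)"


candidates = plan_then_code("Return the sorted list.", fake_generate, n_plans=2)
```

Each candidate keeps its plan next to its code, so the reasoning behind every program stays inspectable when the candidates are later filtered or tested.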

Around the same time, Yang et al. in “Code to Think, Think to Code” demonstrated something deeper: reasoning and coding are not separate capabilities; they reinforce each other.

This simply means that better reasoning leads to better code, and better coding data strengthens reasoning.

The relationship is cyclical, but here is the problem!

The Real Bottleneck is Not Compute, It is Data

Despite advances in model architecture, the field is running into a much more fundamental constraint: high-quality training data.

Multiple studies now point to the same issue:

a. The “Immersion in the GitHub Universe” paper highlights that agent progress is fundamentally limited by the scarcity of high-quality data.

b. SWE-rebench notes that most datasets are either one-shot code generation tasks or small curated samples.

c. Research on synthetic datasets identifies a persistent gap in data that combines diversity, reasoning, and functional correctness at scale.

This is not a marginal limitation; it is structural.

Why Current Benchmarks Expose the Gap

The weakness becomes obvious on harder evaluations.

On SWE-bench Verified, GPT-5 with OpenHands reaches around 65%, a strong result on the surface.

But on SWE-EVO, which tests sustained reasoning across multiple files and evolving constraints, performance drops to roughly 21%.

That gap of roughly 44 percentage points is not random; it reflects something specific.

These systems struggle when they need to:

a. Understand why a system is designed a certain way,

b. Track decisions across multiple components, and

c. Adapt reasoning as context evolves.

In other words, they struggle when coding becomes thinking.

So, Where Does Reasoning Data Come From?

If reasoning is the missing ingredient, the next question becomes unavoidable. Where do you actually find reasoning-rich data at scale? Not polished tutorials, not isolated code snippets, and definitely not synthetic prompts.

The real answer is far more human: it lives in conversations.

The Untapped Dataset Hiding in Plain Sight

Some of the richest reasoning data already exists; it just has not been treated as training data.

Think about how engineers actually work through problems:

a. Long-form podcast discussions

b. Architecture reviews

c. Technical debates between developers

d. Deep dives into system design decisions

This is where reasoning happens in its natural form. Engineers explain tradeoffs, question assumptions, and refine ideas in real time.

It is messy, contextual, and deeply informative, and until now, it has been largely unusable at scale.

Turning Conversations Into Training Infrastructure

This is the gap ReadyAI, Bittensor Subnet 33, is on a mission to fill. Rather than focusing purely on model outputs, ReadyAI focuses on extracting the reasoning layer that precedes them.

The latest release introduces specialized enrichment logic that can:

a. Identify architectural reasoning within conversations

b. Extract contextual decision-making patterns

c. Preserve multi-step thought processes

d. Structure this data into training-ready formats
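As a rough illustration of what a training-ready record of conversational reasoning might look like, the sketch below keeps the ordered steps, the tradeoffs, and the final decision together in one JSON line. This is not ReadyAI's actual schema or pipeline. The class names, field names, and the sample conversation are all hypothetical and chosen only to mirror the four capabilities listed above.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ReasoningStep:
    speaker: str
    claim: str      # what was proposed or observed
    rationale: str  # why, as stated in the conversation


@dataclass
class ReasoningRecord:
    source: str    # e.g. "podcast" or "architecture review"
    decision: str  # the outcome the conversation converged on
    steps: List[ReasoningStep] = field(default_factory=list)
    tradeoffs: List[str] = field(default_factory=list)

    def to_jsonl(self) -> str:
        """Serialize as a single JSON line, preserving step order."""
        return json.dumps(asdict(self), ensure_ascii=False)


# Hypothetical example data, invented purely for illustration.
record = ReasoningRecord(
    source="architecture review",
    decision="Put a message queue between the two services",
    steps=[
        ReasoningStep("A", "Direct calls couple the services", "An outage in one blocks the other"),
        ReasoningStep("B", "A queue absorbs bursts", "The consumer can process at its own pace"),
    ],
    tradeoffs=["Extra operational overhead", "Delivery becomes asynchronous"],
)
line = record.to_jsonl()
```

Storing the steps as an ordered list, rather than a single summary string, is one way to preserve the multi-step structure of the original discussion.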

The goal is not just to collect more data; it is to collect the right kind of data.

Why This Changes the Trajectory of Coding Agents

This approach reframes how progress in AI coding happens. Instead of relying on larger models, more compute, and more synthetic data, it focuses on improving the quality of the cognitive signal in training datasets.

This has direct implications:

a. Better performance on multi-file and long-horizon tasks

b. Stronger generalization across unfamiliar systems

c. Improved ability to reason through constraints and tradeoffs

In short, it targets the exact failure modes current benchmarks expose.

Early Signals, and What Comes Next

The early results from Subnet 33 are already promising.

Initial enrichment pipelines are successfully extracting structured reasoning from conversational data, opening up a new category of training input that did not previously exist in usable form.

Benchmark improvements, particularly on more difficult coding evaluations, are expected to follow, and if they do, the implication is clear.

The next leap in coding agents will not come from writing more code; it will come from teaching models how to think before they do.

From Code Generation to Thought Generation

For years, the focus has been on making models better programmers, but programming was never just about code.

It has always been about reasoning: understanding systems, weighing tradeoffs, and adapting to complexity.

What Subnet 33 introduces is a shift in perspective from generating code to generating thought. In that shift lies the missing layer that could define the next generation of AI coding systems.
