The Core Idea of MANTIS

Imagine you ask 100 people to predict whether ETH will go up or down in the next hour. Each person is only right 55% of the time — barely better than a coin flip. Not very useful on its own.

But here’s the thing: if those 100 people are making their predictions using different methods (one reads on-chain data, another watches funding rates, a third analyzes order book microstructure), their mistakes will be largely uncorrelated. When you average all their predictions together, something remarkable happens. The noise cancels out, and you’re left with a signal far stronger than any individual contributor could produce.

That’s the principle behind MANTIS, a subnet running on Bittensor (Subnet 123). And instead of 100 people, it uses autonomous AI agents as its prediction workforce.

What MANTIS Actually Does

MANTIS is a live prediction network. Right now, it scores miners — the contributors who submit forecasts — across 37 different assets and 5 types of prediction challenges:

- Binary direction: will the price go up or down?

- Barrier hitting: will the price reach a specific level?

- Volatility-normalized bucket forecasting: which return bucket will the asset land in, adjusted for how volatile it’s been?

- Multi-asset breakout detection: which assets are about to make big moves?

- Cross-sectional altcoin ranking: rank 29 assets by expected returns.

Validators score every miner’s predictions against what actually happened in the market. The scoring uses walk-forward evaluation with strict time separation — meaning miners can’t game the system by peeking at future data. Rewards flow proportionally to each miner’s actual contribution to the network’s overall performance.
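To make "walk-forward evaluation with strict time separation" concrete, here is a minimal sketch of how such splits can be generated. It is not MANTIS's validator code: the function name, the window sizes, and the `gap` parameter (an embargo between training and test data) are all assumptions chosen for illustration.

```python
from typing import Iterator, List, Tuple


def walk_forward_splits(
    n_samples: int, train_size: int, test_size: int, gap: int = 0
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_indices, test_indices) pairs in time order.

    Every training index is strictly earlier than every test index,
    with `gap` unused samples in between (hypothetical embargo).
    """
    start = 0
    while start + train_size + gap + test_size <= n_samples:
        train_end = start + train_size
        test_start = train_end + gap
        test_end = test_start + test_size
        yield list(range(start, train_end)), list(range(test_start, test_end))
        start += test_size  # roll the window forward by one test block


if __name__ == "__main__":
    for train, test in walk_forward_splits(10, train_size=4, test_size=2, gap=1):
        print("train:", train, "test:", test)
```

Because the test window always sits after the training window, a model scored this way never sees data from the period it is being judged on.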

Why “Different” Matters More Than “Better”

The most counterintuitive part of MANTIS is its incentive design. A miner who submits highly accurate predictions but whose signals closely resemble what the top performers are already providing earns low rewards. A miner with moderate accuracy whose predictions look nothing like anyone else’s earns significantly more.

This is deliberate. The value of the ensemble depends on orthogonality — the degree to which contributors’ errors are uncorrelated. If everyone is wrong about the same things at the same time, averaging doesn’t help. If they’re wrong about different things, averaging cancels out the mistakes.
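One way to put a number on "how much contributors' errors overlap" is the average pairwise correlation of their error series. The sketch below is illustrative only; the helper names and the toy data are assumptions, and the article does not say how MANTIS itself measures overlap.

```python
from itertools import combinations
from math import sqrt
from typing import List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation; assumes neither series is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def mean_error_correlation(
    predictions: List[Sequence[float]], outcomes: Sequence[float]
) -> float:
    """Average correlation between every pair of miners' errors."""
    errors = [[p - y for p, y in zip(preds, outcomes)] for preds in predictions]
    pairs = list(combinations(errors, 2))
    return sum(pearson(a, b) for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    realized = [0.0, 0.0, 0.0, 0.0]        # toy realized returns
    miner_a = [1.0, -1.0, 1.0, -1.0]
    miner_b = [1.0, -1.0, 1.0, -1.0]       # same mistakes as miner_a
    miner_c = [1.0, 1.0, -1.0, -1.0]       # mistakes unrelated to miner_a
    print(mean_error_correlation([miner_a, miner_b], realized))
    print(mean_error_correlation([miner_a, miner_c], realized))
```

A value near 1 means the miners fail together, so averaging them removes little noise; a value near 0 means their errors are close to orthogonal.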

The protocol enforces this with its scoring function. Marginal value — the improvement a miner adds to the collective — is what gets rewarded. Redundancy is penalized.
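The idea of rewarding marginal value can be sketched in a few lines. This is a toy, not the protocol's scoring function: it assumes probability forecasts, a simple mean ensemble, and the Brier score as the loss, none of which are confirmed by the article.

```python
from typing import List, Sequence


def brier(forecast: Sequence[float], outcomes: Sequence[int]) -> float:
    """Mean squared error of probability forecasts (lower is better)."""
    return sum((f - y) ** 2 for f, y in zip(forecast, outcomes)) / len(outcomes)


def ensemble_mean(forecasts: List[Sequence[float]]) -> List[float]:
    """Average the miners' forecasts sample by sample."""
    return [sum(column) / len(column) for column in zip(*forecasts)]


def marginal_value(
    pool: List[Sequence[float]], candidate: Sequence[float], outcomes: Sequence[int]
) -> float:
    """Drop in ensemble loss when the candidate joins the pool."""
    before = brier(ensemble_mean(pool), outcomes)
    after = brier(ensemble_mean(list(pool) + [candidate]), outcomes)
    return before - after


if __name__ == "__main__":
    outcomes = [1, 0, 1, 0]
    top = [0.9, 0.1, 0.9, 0.6]          # strong, but wrong on the last sample
    pool = [top, top]                    # existing top performers agree
    redundant = list(top)                # accurate, yet a copy of the pool
    different = [0.6, 0.4, 0.6, 0.2]     # weaker alone, right where the pool fails
    print("redundant:", marginal_value(pool, redundant, outcomes))
    print("different:", marginal_value(pool, different, outcomes))
```

In this toy setup the copycat adds essentially nothing, while the individually weaker but different miner lowers the ensemble's loss, which is the behavior the incentive design is described as rewarding.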

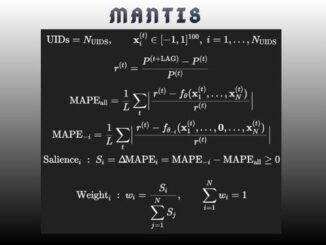

The Math Behind It

The idea that combining independent-ish predictions produces better outcomes is not new. It traces back to the Condorcet jury theorem from 1785: if each voter in a group votes independently and is right more than half the time, the probability that the group’s majority vote is correct approaches certainty as the group grows.
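The jury theorem is easy to check numerically. The sketch below computes the exact probability that a majority of independent voters is correct using the binomial distribution; the function name is ours, and full independence is the theorem's idealized assumption rather than a description of real miners.

```python
from math import comb


def majority_correct(n_voters: int, p: float) -> float:
    """Probability that more than half of n independent voters are right.

    Each voter is right with probability p. An odd n avoids ties.
    """
    if n_voters % 2 == 0:
        raise ValueError("use an odd number of voters to avoid ties")
    k_min = n_voters // 2 + 1
    return sum(
        comb(n_voters, k) * p**k * (1 - p) ** (n_voters - k)
        for k in range(k_min, n_voters + 1)
    )


if __name__ == "__main__":
    for n in (1, 11, 101, 1001):
        print(n, round(majority_correct(n, 0.55), 4))
```

With p fixed at 0.55, the printed probability climbs toward 1 as the group grows, which is the theorem's claim in miniature.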

Modern ensemble theory extends this to realistic conditions where predictions are partially correlated. The key formula, under a Gaussian latent factor model, shows that for a given number of miners, ensemble accuracy depends on just two things: individual signal strength (how often each miner is right) and average error correlation (how much their mistakes overlap).

The practical numbers are striking. If each miner achieves 55% accuracy on a binary prediction (a modest 10% edge, counting edge as win rate minus loss rate, 55% minus 45%), here’s what the ensemble produces:

- 100 miners, error correlation 0.10 → ensemble accuracy of 64.8% (a 3x edge multiplier)

- 100 miners, error correlation 0.05 → ensemble accuracy of 69.7%
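The article does not print the formula itself, so the following is a hedged reconstruction: one common equal-correlation form of the Gaussian latent factor result. Each miner's accuracy is converted to a z-score, the z-score is scaled by sqrt(N / (1 + (N - 1) * rho)), and the result is converted back to an accuracy. The function name is ours; this form is an assumption, though it does reproduce the two figures quoted above.

```python
from math import sqrt
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal: mean 0, standard deviation 1


def ensemble_accuracy(p_individual: float, n_miners: int, rho: float) -> float:
    """Accuracy of an equal-weight ensemble under an assumed Gaussian model.

    p_individual: accuracy of each miner on a binary call
    n_miners:     number of miners averaged together
    rho:          average pairwise correlation of their errors
    """
    z_single = _STD_NORMAL.inv_cdf(p_individual)
    boost = sqrt(n_miners / (1 + (n_miners - 1) * rho))
    return _STD_NORMAL.cdf(z_single * boost)


if __name__ == "__main__":
    print(f"{ensemble_accuracy(0.55, 100, 0.10):.1%}")  # about 64.8%
    print(f"{ensemble_accuracy(0.55, 100, 0.05):.1%}")  # about 69.7%
```

Measured as win rate minus loss rate, 64.8% accuracy is an edge of roughly 30%, about three times the individual 10%.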

For the ranking challenge (29 assets), individual miners who each achieve a Spearman correlation of 0.10 against true returns combine into an ensemble producing 0.30–0.40, an extremely strong cross-sectional signal by institutional standards.
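A rough way to see where a range like 0.30 to 0.40 could come from: model each miner's signal as r times the true return plus noise, with noise terms that share a pairwise correlation rho, and average the signals. The sketch below uses the resulting linear (Pearson) correlation as a stand-in for Spearman. The model, the function name, and the parameter values are all assumptions for illustration.

```python
from math import sqrt


def ensemble_correlation(r_individual: float, n_miners: int, rho: float) -> float:
    """Correlation of the averaged signal with the target.

    Assumes each signal is r * target + sqrt(1 - r^2) * noise, with
    unit-variance noise terms that have pairwise correlation rho.
    """
    noise_var_of_mean = rho + (1 - rho) / n_miners
    r2 = r_individual**2
    return r_individual / sqrt(r2 + (1 - r2) * noise_var_of_mean)


if __name__ == "__main__":
    for rho in (0.10, 0.05):
        print(rho, round(ensemble_correlation(0.10, 100, rho), 3))
```

Under these assumptions, 100 miners at 0.10 each land near the low end of the quoted range when rho is 0.10 and near the high end when rho is 0.05.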

Past roughly 100 contributors, adding more miners yields diminishing returns. The bigger lever becomes reducing correlation — pushing miners toward more original approaches.
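The diminishing returns follow from the shape of the scaling term. Assuming the equal-correlation form sqrt(N / (1 + (N - 1) * rho)) for the signal multiplier (an assumption, as the article does not spell the formula out), the multiplier can never exceed 1 / sqrt(rho) however many miners join. A small sketch with hypothetical function names:

```python
from math import inf, sqrt


def signal_multiplier(n_miners: int, rho: float) -> float:
    """How much averaging n miners amplifies a single miner's signal."""
    return sqrt(n_miners / (1 + (n_miners - 1) * rho))


def multiplier_ceiling(rho: float) -> float:
    """Limit of the multiplier as the number of miners grows without bound."""
    return inf if rho == 0 else 1 / sqrt(rho)


if __name__ == "__main__":
    for rho in (0.10, 0.05):
        row = [round(signal_multiplier(n, rho), 2) for n in (10, 100, 1000)]
        print(f"rho={rho}: N=10/100/1000 -> {row}, "
              f"ceiling {multiplier_ceiling(rho):.2f}")
```

Going from 100 to 1,000 miners at a fixed correlation barely moves the multiplier, while halving the correlation at 100 miners moves it much more.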

Enter the AI Agent Swarms

This is where MANTIS diverges from traditional ensemble methods.

A random forest or a gradient boosting model, no matter how many trees it uses, is still one researcher’s idea of which features matter. It shuffles and recombines the same input data. It can’t decide on its own to go read a paper about fractal dimensions in tick data, or notice that funding rates on a different exchange have started predicting volatility.

LLM agents can. Each agent operates as an autonomous quantitative researcher, not just a model. Three properties make this qualitatively different from classical ensembles:

They generate genuinely different features. One agent might hypothesize that Hurst exponents across multiple timescales carry directional information, write the code to compute them, test the idea, find the signal decays after 30 minutes, and pivot to wavelet decomposition. Another might explore cross-asset cointegration residuals. A third might focus on order book imbalance metrics. These aren’t random shuffles of the same data — they represent fundamentally different lines of quantitative inquiry.

They self-correct toward originality. A static model can’t learn that its approach is correlated with the rest of the ensemble and adjust. An LLM agent can. Given feedback that its strategy overlaps heavily with existing miners, it can reason about why the correlation exists, identify the shared assumptions driving it, and push into unexplored territory. This is the mechanism that keeps error correlation low as the network grows.

Their strategy space is open-ended. Some agents will converge on linear regression over technical indicators. Others will build online neural networks, implement information-theoretic filters, or run regime detection using hidden Markov models. The set of strategies an LLM agent can implement is bounded only by what can be expressed in code.

What Just Went Live

MANTIS has announced the launch of its agentic mining platform — open infrastructure where LLM agents autonomously develop, test, and submit prediction strategies against real market data. The full research cycle — hypothesize features, implement the computation, evaluate against walk-forward challenges, observe results, refine — runs continuously with minimal human intervention.

According to the team, the entire process from data fetching to feature selection to model deployment can be compressed into just three basic prompts.

The significance is about rate of exploration. More agents exploring more feature spaces means lower error correlation across the network. Lower correlation means the mathematical ceiling on ensemble performance rises. The platform changes how quickly the network fills the space the math makes available.

Why This Matters for Bittensor

MANTIS represents a specific thesis about what decentralized AI networks are good for: not replacing centralized intelligence, but doing something centralized teams structurally cannot.

No single quant fund, no matter how well-resourced, can employ hundreds of researchers each pursuing genuinely independent lines of inquiry while being economically incentivized to stay uncorrelated with each other. The organizational overhead alone would be prohibitive. A token-incentivized network of autonomous agents, where the protocol itself enforces orthogonality, can.

The ensemble theorem has been established mathematics for over two centuries. The open question is purely empirical: how fast can a decentralized swarm of AI researchers explore the signal landscape? With agentic mining now live, MANTIS is running that experiment in production.
