At first glance, launching a hardware challenge may appear straightforward, especially in a decentralized system where miners are expected to do the heavy lifting. However, in the context of ChipForge (Bittensor Subnet 84), the difficulty lies not in defining the problem but in proving that the solutions can be evaluated correctly.

This distinction is not subtle, because in hardware, validation is the work. Without a reliable way to measure correctness, performance, and efficiency, a challenge does not produce value; it produces noise.

The reason ChipForge takes time to launch new challenges is therefore not a matter of delay, but of standard. A challenge is only ready when the system evaluating it is capable of handling real-world complexity.

What ChipForge Actually Solves Before Launching a Challenge

Every hardware design problem on ChipForge begins with two fundamental questions:

a. Is the problem worth solving?

b. Can the solutions be validated properly?

The first question is usually straightforward: if a problem contributes to the broader ecosystem, advances open hardware, and has practical relevance, it qualifies as a meaningful challenge.

The second question is where the real work begins, because validation defines whether the output of the system has any credibility at all.

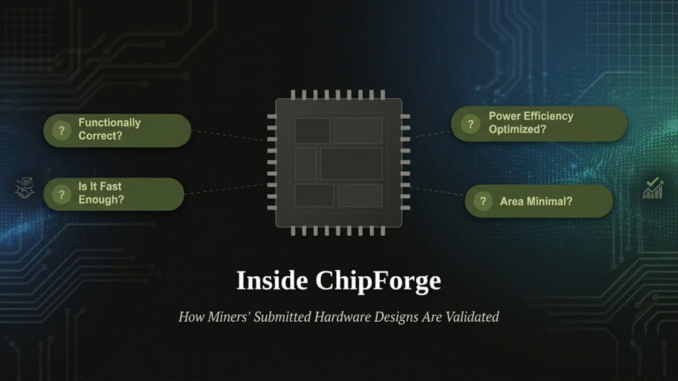

Before any challenge goes live, the validation pipeline must be able to answer critical questions with confidence:

a. Is the design functionally correct?

b. How much silicon area does it consume?

c. What is its power efficiency?

d. How does it perform under realistic workloads?

e. Has it been tested thoroughly enough to trust?

Without clear answers to these questions, there is no basis for scoring, no foundation for incentives, and no reason to trust the results.
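To make the link between these questions and scoring concrete, here is a minimal sketch of how a validation report could feed a score. It is purely illustrative and is not ChipForge's actual scoring formula: the `Report` fields, the `min_coverage` threshold, and the geometric-mean combination are all assumptions chosen for the example. The one idea taken from the text is that correctness and test thoroughness act as gates, so a design that cannot be trusted earns nothing regardless of its area, power, or speed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    """Hypothetical validation report for one submitted design."""
    functionally_correct: bool
    coverage: float     # fraction of coverage goals hit, 0.0 to 1.0
    area_um2: float     # silicon area
    power_mw: float     # power under the benchmark workload
    throughput: float   # work completed per second on the workload


def score(report: Report, baseline: Report, min_coverage: float = 0.95) -> float:
    """Return 0.0 unless the design is correct and well covered; otherwise
    the geometric mean of its improvement ratios over a baseline design."""
    if not report.functionally_correct or report.coverage < min_coverage:
        return 0.0
    ratios = (
        baseline.area_um2 / report.area_um2,      # smaller area is better
        baseline.power_mw / report.power_mw,      # lower power is better
        report.throughput / baseline.throughput,  # higher throughput is better
    )
    product = 1.0
    for ratio in ratios:
        product *= ratio
    return product ** (1.0 / len(ratios))


if __name__ == "__main__":
    baseline = Report(True, 1.0, area_um2=100.0, power_mw=10.0, throughput=1.0)
    faster = Report(True, 0.98, area_um2=50.0, power_mw=10.0, throughput=2.0)
    broken = Report(False, 1.0, area_um2=10.0, power_mw=1.0, throughput=9.0)
    print(score(faster, baseline), score(broken, baseline))
```

In this sketch the `broken` design scores zero even though its raw numbers look best, which is the behavior a scoring system needs if incentives are to reward working hardware.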

Why Hardware Validation is Fundamentally Hard

The central challenge in chip design is not building functionality, but verifying it under all relevant conditions.

In semiconductor engineering, functional verification is widely recognized as the primary bottleneck, often cited as consuming up to 70% of total design effort, a share that tends to grow as system complexity increases.

This is the part that is often underestimated.

Unlike software systems, where testing can be iterative and patches can be deployed after release, hardware must be correct before fabrication. Once a chip is manufactured, errors cannot be patched, and the cost of mistakes increases dramatically depending on when they are discovered.

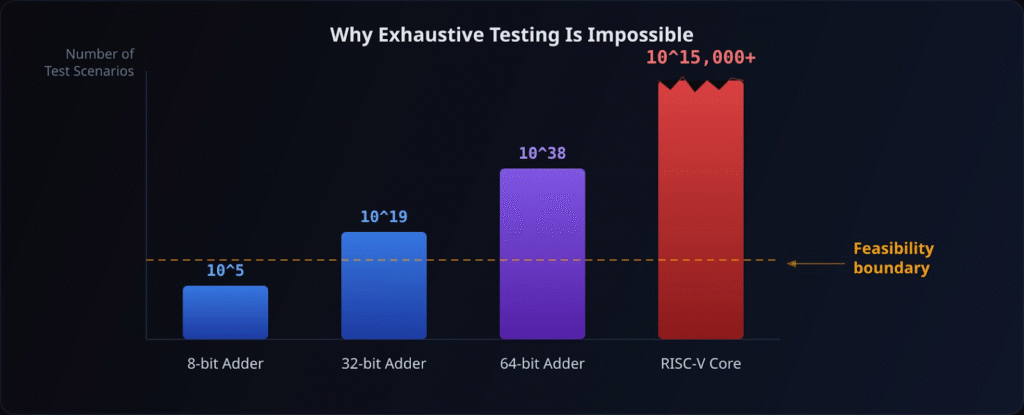

You Cannot Brute-Force Hardware

The scale of the problem becomes clear when considering even the simplest components. A standard 64-bit adder takes two 64-bit operands, so it has 2¹²⁸ possible input combinations, or approximately 3.4 × 10³⁸ cases.

Even under extremely optimistic assumptions, where a high-end GPU processes a trillion cases per second, exhaustive testing would take on the order of 10¹⁹ years. Even at a hundred trillion cases per second, it would still take roughly 10¹⁷ years.

This is not a limitation of tooling, but a limitation of mathematics, and this is only for a basic arithmetic unit.
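The arithmetic behind this estimate is easy to reproduce. The sketch below assumes two 64-bit operands with no carry-in and a fixed test throughput; the throughput values are illustrative assumptions, not measurements of any real GPU.

```python
# Back-of-the-envelope estimate of exhaustive test time for an adder.
# Assumptions: two operands of equal width, no carry-in, constant throughput.

SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year, about 3.16e7 seconds


def exhaustive_years(operand_bits: int, cases_per_second: float) -> float:
    """Years needed to apply every input pair to a two-operand adder."""
    total_cases = 2 ** (2 * operand_bits)  # 2^128 for 64-bit operands
    return total_cases / cases_per_second / SECONDS_PER_YEAR


if __name__ == "__main__":
    for rate in (1e12, 1e14):  # assumed throughputs in cases per second
        years = exhaustive_years(64, rate)
        print(f"{rate:.0e} cases/s -> about {years:.1e} years")
```

At 10¹² cases per second the result is on the order of 10¹⁹ years, and a hundredfold faster machine only brings it down to about 10¹⁷ years. Either figure is vastly longer than the age of the universe, which is roughly 1.4 × 10¹⁰ years.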

When moving to full systems such as processors or NPUs (Neural Processing Units), the complexity expands to include:

a. Instruction sequences and execution flows,

b. Memory interactions and state dependencies,

c. Pipeline hazards and control flow changes, and

d. Long-running system behaviors across millions of cycles.

At this scale, exhaustive testing becomes impossible, which means validation must rely on smarter strategies rather than brute force.

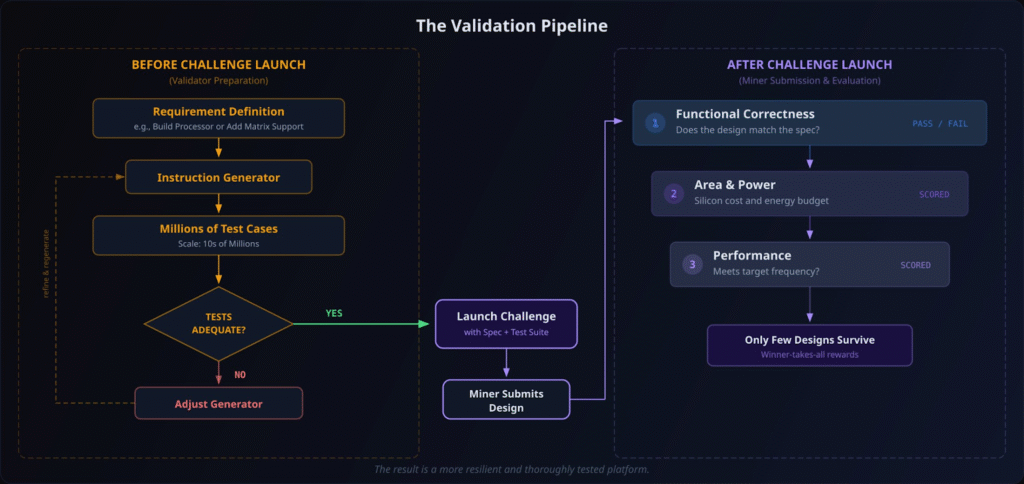

The Validation Pipeline: Finding Bugs Early and Reliably

Because complete testing is not feasible, ChipForge focuses on building a validation pipeline designed to expose real issues as early and as reliably as possible. The core validation methods include:

a. Simulation to test expected behavior under controlled scenarios,

b. Constrained-random testing to explore unexpected edge cases,

c. Coverage-driven verification to measure how thoroughly the design has been exercised,

d. Fuzzing to push systems into unpredictable states, and

e. Formal methods to mathematically prove specific properties.

These methods are not optional additions, but necessary components of a system that must operate under exponential complexity.

The goal is not to prove perfection, because that is unattainable, but to systematically increase confidence by exposing weaknesses before they become critical failures.
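As a small illustration of two of these methods working together, the sketch below runs constrained-random stimulus against a 64-bit adder and tracks simple coverage bins. It is a toy model in Python, not ChipForge's pipeline: real flows drive RTL in a simulator, and the corner list, the 30% bias, the coverage bins, and both adder models here are assumptions made for the example.

```python
import random

MASK64 = (1 << 64) - 1
# Corner values that uniform random sampling would almost never produce.
CORNERS = [0, 1, MASK64, 1 << 63, (1 << 63) - 1]


def reference_add(a, b):
    """Golden model: returns (64-bit sum, carry-out)."""
    total = a + b
    return total & MASK64, total >> 64


def ripple_carry_add(a, b):
    """Stand-in design under test: bit-by-bit ripple-carry adder."""
    carry, result = 0, 0
    for i in range(64):
        x, y = (a >> i) & 1, (b >> i) & 1
        result |= (x ^ y ^ carry) << i
        carry = (x & y) | (carry & (x ^ y))
    return result, carry


def buggy_add(a, b):
    """Faulty design: drops the carry from the low 32 bits into the high 32."""
    lo = (a & 0xFFFFFFFF) + (b & 0xFFFFFFFF)
    hi = (a >> 32) + (b >> 32)  # carry out of `lo` is never added here
    return ((hi << 32) | (lo & 0xFFFFFFFF)) & MASK64, (hi >> 32) & 1


def constrained_operand(rng):
    """Bias stimulus toward corner cases; otherwise draw uniformly."""
    if rng.random() < 0.3:
        return rng.choice(CORNERS)
    return rng.getrandbits(64)


def run_campaign(dut, n_tests, seed=0):
    """Compare `dut` to the golden model; return (failures, coverage bins)."""
    rng = random.Random(seed)
    coverage = {"carry_out": 0, "no_carry": 0, "zero_result": 0, "max_operand": 0}
    failures = []
    for _ in range(n_tests):
        a, b = constrained_operand(rng), constrained_operand(rng)
        expected = reference_add(a, b)
        if dut(a, b) != expected:
            failures.append((a, b))
        coverage["carry_out" if expected[1] else "no_carry"] += 1
        coverage["zero_result"] += expected[0] == 0
        coverage["max_operand"] += MASK64 in (a, b)
    return failures, coverage


if __name__ == "__main__":
    for dut in (ripple_carry_add, buggy_add):
        failures, coverage = run_campaign(dut, 2000, seed=1)
        print(dut.__name__, "failures:", len(failures), "coverage:", coverage)
```

The coverage bins matter as much as the pass/fail result: an empty bin means a scenario was never exercised, so a clean run says nothing about it. Fixing the seed keeps a failing run reproducible.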

Every Improvement Expands the Problem

In hardware systems, even small changes introduce new layers of complexity that must be revalidated.

A modification to a single component in a processor or NPU requires re-evaluating:

a. Interaction with other components,

b. Timing behavior and performance characteristics,

c. Memory access patterns, and

d. Long-term execution stability.

This is why validation is continuous rather than finite.

Early-stage testing may cover hundreds of millions of instructions to filter out weak designs, while stronger candidates undergo progressively deeper stress testing. In practice, verification continues until the final stages before fabrication, because confidence is built incrementally, not declared once.
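The staged approach described here can be sketched as a funnel in which each stage spends a larger test budget and a candidate advances only after passing the previous one. The stage names, the budgets, and the `check(i)` interface below are hypothetical and scaled far down for illustration; they do not describe ChipForge's actual stages.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Stage:
    name: str
    budget: int  # number of test cases run at this stage (hypothetical)


# Illustrative funnel: cheap filter first, deeper stress tests later.
STAGES: List[Stage] = [
    Stage("smoke", 1_000),
    Stage("regression", 10_000),
    Stage("stress", 100_000),
]


def validate(check: Callable[[int], bool], stages: List[Stage]) -> str:
    """Run stages in order; return the first stage that fails, else 'passed'.

    `check(i)` is assumed to return True when the design passes test case i.
    Test indices keep increasing across stages so later stages see new cases.
    """
    next_case = 0
    for stage in stages:
        for i in range(next_case, next_case + stage.budget):
            if not check(i):
                return stage.name
        next_case += stage.budget
    return "passed"


if __name__ == "__main__":
    def weak(i):      # fails almost immediately
        return i != 12

    def subtle(i):    # only fails deep into testing
        return i != 50_000

    def solid(i):     # never fails within the budget
        return True

    for name, check in (("weak", weak), ("subtle", subtle), ("solid", solid)):
        print(name, "->", validate(check, STAGES))
```

The weak design is rejected after a handful of cases, so the expensive stress budget is spent only on candidates that have already earned it, while the subtle bug surfaces only in the deepest stage.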

The Cost of Getting It Wrong

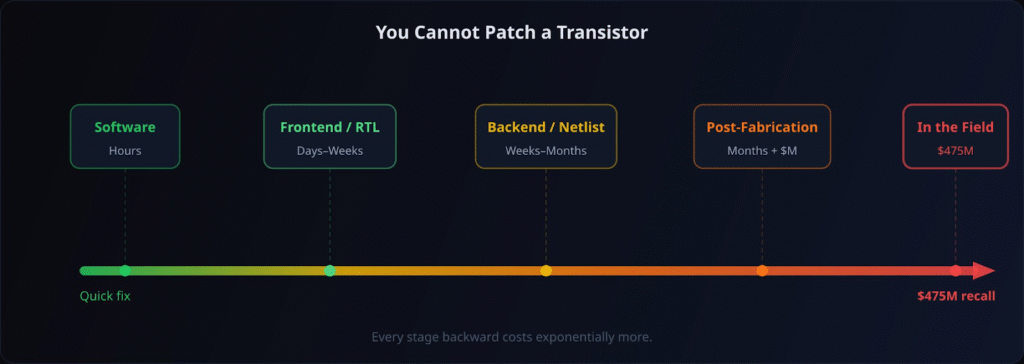

The consequences of insufficient validation increase sharply depending on when a defect is discovered. The stages of impact are as follows:

a. Frontend (RTL Stage): Bugs can be fixed relatively quickly through code changes and re-simulation,

b. Backend (Post-Synthesis): Fixes require restarting large parts of the design and verification process, often adding months of delay, and

c. Post-Fabrication: Errors require new manufacturing cycles, additional costs, and potential hardware replacement.

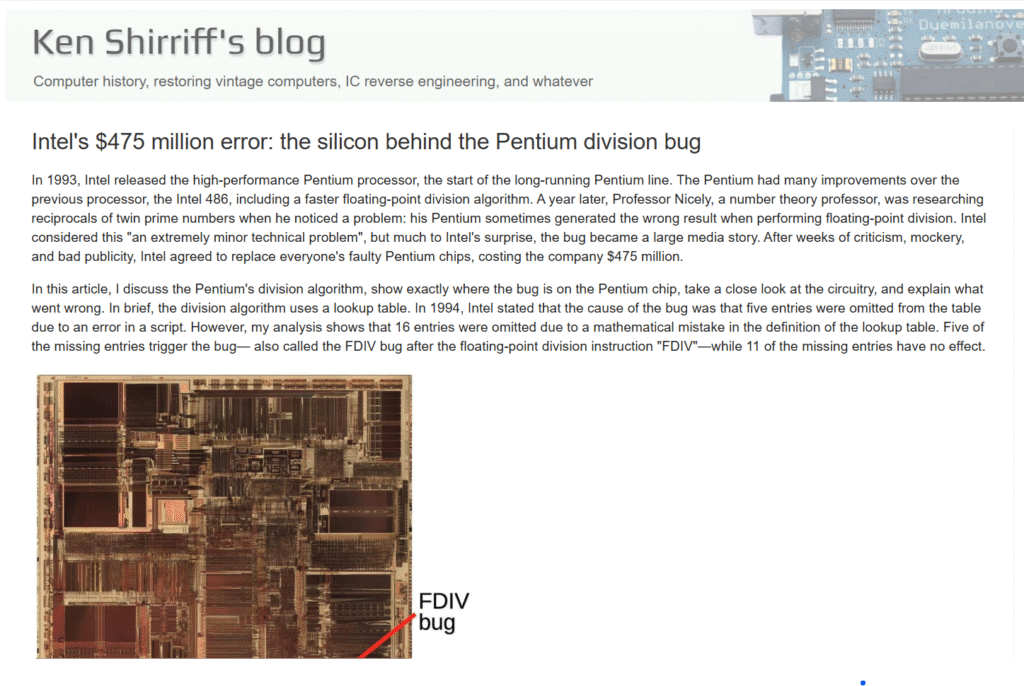

One of the most well-known examples is Intel’s Pentium FDIV bug, a floating-point division flaw discovered in 1994, for which Intel took a charge of approximately $475 million.

This highlights a fundamental truth: software bugs can be patched, but hardware bugs must be prevented.

Real-World Validation: Beyond Theory

ChipForge’s approach is not merely theoretical: it has already been applied to real hardware systems.

Work on Google’s Coral NPU, an open-source RISC-V-based AI accelerator, has demonstrated that the validation pipeline is capable of identifying and resolving bugs in complex, production-grade designs.

This is an important signal, because it shows that the system is not limited to synthetic tasks, but is capable of handling real-world engineering challenges at an industrial level.

Why Challenges Take Time: The Standard That Matters

The reason ChipForge does not rush challenge deployment can be reduced to a single principle:

a. A challenge is not ready when the idea is ready, and

b. A challenge is ready when the validation pipeline is ready.

This distinction defines the quality of the entire subnet. Without a strong validation layer:

a. Scoring becomes unreliable,

b. Incentives become misaligned, and

c. Outputs lose credibility.

With a strong validation layer:

a. Contributions can be measured accurately,

b. Incentives reflect real value, and

c. The system produces meaningful outcomes.

Not Fast, But Correct

ChipForge (Subnet 84) is not designed to maximize the number of challenges launched, but to maximize the quality and reliability of each one.

By focusing on validation as the core problem, Subnet 84 ensures that every challenge reflects real engineering standards rather than superficial activity. This approach may take more time upfront, but it establishes a foundation where results can be trusted, contributions can be measured, and innovation can compound.

In hardware, speed without validation is noise, but correctness creates value.

That is the standard ChipForge operates by, and it is precisely why the subnet matters.
