When public figures misspeak, humans instinctively repair the sentence before passing it on, machines do not. That gap captures the difference between translation and interpretation, and according to Matthew Karas (Founder of Babelbit, Bittensor Subnet 59), it is the architectural frontier artificial intelligence must now cross.

For decades, machine translation has focused on converting words from one language to another with increasing accuracy. Yet, conversation is not a word matching exercise, it is a dynamic, contextual exchange shaped by culture, intent, tone, and audience.

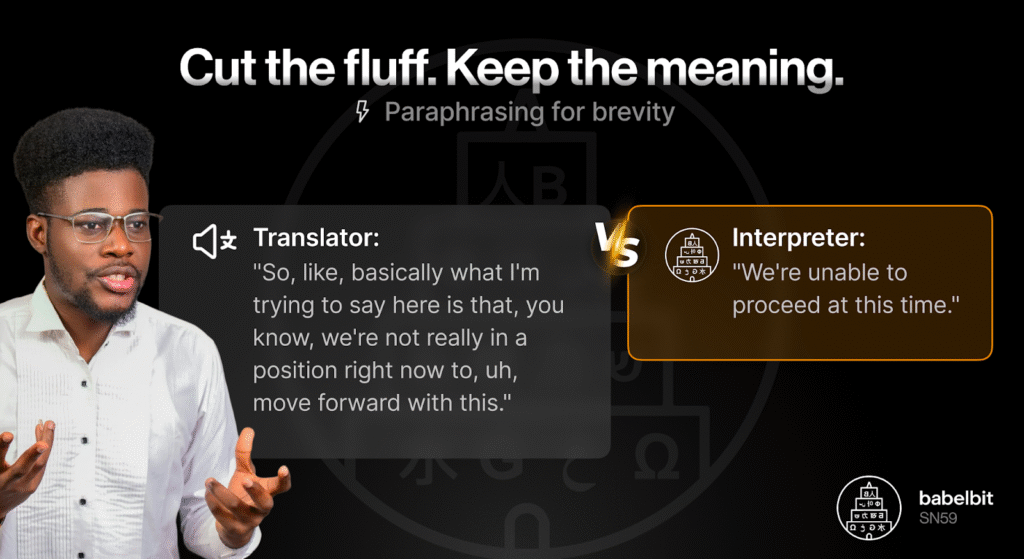

Translation may preserve literal meaning, but what interpretation does is that it preserves communicative intent.

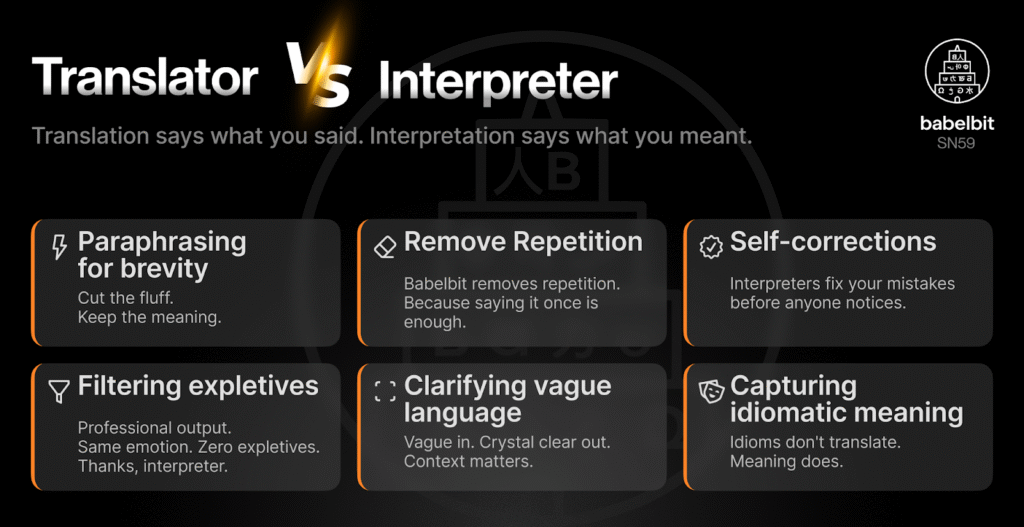

Translation Converts Words, Interpretation Reconstructs Meaning

A human interpreter does more than map vocabulary across languages. In real time, they:

a. Remove repetition,

b. Filter offensive or culturally sensitive phrasing,

c. Paraphrase for clarity and brevity,

d. Repair vague or incomplete speech,

e. Adjust tone for audience and context, and

f. Continue listening while reformulating.

Traditional machine systems cannot easily replicate this behavior because they operate as pipelines. Speech to text, text to translation, and translation to speech? Each stage is optimized independently, every additional constraint, such as filtering expletives, requires extra processing layers.

With this, latency increases, complexity compounds, and context fragments.

While output may be accurate, it rarely feels human.

The Architectural Shift Introduced by Large Language Models (LLMs)

Large language models (LLMs) change the structure of the problem. Instead of chaining separate components, LLMs integrate contextual reasoning into a single generative process.

And rather than translating first and correcting later, they apply constraints while generating output. This is done with cultural awareness, tone adjustment, and contextual filtering happening within the same forward pass.

Karas describes this as an architectural transformation rather than an incremental upgrade. The model does not execute explicit business logic for every rule, it predicts language based on context and audience simultaneously.

This is the difference between assembling translation features and simulating interpretive cognition.

Building a Machine Interpreter

At Babelbit (Subnet 59 on Bittensor), Karas and his team are developing a system designed specifically for real-time interpretation rather than post-processed translation.

The architecture includes:

a. Direct speech tokenization without intermediate speech to text conversion,

b. Forward token prediction to reduce latency,

c. Confidence weighted decisions about when to commit to translation, and

d. Single-pass output generation that applies contextual constraints in real-time.

The goal is sub-3-second latency with interpreter level reasoning embedded in the model itself with the aim to reframe simultaneous translation as a predictive problem rather than a pipeline problem.

The system does not wait for complete sentences before responding; it listens, anticipates, and adapts.

Why Now? Why Not?

Karas argues that this shift is only possible because of two converging developments:

a. LLMs capable of contextual reasoning and probabilistic confidence weighting, and

b. Scalable decentralized training infrastructure, including networks such as Bittensor, which make development economically viable at scale.

This would not result only in faster translation, it is the potential very emergence of the first machine interpreter.

Translation Serves Text, Interpretation Serves Conversation

Text translation works because text is static, conversation(s) is not. An interpreter facilitates dialogue, they adapt meaning in motion, they anticipate ambiguity, they protect clarity, as well as balance cultural expectations without interrupting flow.

The next frontier of language AI is not about higher BLEU (Bilingual Evaluation Understudy) scores or marginal latency gains, it is conversational alignment.

Babelbit’s plan to launch French-English real-time interpretation with a public API (Application Programming Interface) in the first quarter of this year (2026), would ultimately position its system as an alternative to voice-enabled translation tools.

If translation was about converting language, interpretation is about sustaining human exchange.

And as Karas frames it, the future of simultaneous translation will belong to systems that stop thinking in pipelines and start thinking in predictions.

Be the first to comment