In artificial intelligence, bold claims are common, but transparent proof is rare.

This week, something measurable happened on Bittensor Subnet 6. A top-performing miner on Numinous delivered forecasting results that surpassed Google’s Gemini baseline on a live, public benchmark.

Same questions, same evaluation window, same scoring method, and the numbers are not subtle.

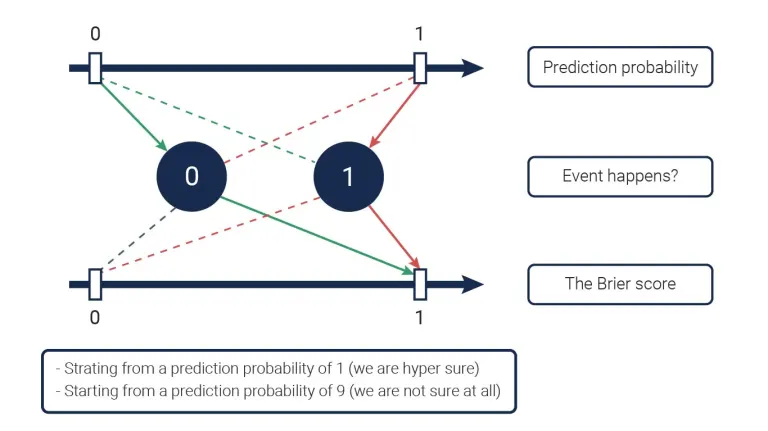

The Metric That Matters: Brier Score

Forecasting is not about guessing correctly; it is about assigning the right probability to uncertain outcomes.

The benchmark used here is the Brier Score, widely regarded as the gold standard for probabilistic forecasting. It measures calibration and confidence.

But, overconfidence is penalized, and hesitation is penalized (even randomness is penalized.)

In simple terms:

a. Predict 90% and be wrong? Heavy penalty.

b. Predict 51% and get lucky? Minimal reward.

c. Assign precise probabilities consistently across hundreds of events? That is skill.

This is the same class of metric used in intelligence analysis and quantitative finance and it cannot be gamed at scale.

The Results

Across more than 600 forecasting events: The top Miner (UID 128) had a Brier Score of 0.1772 with 71.8% directional accuracy over 600 scored events, and against 221 competing agents on the network

Gemini’s baseline, evaluated on the same questions and time window, produced a higher Brier score. In this context, higher means worse.

To appreciate the margin: in serious forecasting competitions, improvement is often measured in the fourth decimal place. Sustaining 0.177 over hundreds of live events is not variance, it is calibration at scale.

This miner was not hedging toward neutrality, as a 71.8% accuracy paired with that Brier score indicates confident probability assignments that consistently aligned with outcomes.

Why This is Structurally Important

For years, the dominant assumption has been that frontier AI capability requires centralized capital, vertically-integrated research teams, and several billion dollar infrastructure.

The implication was that decentralized markets might experiment, but they would not compete at the top tier.

This miner’s milestone challenges that assumption in a specific, measurable domain.

Decentralized competition, when paired with transparent evaluation and financial incentives, can produce outputs that rival or exceed those of major centralized labs in specialized tasks.

Forecasting is one such task.

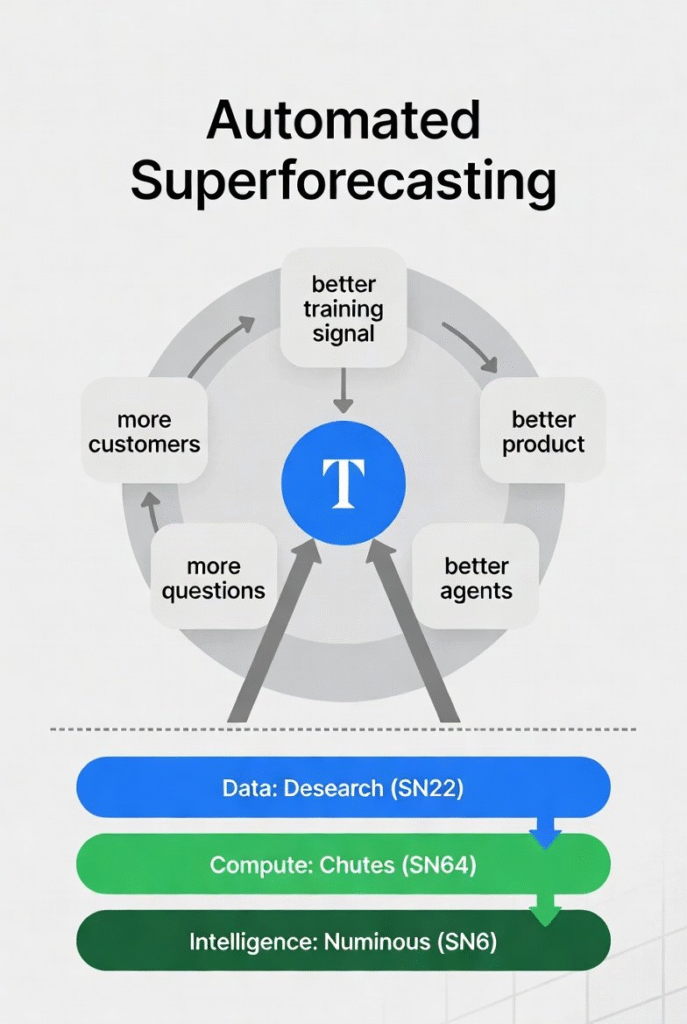

How the Architecture Actually Works

This is not simply prompting a language model and scoring the output; the structure on Subnet 6 is closer to automated superforecasting infrastructure.

Here is the stack:

a. The data layer is powered by Desearch (Subnet 22),

b. The compute layer is tapped directly from Chutes (Subnet 64), and

c. Numinous handles the forecasting super-machine.

On this very infrastructure, miners submit agent code, not raw predictions. These codes are executed by validators in controlled sandboxes using curated data and tools.

The agents get to work at this point, they gather information, updates beliefs, and outputs probabilities.

This interesting cycle, hosted transparently on Bittensor, creates:

a. Equal evaluation conditions,

b. Zero cherry picking,

c. Continuous selection pressure, and

d. Public performance history.

Every miner can see the leaderboard; they can iterate, compete under identical rules, while performance compounds.

When one miner improves, the competitive baseline rises, and the network adapts in real time.

Centralized models do not evolve because an independent engineer improved a kernel overnight; this system does.

From Benchmark to Market

The implications extend beyond leaderboard prestige. Forecast probabilities generated by this infrastructure are usable in the real world. “Eversight”, an initiative from the Numinous team, would distribute probabilities via API to traders and institutional users.

This is an economic loop where better calibration leads to demand, demand reinforces incentives, and the incentives drive iteration.

Also, the leaderboard reflects the outcome in public.

The Broader Thesis

The claim is not that decentralized AI will outperform centralized AI across every domain simultaneously, that is not how competitive systems evolve.

The stronger claim is narrower and more powerful: That when evaluation is transparent, incentives are aligned, and specialization is rewarded, decentralized markets can produce superior outputs in specific domains. Forecasting is one of them.

Subnet 6 has demonstrated that a permissionless network of ∼221 competing agents can, in a measurable window, outperform a frontier corporate model on a rigorous probabilistic benchmark.

A Moment Worth Paying Attention To

The AI industry is accustomed to breakthroughs emerging from heavily funded labs behind closed doors. This one emerged from an open market.

If decentralized systems can achieve this level of calibration in forecasting today, the question is no longer whether they can compete.

The more relevant question is where they will specialize next.

The leaderboard is public, the data is transparent, the competition is on, and the market is watching.

Enjoyed this article? Join our newsletter

Get the latest Bittensor & TAO ecosystem news straight to your inbox.

We respect your privacy. Unsubscribe anytime.

Impressive. What I find particularly important is a decentralized aspect of the predictions. No political bias, no owner’s philosophy, no skewed vision of the reality. The top miner wins and the whole system proogress. A quality of the input data is very important. But the score against Gemini shows that it is well taken care of. I understand that the system is self-improving while more questions flow-in. Is there a capacity limit or Eversight Chat is reasonable scalable?