There is a big difference between saying, “Bitcoin will hit this price next week,” and saying, “Here is the full probability distribution of how Bitcoin might move, how volatile it could be, and where risk is actually concentrated.”

Most people never make that distinction.

That is exactly why the conversation between Herelle Jean, CEO (Chief Executive Officer) of Crunch DAO, and James Ross, founder of Synth (Bittensor Subnet 50), matters.

Synth is a financial forecasting subnet built on Bittensor, and it just became the first coordinator live on Crunch mainnet.

This is not just a milestone announcement; it is a statement about where decentralized financial intelligence is heading.

Let’s unpack it properly.

“We Don’t Predict Prices. We Model Distributions.”

When Jean opened the discussion, he framed the moment clearly. He noted that Crunch had introduced the coordinator role on testnet, and for the first time, a coordinator had successfully deployed the required smart contract infrastructure and reached mainnet. That coordinator is Synth.

James did not describe Synth as a trading platform. He called it a probabilistic intelligence layer for asset prices, and that wording matters.

Most finance competitions reward point predictions: a number, a target, or a directional guess. Synth does something different:

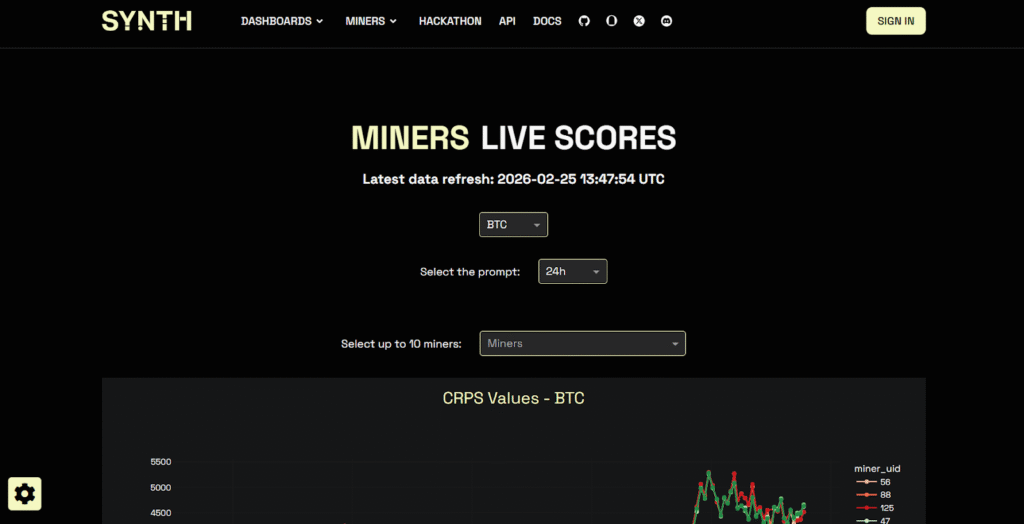

a. Miners submit 1,000 simulated price paths every hour,

b. They forecast distributions, not price targets,

c. Submissions are scored continuously against live market data, and

d. Rewards are based on calibration, not hype.

It is about being statistically consistent (and accurate!) over time, and that changes everything.
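
To make "forecasting a distribution" concrete, here is a minimal Python sketch. It is not Synth's miner code: the zero-drift geometric Brownian motion model, the 24-step horizon, the 0.5% hourly volatility, the starting price, and the function names `simulate_paths` and `quantile` are all assumptions chosen for illustration. Only the figure of 1,000 paths comes from the discussion.

```python
import math
import random


def simulate_paths(start_price, hourly_vol, n_steps, n_paths=1000, seed=0):
    """Simulate price paths under zero-drift geometric Brownian motion.

    The model choice is an illustrative assumption, not Synth's method.
    """
    rng = random.Random(seed)
    paths = []
    for _ in range(n_paths):
        price = start_price
        path = [price]
        for _ in range(n_steps):
            shock = rng.gauss(0.0, hourly_vol)
            # Subtract half the variance so the expected price stays flat.
            price *= math.exp(shock - 0.5 * hourly_vol ** 2)
            path.append(price)
        paths.append(path)
    return paths


def quantile(sorted_values, q):
    """Quantile by linear interpolation between order statistics."""
    pos = q * (len(sorted_values) - 1)
    lo = int(math.floor(pos))
    hi = min(lo + 1, len(sorted_values) - 1)
    frac = pos - lo
    return sorted_values[lo] * (1 - frac) + sorted_values[hi] * frac


# Hypothetical inputs: a 100,000 starting price and 0.5% hourly volatility.
paths = simulate_paths(start_price=100_000.0, hourly_vol=0.005, n_steps=24)
terminal = sorted(path[-1] for path in paths)
summary = {q: quantile(terminal, q) for q in (0.05, 0.5, 0.95)}
print(summary)
```

The result is a range with probabilities attached rather than a single target, which is the kind of output a calibration-based score can evaluate.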

Why Decentralized AI Makes Sense for Forecasting

Jean pointed out something that can’t be ignored: when you build a trading algorithm, you are competing against institutions that spend millions, sometimes hundreds of millions, mining micro edges in financial data. So why attempt this in a decentralized setup?

James’ answer was simple. He noted that crowdsourced intelligence works.

James and his team had previously competed in data science competitions, where they saw how open competition, when structured correctly, can produce surprisingly strong outputs.

In their view, the key ingredients are:

a. Transparent scoring,

b. Fair incentives,

c. High-quality reward mechanisms, and

d. Continuous evaluation.

Synth distributes roughly $80,000 to $100,000 per month to participants. This level of incentive attracts serious model builders, and because everything is scored live, performance cannot be faked.

If a model is overfit, the live market quickly exposes it. And if you try to cheat, the cost structure punishes you.

The only sustainable strategy is accuracy.

Live Scoring: The Ultimate Anti-Cheat Mechanism

One of the strongest parts of the discussion was around scoring integrity. Synth uses a continuous ranked probability score (CRPS) framework. In simple terms:

a. Confident and correct forecasts are rewarded,

b. Confident and wrong forecasts are heavily penalized,

c. Vague distributions converge toward mediocre results, and

d. Rankings update live.
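
The behaviour in that list can be reproduced with the standard sample-based estimator of the continuous ranked probability score: the mean absolute distance between forecast samples and the realized value, minus half the mean absolute distance between pairs of forecast samples. Lower is better. The sketch below is an illustration under assumptions: Synth's exact scoring rules are not spelled out in the conversation, and the function name `crps_ensemble` and the three toy forecasts are hypothetical.

```python
import random


def crps_ensemble(samples, observed):
    """Sample-based CRPS estimate: E|X - y| - 0.5 * E|X - X'|. Lower is better."""
    n = len(samples)
    xs = sorted(samples)
    term_obs = sum(abs(x - observed) for x in xs) / n
    # Sum of |x_i - x_j| over all ordered pairs, computed from sorted samples:
    # each x at zero-based rank i contributes (2*i - n + 1) * x, counted twice.
    pair_sum = 2.0 * sum((2 * i - n + 1) * x for i, x in enumerate(xs))
    term_spread = pair_sum / (n * n)
    return term_obs - 0.5 * term_spread


rng = random.Random(1)
observed = 100.0  # hypothetical realized price

# Three toy forecasts, each made of 1,000 samples.
sharp_right = [rng.gauss(100.0, 1.0) for _ in range(1000)]   # confident, correct
sharp_wrong = [rng.gauss(110.0, 1.0) for _ in range(1000)]   # confident, wrong
vague = [rng.gauss(100.0, 10.0) for _ in range(1000)]        # wide, unfocused

scores = {
    "confident and correct": crps_ensemble(sharp_right, observed),
    "vague": crps_ensemble(vague, observed),
    "confident and wrong": crps_ensemble(sharp_wrong, observed),
}
for label, score in scores.items():
    print(f"{label}: {score:.3f}")
```

In this toy setup the confident and correct forecast gets the lowest (best) score, the vague one lands in the middle, and the confident but wrong one is penalized most, which mirrors the ordering described above.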

There is also a structural cost to gaming the system, so mass registrations and low-quality attempts are economically discouraged.

Jean emphasized that live scoring is the ultimate filter: a backtest can be faked, but a live market cannot. Models that cannot survive real-time volatility do not last.

Where Crunch Comes In

Crunch DAO acts as an accessibility layer. Opening mining slots directly can be complex and capital-intensive, but through its coordinator structure, Crunch allows participants to:

a. Test models before committing capital,

b. Build reputation over a one-month warm-up period,

c. Submit ensemble or meta models, and

d. Access external data inside secure execution environments.

This last point is crucial. James explained that volatility today is heavily driven by news events, social sentiment, and macro catalysts. Thus, pure price modeling is often not enough.

With Crunch enabling external API (Application Programming Interface) calls inside the trusted environment, competitors can integrate:

a. News feeds,

b. Social data,

c. Proprietary datasets, and

d. Cross-network signals.

In an environment where price data has already been heavily mined, orthogonal data becomes the edge.

Real-World Validation: The Polymarket Experiment

To test how strong their probabilistic forecasts were, Synth ran experiments on Polymarket. The team traded binary markets wherever Synth’s probability distributions disagreed with market pricing.

The reported performance was strong. But more importantly, the team observed instances where late market moves appeared manipulative. Having a calibrated probabilistic baseline helped filter those distortions.
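
As a rough illustration of how a forecast distribution can be compared with a binary market, the sketch below turns simulated terminal prices into an event probability and measures the gap against a quoted price. Everything here is hypothetical: the function names `event_probability` and `edge_vs_market`, the ten sample prices, the strike, and the 0.55 quote are invented for the example, fees and slippage are ignored, and the conversation did not describe Synth's actual trading logic.

```python
def event_probability(terminal_prices, strike):
    """Fraction of simulated terminal prices that finish above the strike."""
    hits = sum(1 for price in terminal_prices if price > strike)
    return hits / len(terminal_prices)


def edge_vs_market(model_prob, market_yes_price):
    """Expected profit per YES share paying 1 unit, if model_prob were correct.

    Ignores fees, slippage, and position sizing.
    """
    return model_prob - market_yes_price


# Hypothetical terminal prices standing in for a forecast distribution.
terminal_prices = [98.0, 101.0, 103.0, 99.5, 104.0,
                   102.5, 97.0, 105.0, 100.5, 101.5]

model_prob = event_probability(terminal_prices, strike=100.0)
edge = edge_vs_market(model_prob, market_yes_price=0.55)
print(f"model probability: {model_prob:.2f}, edge vs market: {edge:.2f}")
```

A positive gap means the model sees the event as more likely than the market does, and a negative gap means the opposite. A gap that appears suddenly while the model's probability has barely moved is the kind of discrepancy a calibrated baseline can help flag.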

The broader insight was that probabilistic forecasts are not just for trading desks. They can be integrated into:

a. Options pricing frameworks,

b. Risk management systems,

c. Automated trading bots, and

d. Prediction market arbitrage strategies.

The intelligence layer becomes composable.

Preparing for 24-Hour Markets

Synth currently models nine assets across crypto and equities; further expansion will be demand-driven.

With equity markets increasingly moving toward extended trading hours, the need for round-the-clock probabilistic data is growing.

James described this shift as structural: crypto has traded 24/7 for years, and traditional markets are now catching up.

When everything trades continuously, volatility modeling becomes even more critical.

What Makes a Top Performer on Synth?

Jean asked what differentiates the best miners, and James highlighted several themes:

a. Strong volatility modeling frameworks,

b. Rapid integration of breaking news,

c. Social sentiment ingestion, and

d. Continuous improvement over time.

The subnet is competitive, the rewards are meaningful, and the barrier to excellence is high. But that is exactly the point. The output quality of Synth is directly tied to the intensity of its competition.

The Bigger Picture

The conversation between Jean and James was not about short-term hype. It was about infrastructure.

As AI agents increasingly participate in financial markets, they will need more than historical data. They will need calibrated, forward-looking uncertainty modeling.

Synth aims to provide that layer, while Crunch lowers the entry barrier and expands participation. Together, they are building something that feels less like a competition and more like a decentralized volatility engine. And in serious markets, calibration beats confidence every time.
