Covenant AI just released PULSE (Patch Updates via Lossless Sparse Encoding), a technique that slashes bandwidth for weight synchronization in decentralized reinforcement learning (RL) by 100×+, while staying completely lossless (bit-identical reconstruction, SHA-256 verified on every sync).

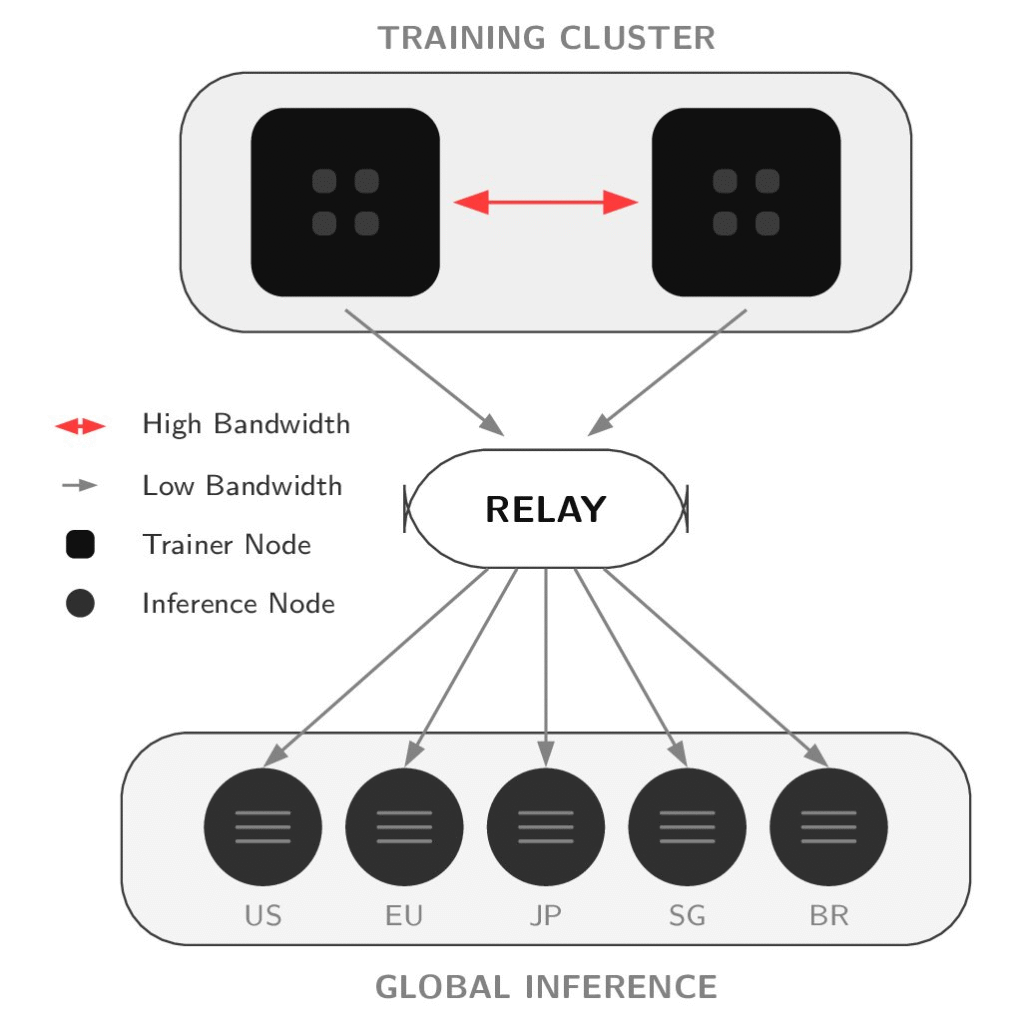

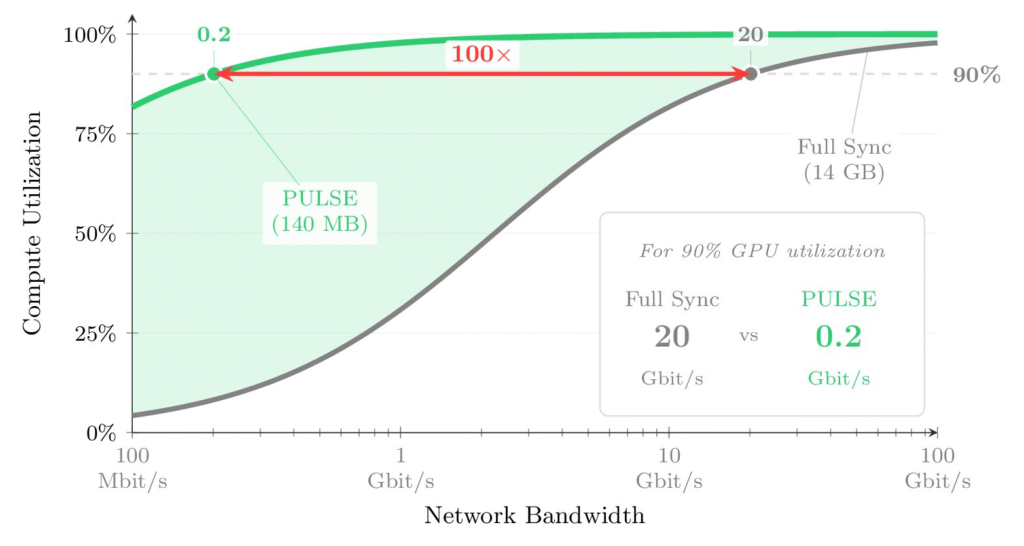

The biggest bottleneck in decentralized RL: training can happen on fast interconnects, but inference nodes are spread globally over normal internet. Syncing full model weights becomes a bandwidth nightmare. A 7B checkpoint is ~14 GB, and repeatedly syncing that reduces performance.

PULSE fixes this by exploiting a powerful (and honestly surprising) property: RL weight updates are extremely sparse—around 99% sparse per step.

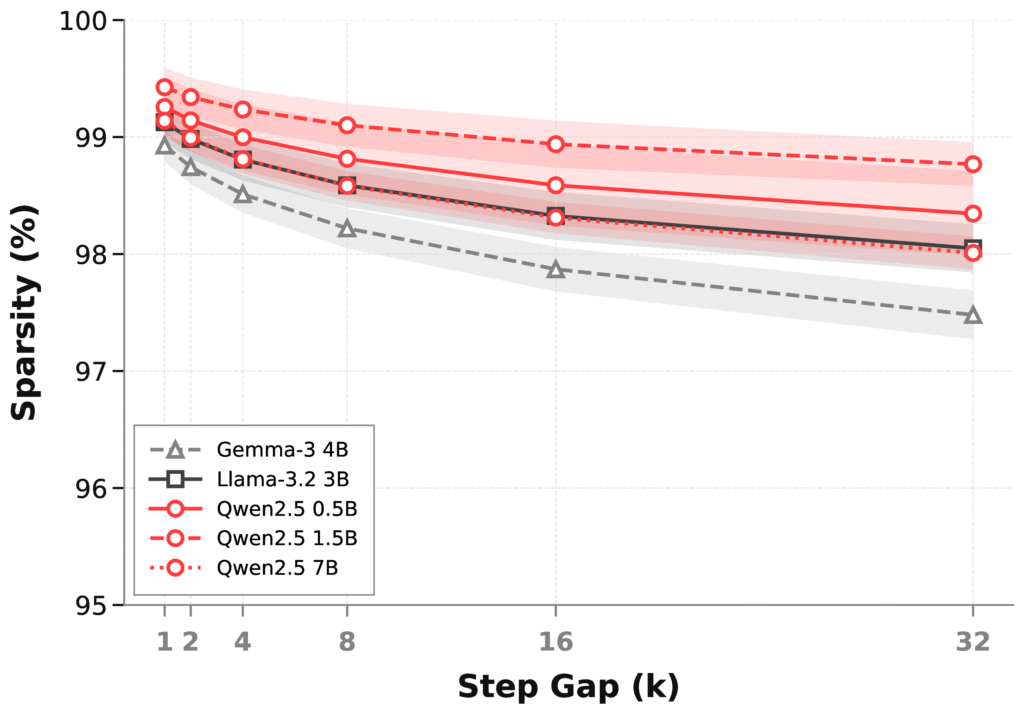

What makes this solid is how they measured it, which is by bitwise sparsity step-by-step, not just comparing initial vs final checkpoints. They tested across model families, sizes, and even async delays up to 32 steps. The result showed that sparsity consistently stays above 98% in realistic training conditions.

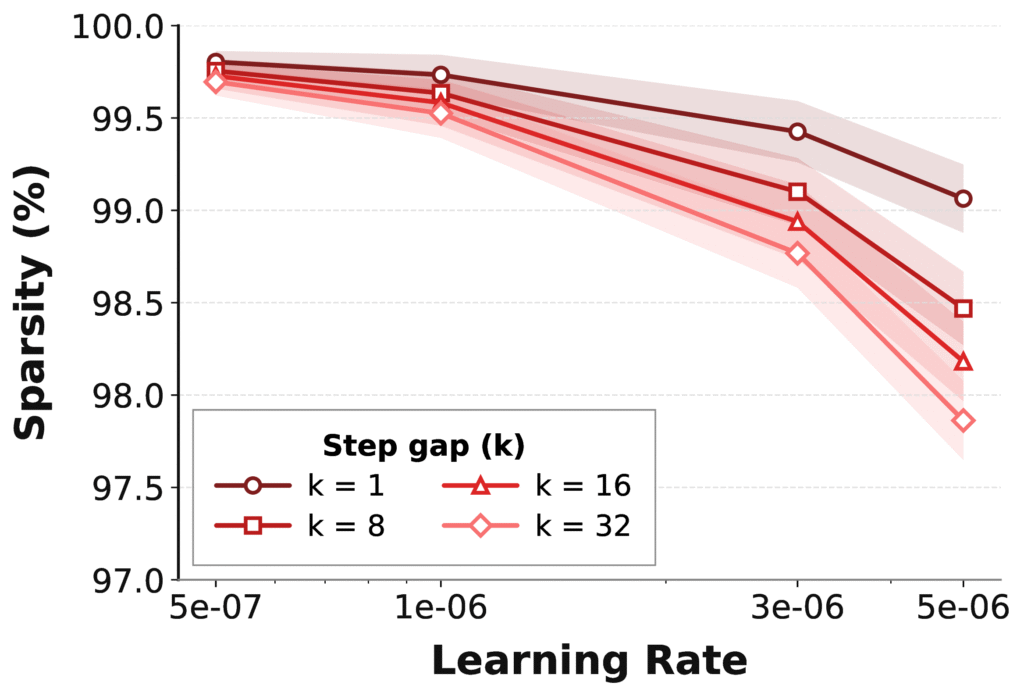

The reason is inferred in the update. Gradients are dense, but after Adam optimization + BF16 precision at RL learning rates around 1–3×10⁻⁶, most updates are so small they round to zero. So you get sparsity “for free” with standard RL hyperparameters.

Learning rate matters a lot: lower LR → smaller deltas → more values fall below BF16 precision → higher sparsity.

From there, they built the full compression pipeline:

- Bitwise checkpoint diff: extract changed indices + values

- Delta encoding for indices

- Type downscaling (uint8/16 for small deltas)

- zstd compression

Average compression lands at ~79×, often reaching 100×+.

And this isn’t theoretical. PULSE is already deployed live on Grail AI — Covenant’s decentralized, incentivized RL network.

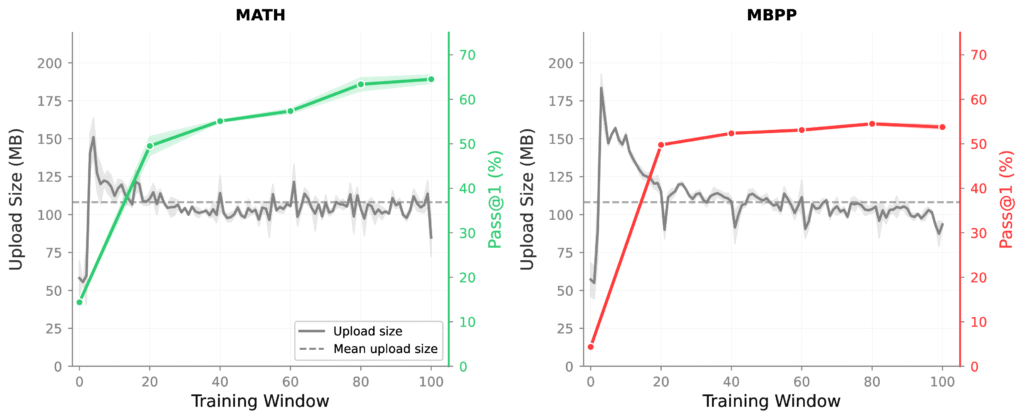

In real deployment:

- Patch sizes stabilize around ~108 MB (~130× smaller than 14 GB full syncs)

- Bandwidth drops from 20 Gbit/s → 0.2 Gbit/s

- GPU utilization stays around 90%

- Every sync is SHA-256 verified, meaning zero information loss and no drift

That bandwidth reduction is the real unlock. It takes what used to require datacenter-grade networking and makes it viable over normal consumer connections. Decentralized RL starts matching centralized performance.

Learn more about the PULSE update:

Paper: https://arxiv.org/pdf/2602.03839

Code: https://github.com/one-covenant/grail

Enjoyed this article? Join our newsletter

Get the latest Bittensor & TAO ecosystem news straight to your inbox.

We respect your privacy. Unsubscribe anytime.

Be the first to comment