This week’s community brief from Covenant AI, streamed from Nairobi, was less about optics and more about execution. TGIF #27 focused on infrastructure maturity, economic realism, and a recurring theme across the Bittensor ecosystem: build what scales, not what trends.

Nairobi Visit: Signal Over Optics

While event attendance in Nairobi fell short of expectations, one outcome stood out: a technically serious operator building in radiology acceleration demonstrated deep structural understanding of Bittensor.

The individual had traveled from South Africa, built a private 107-channel research Discord with a partner, and continues to operate a radiology acceleration business serving real clinics.

The takeaway was never about crowd size, but about conversions that yield measurable outcomes. Covenant’s leadership emphasized that builders who truly understand subnet mechanics and economic alignment remain rare, but they are emerging. That’s the real barometer.

Templar’s Crusade: Open Competition Model

On Templar (Subnet 3), the team continues refining its competition framework. The key points from this update are:

a. No manual removal of exploitative submissions,

b. If vulnerabilities are abused, consequences are systemic rather than selectively enforced,

c. A formal bounty system exists for reporting bugs,

d. The subnet is approaching theoretical performance limits on a single NVIDIA A100, and

e. Multi-GPU scaling is expected as early as next week.

The design choice aims to avoid the toxicity common in ‘competition subnets’ where owners manually disqualify participants.

A “special report” is expected next Friday, described internally as potentially historic.

HeteroLoco: Aggregating Any Compute Source

Research efforts are advancing under the internal project name Heterogeneous SparseLoCo (‘HeteroLoco’), which aims to aggregate heterogeneous compute for large-scale training.

The key objectives are to:

a. Train large models using heterogeneous hardware,

b. Accept compute from any source: MacBooks, consumer GPUs, Raspberry Pi-class devices, and

c. Reduce dependency on expensive centralized compute clusters.

The team cited prior training runs as compute-heavy and expensive, motivating this shift toward distributed aggregation. The long-term goal is to scale toward larger parameter models while maintaining economic feasibility.

Grail: Kernel Verification and Decentralized Training Speed

Grail (Subnet 81) is moving into kernel verification. In practice, this phase targets:

a. Decentralized training at near-centralized speeds,

b. Support for multi-turn and long-horizon training, and

c. Production-level robustness rather than experimental iteration.

This phase is intended to validate distributed training performance under stricter verification constraints.

Basilica: Stripe Integration and Payment Unlock

One of the most material updates involves Basilica’s payment infrastructure. Previously, Basilica (Subnet 39) could only accept $TAO payments, which limited onboarding outside the Bittensor ecosystem because users first had to acquire $TAO.

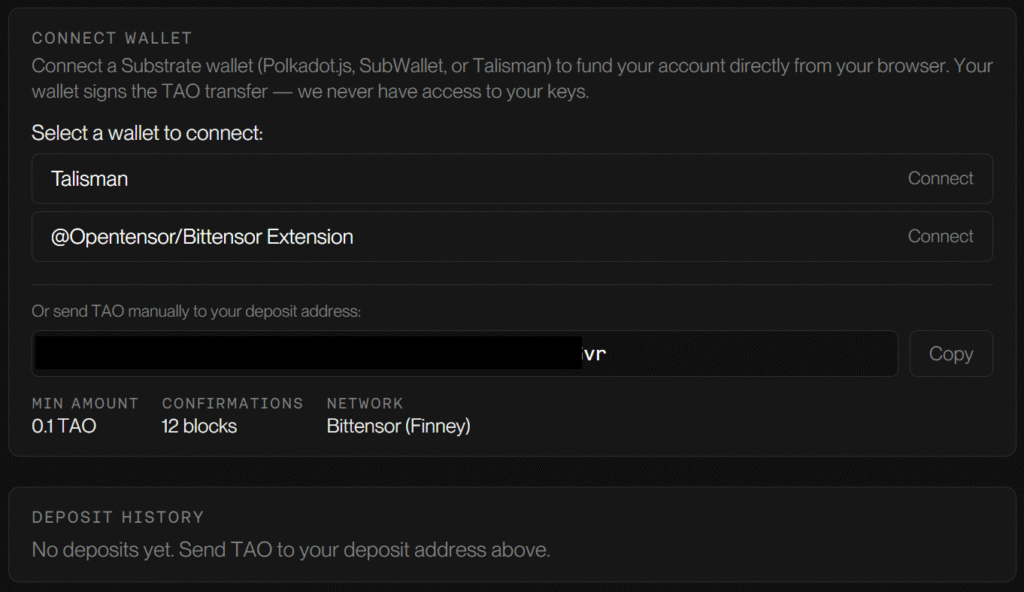

Basilica: Wallet Funding Option

The constraint stemmed from the need to integrate with Stripe, which required:

a. Establishing a U.S. entity,

b. Securing an EIN (Employer Identification Number), and

c. Receiving formal IRS (Internal Revenue Service) documentation required for banking access.

Delays were exacerbated by U.S. government administrative slowdowns, but the documentation was finally received this week.

As a result:

a. Basilica can now support conventional payment rails,

b. External go-to-market efforts can accelerate,

c. Revenue from OpenClaw is already live and expected to scale, and

d. Onboarding friction outside Bittensor is materially reduced.

The team noted that Bittensor-native users have been strong early customers, but growth beyond the ecosystem requires seamless payments.

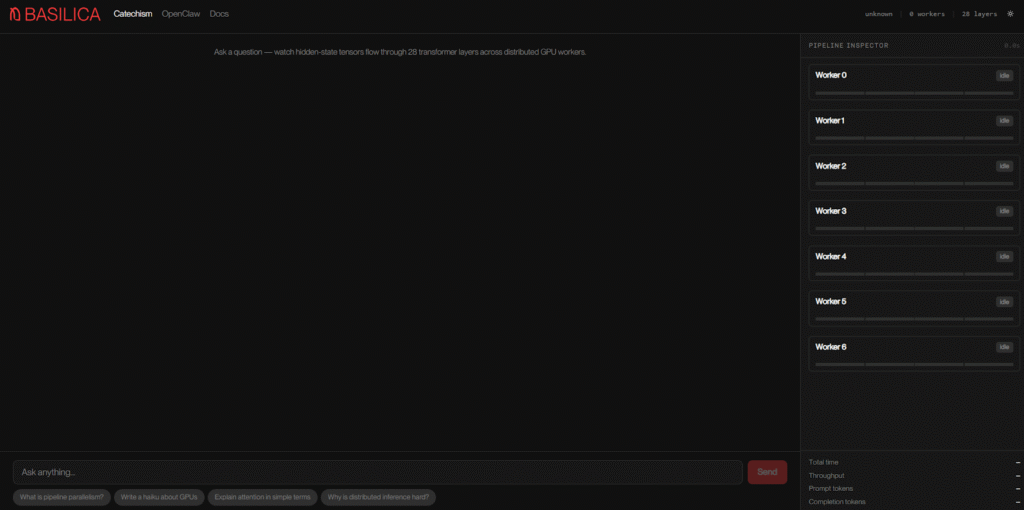

“Catechism”: Decentralized Inference Economics

A new research initiative, internally referred to as “Catechism,” focuses on decentralized inference. It is founded on the premise that:

a. Latest-generation NVIDIA hardware is not strictly required for effective inference.

b. Models can be partitioned across varied GPU classes.

c. Serving models across distributed mid-tier GPUs (e.g., RTX-class cards) may materially reduce cost compared to concentrated high-end deployments.

The goal is to lower inference costs, increase subnet profitability or pass savings to users, and demonstrate competitive decentralized inference for large models, including ambitions toward serving 70B-class models.
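To make the partitioning premise concrete, here is a minimal sketch of one way a model's layers could be split across GPUs of different sizes, with each card receiving a contiguous slice in proportion to its memory. This is an illustration only, not the Catechism protocol, which the brief describes as still being drafted. The `Gpu` type, the `partition_layers` function, the device names, and the memory figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Gpu:
    name: str          # hypothetical label; must be unique per device
    memory_gb: float   # memory assumed available for model weights


def partition_layers(num_layers: int, gpus: list[Gpu]) -> dict[str, range]:
    """Assign contiguous layer ranges to GPUs in proportion to their memory."""
    if num_layers <= 0 or not gpus:
        raise ValueError("need at least one layer and one GPU")
    total = sum(g.memory_gb for g in gpus)
    # Ideal fractional share per GPU, floored to whole layers.
    shares = [num_layers * g.memory_gb / total for g in gpus]
    counts = [int(s) for s in shares]
    # Give leftover layers to the GPUs with the largest fractional remainder.
    leftover = num_layers - sum(counts)
    order = sorted(range(len(gpus)),
                   key=lambda i: shares[i] - counts[i],
                   reverse=True)
    for i in order[:leftover]:
        counts[i] += 1
    # Convert per-GPU counts into contiguous layer ranges.
    plan: dict[str, range] = {}
    start = 0
    for gpu, count in zip(gpus, counts):
        plan[gpu.name] = range(start, start + count)
        start += count
    return plan


if __name__ == "__main__":
    # Hypothetical mixed pool: two larger cards and two smaller ones.
    pool = [Gpu("card-a", 24), Gpu("card-b", 24),
            Gpu("card-c", 12), Gpu("card-d", 12)]
    for name, layers in partition_layers(80, pool).items():
        print(name, f"layers {layers.start}..{layers.stop - 1}")
```

A real scheduler would also have to weigh interconnect bandwidth, activation memory, and per-card throughput. Memory-proportional splitting is simply the easiest starting point to reason about.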

The protocol is in early research, with the initial version being drafted internally. Whether it becomes a standalone subnet remains an open question.

Leadership explicitly questioned the proliferation of subnets and argued that some innovations may be better structured as applications rather than new protocol layers.

Additional Updates

Aside from these updates, others mentioned during the brief include:

a. OpenClaw instances are receiving a major update next week,

b. OpenClaw revenue generation is underway,

c. Hardware purchases through Covenant are beginning to occur organically, and

d. The team believes the ecosystem may already have too many subnets and emphasized discernment in deciding what should be a subnet versus an application.

Closing Perspective

TGIF #27 did not center on announcements for speculation cycles; it focused on economic durability.

From decentralized training speed to inference cost compression and real-world revenue rails, Covenant AI appears intent on solving structural bottlenecks rather than chasing narrative momentum.

In an ecosystem often driven by novelty, this week’s brief underscored something rarer: operational seriousness.
